Separate AI Helpdesk Triage from Remediation Without a Shared Deploy

A practical Java architecture walkthrough for teams that need different ownership, release cadence, and operational boundaries around AI support workflows.

A helpdesk bot looks straightforward right up to the point where two teams need to own different parts of it. The helpdesk team owns ticket intake, severity, and the workflow around escalation. The knowledge-base team owns remediation text, prompt changes, and the policy churn that follows every VPN rollout, MFA change, and laptop refresh.

That split is where the tidy one-service demo starts lying to you. If everything lives in one app, every KB tweak becomes a helpdesk deployment, every prompt change turns into a shared release event, and the line between “call another capability” and “ship somebody else’s logic in my process” disappears. Nothing builds cross-team harmony like waiting on someone else’s deployment window to update a password-reset hint.

So this tutorial builds the awkward version on purpose. We keep triage in one Quarkus app, publish remediation as a separate A2A agent in another Quarkus app, and let the two talk over JSON-RPC like real remote systems. That extra ceremony has a real business reason behind it: separate ownership, separate release cadence, and a contract both teams can change without pretending they still share one codebase.

What we build

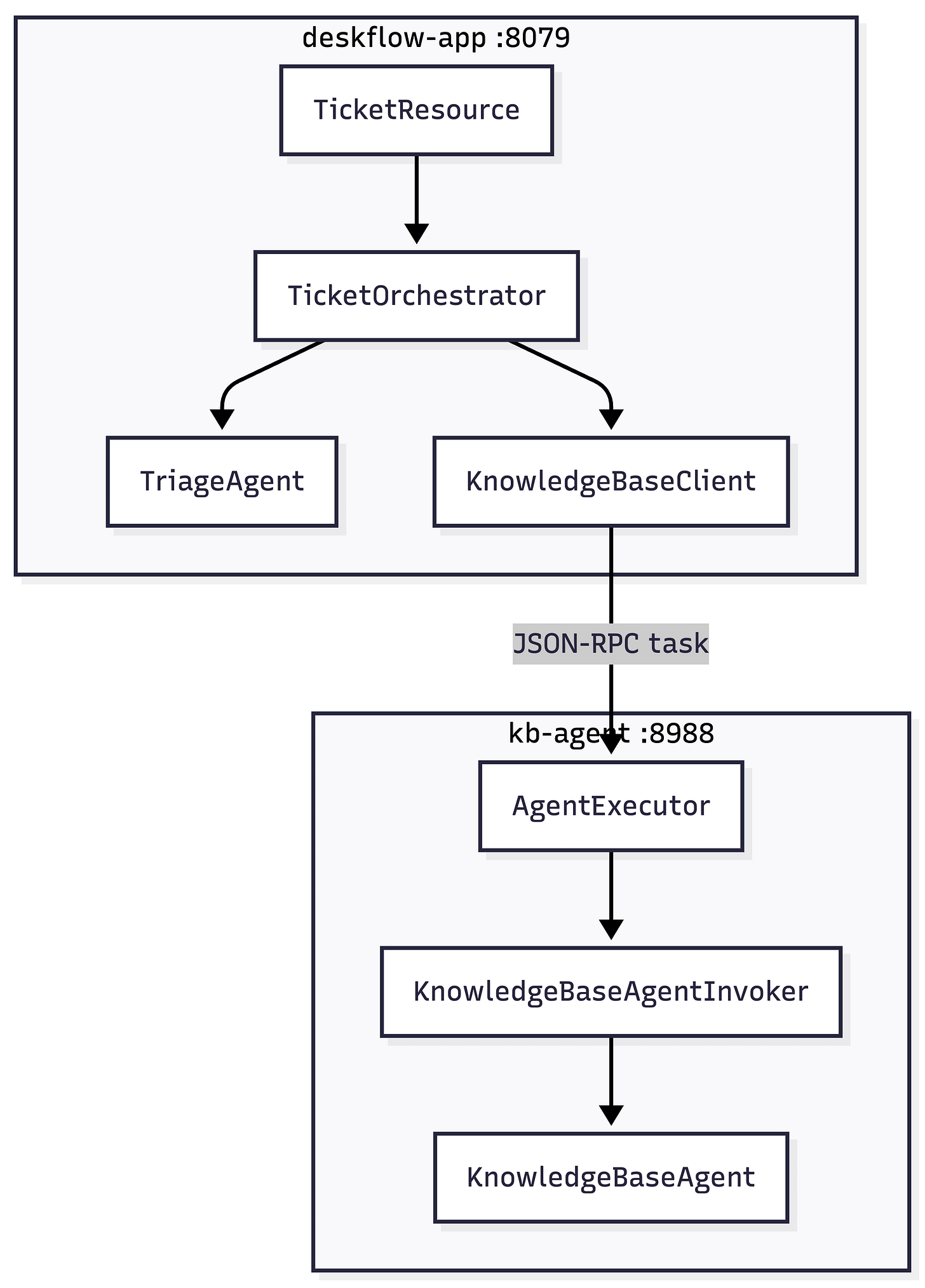

We end up with two processes:

- kb-agent on 8988: an A2A JSON-RPC server backed by a LangChain4j @RegisterAiService
- deskflow-app on 8079: a REST API that runs local triage and then calls the KB agent over A2A

The class list is small on purpose:

- TicketResource: POST /tickets
- TicketOrchestrator: runs triage and the remote KB call
- TriageAgent: local LangChain4j AI service that returns JSON classification
- KnowledgeBaseClient: plain contract for the KB call (no LangChain4j annotations on this type)
- A2aKnowledgeBaseClient: AgenticServices.a2aBuilder(...).build() plus UntypedAgent.invoke(...), so A2A does not go through @RegisterAiService AI-service codegen
- KnowledgeBaseAgent: server-side LangChain4j AI service
- KnowledgeBaseAgentInvoker: @ApplicationScoped bridge; calls the AI service under @ActivateRequestContext so A2A worker threads have an active CDI request scope
- KnowledgeBaseAgentCard: publishes /.well-known/agent-card.json
- KnowledgeBaseExecutorProducer: maps A2A tasks to the KB AI service via KnowledgeBaseAgentInvoker

What you need

I am using the Quarkus CLI for project generation and the generated Maven wrappers for the rest. That keeps the setup readable.

- Java 21+
- Maven 3.9+
- Quarkus CLI installed
- Ollama running on http://localhost:11434
- A pulled model tag such as llama3.2
- jq, for pretty-printing the curl output later on
- Some ☕️

Pull the model once before we start:

```shell
ollama pull llama3.2
```

Both apps read OLLAMA_MODEL, so you can switch to a different pulled tag without editing application.properties.

Generate the project skeleton

Create a working directory, generate two empty Quarkus apps, and add the source folders we will fill by hand:

```shell
mkdir a2a-deskflow
cd a2a-deskflow

quarkus create app dev.deskflow:kb-agent \
  --package-name=dev.kbagent \
  --extension='rest-jackson,quarkus-langchain4j-ollama' \
  --no-code

quarkus create app dev.deskflow:deskflow-app \
  --package-name=dev.deskflow \
  --extension='rest-jackson,quarkus-langchain4j-ollama,quarkus-langchain4j-agentic' \
  --no-code

mkdir -p kb-agent/src/main/java/dev/kbagent
mkdir -p kb-agent/src/test/java/dev/kbagent
mkdir -p kb-agent/src/test/resources
mkdir -p deskflow-app/src/main/java/dev/deskflow/model
mkdir -p deskflow-app/src/test/java/dev/deskflow
```

Create the parent pom.xml in a2a-deskflow/:

```xml
<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 https://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>
    <groupId>dev.deskflow</groupId>
    <artifactId>a2a-deskflow</artifactId>
    <version>1.0.0-SNAPSHOT</version>
    <packaging>pom</packaging>
    <name>A2A DeskFlow (aggregator)</name>

    <modules>
        <module>kb-agent</module>
        <module>deskflow-app</module>
    </modules>
</project>
```

The parent POM only aggregates the modules. Each module keeps its own ./mvnw, which is what we use for tests and dev mode.

Build kb-agent

We start with the server because the main app has nothing useful to call until the KB agent exists.

Change kb-agent/pom.xml

Add the following property and dependencies to kb-agent/pom.xml:

```xml
<!-- Inside <properties>. Must match the A2A spec version pulled in by langchain4j-agentic-a2a (deskflow-app). -->
<a2a.io.sdk.version>0.3.2.Final</a2a.io.sdk.version>

<!-- Inside <dependencies>: -->
<dependency>
    <groupId>io.github.a2asdk</groupId>
    <artifactId>a2a-java-sdk-reference-jsonrpc</artifactId>
    <version>${a2a.io.sdk.version}</version>
</dependency>

<!-- gRPC stubs: referenced from the A2A SDK classpath for optional gRPC transport; not used at
     runtime for JSON-RPC-only apps. Present so ArC can index those types and avoid WARN noise. -->
<dependency>
    <groupId>io.grpc</groupId>
    <artifactId>grpc-stub</artifactId>
</dependency>

<dependency>
    <groupId>io.rest-assured</groupId>
    <artifactId>rest-assured</artifactId>
    <scope>test</scope>
</dependency>
```

The io.grpc:grpc-stub entry is easy to misread. We are not running gRPC in this sample. The A2A Java SDK still carries optional gRPC transport types on the classpath, and Quarkus ArC tries to index io.grpc.stub.Abstract*Stub during augmentation. If grpc-stub is missing, dev mode and tests print Failed to index ... Class does not exist, even though every request stays on JSON-RPC. Adding the stub JAR gives ArC something real to load. The Quarkus BOM supplies the version.

Set kb-agent/src/main/resources/application.properties

```properties
quarkus.http.port=8988

# Advertised base URL for the agent card (what remote clients should call)
deskflow.a2a.public-base-url=http://localhost:8988

# Local Ollama - pull the tag once: ollama pull llama3.2
quarkus.langchain4j.ollama.base-url=http://localhost:11434
quarkus.langchain4j.ollama.chat-model.model-id=${OLLAMA_MODEL:llama3.2}
quarkus.langchain4j.ollama.chat-model.log-requests=true
quarkus.langchain4j.ollama.chat-model.log-responses=true
quarkus.langchain4j.timeout=120s
```

The property to watch is deskflow.a2a.public-base-url. That value ends up in the agent card, so it has to be an address callers can actually reach. localhost is fine for a laptop run. It is useless behind a real ingress.

Create kb-agent/src/main/java/dev/kbagent/KnowledgeBaseAgent.java

```java
package dev.kbagent;

import dev.langchain4j.service.SystemMessage;
import dev.langchain4j.service.UserMessage;
import io.quarkiverse.langchain4j.RegisterAiService;

/**
 * LangChain4j AI service that returns knowledge-base style remediation
 * guidance.
 */
@RegisterAiService
public interface KnowledgeBaseAgent {

    @SystemMessage("""
            You are a Level-1 IT support knowledge base assistant.
            Your job is to return concise, actionable remediation steps
            for common enterprise IT issues.

            Known issue patterns and their standard resolutions:

            VPN / Network connectivity:
            - Check that the GlobalProtect client is version 6.x or later.
            - Reconnect using the campus gateway (vpn.corp.example.com).
            - If the issue persists, flush DNS: ipconfig /flushdns (Windows) or
              sudo dscacheutil -flushcache (macOS).

            Email / Outlook not syncing:
            - Verify the user's mailbox is not over quota (check via admin portal).
            - Remove and re-add the Exchange account in Outlook settings.
            - Run the Microsoft Support and Recovery Assistant (SaRA) tool.

            Password / SSO locked out:
            - Direct the user to the self-service password reset portal:
              https://sspreset.corp.example.com
            - If MFA device is lost, raise a ticket to the Identity team with
              manager approval attached.

            Laptop performance / high CPU:
            - Run Windows Update; pending updates often hold CPU at 100%.
            - Check for runaway processes in Task Manager → sort by CPU.
            - If the device is >4 years old, flag for refresh cycle.

            Software installation request:
            - Check the approved software catalogue at https://apps.corp.example.com.
            - For unapproved software, route to the procurement team for a licence review.
            - Deployment is handled via Intune — user does not need admin rights.

            Printer / peripheral not recognised:
            - Remove and reinstall the device driver from IT's driver repository.
            - Check USB port with a different device to rule out hardware fault.
            - For network printers, verify the printer IP is reachable: ping <printer-ip>.

            If the issue does not match a known pattern:
            - Acknowledge the issue.
            - Provide general diagnostic steps (event viewer, logs, screenshots).
            - Recommend escalation to Level 2 with a list of data to collect first.

            Keep your answer to 3-5 bullet points. Be specific. Avoid generic advice.
            """)
    @UserMessage("""
            Support ticket details:
            - Category : {category}
            - Severity : {severity}
            - Summary : {summary}
            - Details : {details}

            Return the remediation steps for this issue.
            """)
    String findRemediation(String category, String severity, String summary, String details);
}
```

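This knowledge base is deliberately fake but complete: the "KB" is a set of known patterns baked into the system prompt, not a retrieval pipeline. I do not want to drag retrieval into an A2A tutorial unless retrieval is the point.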

Create kb-agent/src/main/java/dev/kbagent/KnowledgeBaseAgentCard.java

```java
package dev.kbagent;

import java.util.List;

import org.eclipse.microprofile.config.inject.ConfigProperty;

import io.a2a.server.PublicAgentCard;
import io.a2a.spec.AgentCapabilities;
import io.a2a.spec.AgentCard;
import io.a2a.spec.AgentSkill;
import io.a2a.spec.TransportProtocol;
import jakarta.enterprise.context.ApplicationScoped;
import jakarta.enterprise.inject.Produces;

@ApplicationScoped
public class KnowledgeBaseAgentCard {

    @ConfigProperty(name = "deskflow.a2a.public-base-url")
    String publicBaseUrl;

    @Produces
    @PublicAgentCard
    public AgentCard agentCard() {
        // Strip one trailing slash, then point at the JSON-RPC endpoint on the root path.
        String base = publicBaseUrl.endsWith("/")
                ? publicBaseUrl.substring(0, publicBaseUrl.length() - 1)
                : publicBaseUrl;
        String jsonRpcUrl = base + "/";

        return new AgentCard.Builder()
                .name("DeskFlow Knowledge Base Agent")
                .description("""
                        Returns Level-1 remediation steps for common enterprise
                        IT support categories including VPN, email, identity,
                        performance, software, and peripherals.
                        """)
                .url(jsonRpcUrl)
                .version("1.0.0")
                .capabilities(new AgentCapabilities.Builder()
                        .streaming(false)
                        .pushNotifications(false)
                        .stateTransitionHistory(false)
                        .build())
                .defaultInputModes(List.of("text"))
                .defaultOutputModes(List.of("text"))
                .preferredTransport(TransportProtocol.JSONRPC.asString())
                .skills(List.of(new AgentSkill.Builder()
                        .id("kb-remediation")
                        .name("IT Remediation Lookup")
                        .description(
                                "Returns actionable remediation steps given a ticket category, severity, summary, and details")
                        .tags(List.of("itsm", "helpdesk", "remediation", "knowledge-base"))
                        .examples(List.of(
                                "VPN not connecting after OS update",
                                "User locked out of SSO following password expiry"))
                        .build()))
                .build();
    }
}
```

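The important bit for io.github.a2asdk 0.3.x is the top-level url. It is the JSON-RPC endpoint that LangChain4j's A2A client resolves, so it has to point at wherever kb-agent actually serves JSON-RPC (the root path here). This SDK line uses nested builder classes (new AgentCard.Builder(), new AgentCapabilities.Builder(), new AgentSkill.Builder()) rather than a static AgentCard.builder() factory.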
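The inline ternary in agentCard() strips exactly one trailing slash and does not trim whitespace. A value like http://kb.example.com// coming from an environment variable would therefore still advertise a double slash. If you want that rule in one testable place, a small static helper is enough. This is a sketch: AgentCardUrls and jsonRpcEndpoint are hypothetical names, not part of the A2A SDK. Like the sample, it assumes the JSON-RPC endpoint sits at the root path.

```java
// Hypothetical helper (not part of the A2A SDK or the sample): derives the
// JSON-RPC endpoint advertised in the agent card from a configured base URL.
final class AgentCardUrls {

    private AgentCardUrls() {
    }

    static String jsonRpcEndpoint(String publicBaseUrl) {
        if (publicBaseUrl == null || publicBaseUrl.isBlank()) {
            throw new IllegalArgumentException("deskflow.a2a.public-base-url must be set");
        }
        String base = publicBaseUrl.trim();
        // Strip every trailing slash so "http://host:8988//" and "http://host:8988" agree.
        while (base.endsWith("/")) {
            base = base.substring(0, base.length() - 1);
        }
        // Assumption carried over from the sample: JSON-RPC is served at the root path.
        return base + "/";
    }
}
```

Inside agentCard(), the call would become .url(AgentCardUrls.jsonRpcEndpoint(publicBaseUrl)).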

Create kb-agent/src/main/java/dev/kbagent/KnowledgeBaseAgentInvoker.java

Quarkus LangChain4j registers @RegisterAiService beans as request-scoped by default. The A2A AgentExecutor runs on a worker thread pool, not on an HTTP request thread, so calling KnowledgeBaseAgent directly from the executor throws ContextNotActiveException. A separate @ApplicationScoped bean with @ActivateRequestContext on findRemediation activates a request context for the duration of that call. Keep it a different bean from KnowledgeBaseExecutorProducer. Putting @ActivateRequestContext on the producer and calling it from the anonymous AgentExecutor inside the same class is self-invocation, and portable CDI does not guarantee that the interceptor runs in that case.

```java
package dev.kbagent;

import jakarta.enterprise.context.ApplicationScoped;
import jakarta.enterprise.context.control.ActivateRequestContext;
import jakarta.inject.Inject;

/**
 * Invokes the request-scoped {@link KnowledgeBaseAgent} from non-request
 * threads (for example the A2A agent executor pool), where CDI's request
 * context is not active by default.
 */
@ApplicationScoped
public class KnowledgeBaseAgentInvoker {

    private final KnowledgeBaseAgent kbAgent;

    @Inject
    public KnowledgeBaseAgentInvoker(KnowledgeBaseAgent kbAgent) {
        this.kbAgent = kbAgent;
    }

    // The interceptor activates a request context around this call, so the
    // request-scoped AI service can be resolved on the executor thread.
    @ActivateRequestContext
    public String findRemediation(String category, String severity, String summary, String details) {
        return kbAgent.findRemediation(category, severity, summary, details);
    }
}
```

Create kb-agent/src/main/java/dev/kbagent/KnowledgeBaseExecutorProducer.java

```java
package dev.kbagent;

import java.util.ArrayList;
import java.util.List;

import org.jboss.logging.Logger;

import io.a2a.server.agentexecution.AgentExecutor;
import io.a2a.server.agentexecution.RequestContext;
import io.a2a.server.events.EventQueue;
import io.a2a.server.tasks.TaskUpdater;
import io.a2a.spec.InvalidParamsError;
import io.a2a.spec.JSONRPCError;
import io.a2a.spec.Message;
import io.a2a.spec.Part;
import io.a2a.spec.TextPart;
import jakarta.enterprise.context.ApplicationScoped;
import jakarta.enterprise.inject.Produces;
import jakarta.inject.Inject;

/**
 * Bridges the A2A task lifecycle to the {@link KnowledgeBaseAgent} AI service.
 * <p>
 * The {@code deskflow-app} client sends parameters as ordered {@link TextPart}
 * values (same order as
 * {@code AgenticServices.a2aBuilder(...).inputKeys(...)}). This executor reads
 * them by index:
 * {@code [0] category}, {@code [1] severity}, {@code [2] summary},
 * {@code [3] details}.
 */
@ApplicationScoped
public class KnowledgeBaseExecutorProducer {

    private static final Logger LOG = Logger.getLogger(KnowledgeBaseExecutorProducer.class);

    private final KnowledgeBaseAgentInvoker kbAgentInvoker;

    @Inject
    public KnowledgeBaseExecutorProducer(KnowledgeBaseAgentInvoker kbAgentInvoker) {
        this.kbAgentInvoker = kbAgentInvoker;
    }

    @Produces
    public AgentExecutor agentExecutor() {
        return new AgentExecutor() {

            @Override
            public void execute(RequestContext context, EventQueue eventQueue) throws JSONRPCError {
                TaskUpdater updater = new TaskUpdater(context, eventQueue);
                LOG.info("[KBAgent] Received A2A task request");

                // A brand-new task is submitted first, then moved to "working".
                if (context.getTask() == null) {
                    updater.submit();
                }
                updater.startWork();

                // Collect the text parts in the order the client sent them.
                List<String> inputs = new ArrayList<>();
                Message message = context.getMessage();
                if (message != null && message.getParts() != null) {
                    for (Part<?> part : message.getParts()) {
                        if (part instanceof TextPart textPart) {
                            inputs.add(textPart.getText());
                        }
                    }
                }

                if (inputs.size() < 4) {
                    throw new InvalidParamsError(
                            "Expected 4 text parts: category, severity, summary, details. Got: " + inputs.size());
                }

                String category = inputs.get(0);
                String severity = inputs.get(1);
                String summary = inputs.get(2);
                String details = inputs.get(3);

                LOG.infof("[KBAgent] Looking up remediation - category=%s severity=%s", category, severity);
                // Goes through the invoker so the request-scoped AI service has an active context.
                String remediation = kbAgentInvoker.findRemediation(category, severity, summary, details);
                LOG.debugf("[KBAgent] Remediation result: %s", remediation);

                updater.addArtifact(List.of(new TextPart(remediation)));
                updater.complete();
            }

            @Override
            public void cancel(RequestContext context, EventQueue eventQueue) throws JSONRPCError {
                new TaskUpdater(context, eventQueue).cancel();
            }
        };
    }
}
```

This class is the real seam in the whole sample. The client sends four ordered text parts, and the server reads four ordered text parts by index. If you reorder the parameters on one side and forget the other, nothing fails loudly. The model still runs. It just receives garbage in a very confident format. Only a message with fewer than four text parts trips the InvalidParamsError check. KnowledgeBaseAgentInvoker exists only so those four values reach KnowledgeBaseAgent without a ContextNotActiveException on the A2A executor thread.
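Because the ordering rule spans two modules, one way to make it harder to break is to give the four values a name. The record below is a hypothetical sketch, not part of the sample or the A2A SDK. It keeps the wire order in one place and fails loudly when a message arrives short. Sharing the type would need a small common artifact. Copying it into each module keeps the two release cadences independent.

```java
import java.util.List;

// Hypothetical value type (not in the sample): names the four-part A2A contract
// once, so client and server agree on a single definition of the order.
record KbRequest(String category, String severity, String summary, String details) {

    // Wire order of the TextParts: index 0 = category ... index 3 = details.
    static final List<String> PART_ORDER = List.of("category", "severity", "summary", "details");

    // Server side: rebuild the request from ordered text parts, failing on a short message.
    static KbRequest fromParts(List<String> parts) {
        int got = parts == null ? 0 : parts.size();
        if (got < PART_ORDER.size()) {
            throw new IllegalArgumentException(
                    "Expected " + PART_ORDER.size() + " text parts " + PART_ORDER + ". Got: " + got);
        }
        return new KbRequest(parts.get(0), parts.get(1), parts.get(2), parts.get(3));
    }

    // Client side: emit the parts in the same order. Nulls become "" because
    // List.of rejects null elements.
    List<String> toParts() {
        return List.of(orEmpty(category), orEmpty(severity), orEmpty(summary), orEmpty(details));
    }

    private static String orEmpty(String value) {
        return value == null ? "" : value;
    }
}
```

In the executor, KbRequest.fromParts(inputs) would replace the four inputs.get(i) calls, with the IllegalArgumentException translated into InvalidParamsError.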

Build deskflow-app

Now we build the caller: the REST API that classifies a ticket locally and then asks the KB agent for remediation text.

Add dependencies to deskflow-app/pom.xml

```xml
<!-- Required for AgenticServices.a2aBuilder() at runtime (DummyA2AService otherwise). -->
<dependency>
    <groupId>dev.langchain4j</groupId>
    <artifactId>langchain4j-agentic-a2a</artifactId>
</dependency>

<!-- See kb-agent: satisfies the ArC indexer for optional gRPC types from the A2A client stack. -->
<dependency>
    <groupId>io.grpc</groupId>
    <artifactId>grpc-stub</artifactId>
</dependency>

<dependency>
    <groupId>io.quarkus</groupId>
    <artifactId>quarkus-junit-mockito</artifactId>
    <scope>test</scope>
</dependency>

<dependency>
    <groupId>io.rest-assured</groupId>
    <artifactId>rest-assured</artifactId>
    <scope>test</scope>
</dependency>
```

This is the same grpc-stub entry as in kb-agent. The client dependency graph also surfaces gRPC stub types to ArC.

Set deskflow-app/src/main/resources/application.properties

```properties
quarkus.http.port=8079

quarkus.langchain4j.ollama.base-url=http://localhost:11434
quarkus.langchain4j.ollama.chat-model.model-id=${OLLAMA_MODEL:llama3.2}
quarkus.langchain4j.ollama.chat-model.log-requests=true
quarkus.langchain4j.ollama.chat-model.log-responses=true
quarkus.langchain4j.timeout=120s

# Remote KB agent (JSON-RPC). Must match deskflow.a2a.public-base-url on kb-agent for local runs.
deskflow.kb-agent.url=http://localhost:8988
a2a.blocking.agent.timeout.seconds=60
```

deskflow.kb-agent.url points at the remote agent, and a2a.blocking.agent.timeout.seconds gives the A2A call a little more patience than the default. Local models are not fast just because they are local.
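That property tunes the A2A side of the call. You might also want deskflow-app itself to put an upper bound on the remote hop and return a visible hint instead of failing the whole ticket. A decorator around KnowledgeBaseClient is one way to do that. The sketch below is hypothetical: TimeBoundedKnowledgeBaseClient and its fallback text are not part of the sample. It repeats the KnowledgeBaseClient interface so it compiles on its own.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.TimeoutException;

// Same shape as the sample's KnowledgeBaseClient, repeated so this sketch compiles alone.
interface KnowledgeBaseClient {
    String findRemediation(String category, String severity, String summary, String details);
}

// Hypothetical decorator: bounds the remote KB call on the caller side and
// degrades to a visible fallback hint instead of failing the ticket.
final class TimeBoundedKnowledgeBaseClient implements KnowledgeBaseClient {

    static final String FALLBACK =
            "Remediation lookup unavailable; escalate to Level 2 with logs and screenshots.";

    private final KnowledgeBaseClient delegate;
    private final long timeoutMillis;

    TimeBoundedKnowledgeBaseClient(KnowledgeBaseClient delegate, long timeoutMillis) {
        this.delegate = delegate;
        this.timeoutMillis = timeoutMillis;
    }

    @Override
    public String findRemediation(String category, String severity, String summary, String details) {
        // Uses the common pool for brevity; a real service would pass its own executor.
        CompletableFuture<String> call = CompletableFuture.supplyAsync(
                () -> delegate.findRemediation(category, severity, summary, details));
        try {
            return call.get(timeoutMillis, TimeUnit.MILLISECONDS);
        } catch (TimeoutException e) {
            // Marks the future cancelled; it does not interrupt the running remote call.
            call.cancel(true);
            return FALLBACK;
        } catch (ExecutionException e) {
            return FALLBACK;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return FALLBACK;
        }
    }
}
```

Cancelling the future only stops the caller from waiting. kb-agent still finishes the task it was given, so the fallback is a user-facing choice, not a way to save model time.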

Create deskflow-app/src/main/java/dev/deskflow/model/SupportTicket.java

```java
package dev.deskflow.model;

public record SupportTicket(String id, String summary, String details, String reportedBy) {}
```

Create deskflow-app/src/main/java/dev/deskflow/model/TriageResult.java

```java
package dev.deskflow.model;

public record TriageResult(
        String ticketId,
        String severity,
        String category,
        String remediationHint,
        boolean escalationRequired) {}
```

Create deskflow-app/src/main/java/dev/deskflow/TriageAgent.java

```java
package dev.deskflow;

import dev.langchain4j.service.SystemMessage;
import dev.langchain4j.service.UserMessage;
import io.quarkiverse.langchain4j.RegisterAiService;

@RegisterAiService
public interface TriageAgent {

    @SystemMessage("""
            You are a helpdesk triage specialist.
            Given a support ticket summary and details, return ONLY a JSON object
            with no Markdown fences and no extra text, following this exact schema:
            {
              "severity": "<CRITICAL|HIGH|MEDIUM|LOW>",
              "category": "<VPN|EMAIL|IDENTITY|PERFORMANCE|SOFTWARE|PERIPHERAL|OTHER>",
              "escalationRequired": <true|false>
            }

            Severity rules:
            - CRITICAL: system-wide outage, data loss, security incident
            - HIGH: single user fully blocked, deadline impact
            - MEDIUM: degraded functionality, workaround exists
            - LOW: cosmetic, informational, or enhancement request

            Escalation rules:
            - escalationRequired = true when severity is CRITICAL or HIGH
              AND the issue cannot be resolved with standard Level-1 steps.
            """)
    @UserMessage("""
            Ticket summary : {summary}
            Ticket details : {details}
            """)
    String classify(String summary, String details);
}
```

I kept the output as raw JSON because it makes the tutorial easy to read. In production I would want stronger guardrails or structured output.

Create deskflow-app/src/main/java/dev/deskflow/KnowledgeBaseClient.java

Keep this as a plain interface with no @RegisterAiService, no @Agent, and no @A2AClientAgent on the type. Quarkus LangChain4j treats @A2AClientAgent as one of the agentic annotations that imply an AI service (AnnotationsImpliesAiServiceBuildItem in the agentic deployment module). If you also slap @RegisterAiService on the same interface, the build generates KnowledgeBaseClient$$QuarkusImpl that runs through AiServiceMethodImplementationSupport and expects a @UserMessage template. A pure A2A hop has no such template, so you either get IllegalArgumentException: template cannot be null or blank or the Improper invocation of a standalone agent outside of an agentic system warning followed by the same failure.

The fix used in this repo: inject KnowledgeBaseClient by type, implement it with a normal CDI bean, and call AgenticServices.a2aBuilder(baseUrl).inputKeys(...).outputKey("remediationHint").build() once. That returns an UntypedAgent; invoke(Map) performs the remote A2A call without pretending the method is a chat prompt.

```java
package dev.deskflow;

/**
 * Contract for calling the remote knowledge-base agent. Implementation uses LangChain4j A2A programmatically
 * so we do not mix {@code @RegisterAiService} with agent-only transports (that combination routes through
 * {@code AiServiceMethodImplementationSupport} and expects a {@code @UserMessage} template).
 */
public interface KnowledgeBaseClient {

    String findRemediation(String category, String severity, String summary, String details);
}
```

Create deskflow-app/src/main/java/dev/deskflow/A2aKnowledgeBaseClient.java

```java
package dev.deskflow;

import dev.langchain4j.agentic.AgenticServices;
import dev.langchain4j.agentic.UntypedAgent;
import jakarta.enterprise.context.ApplicationScoped;
import jakarta.inject.Inject;
import java.util.LinkedHashMap;
import java.util.Map;
import org.eclipse.microprofile.config.inject.ConfigProperty;

/**
 * Remote KB lookup over A2A using LangChain4j's programmatic client. Input key order must match the
 * {@code TextPart} order expected by {@code KnowledgeBaseExecutorProducer} on {@code kb-agent}.
 */
@ApplicationScoped
public class A2aKnowledgeBaseClient implements KnowledgeBaseClient {

    private final UntypedAgent remoteKb;

    @Inject
    public A2aKnowledgeBaseClient(@ConfigProperty(name = "deskflow.kb-agent.url") String kbAgentBaseUrl) {
        String base = kbAgentBaseUrl.trim();
        // Built once per bean; the inputKeys order is the order kb-agent reads the parts in.
        this.remoteKb = AgenticServices.a2aBuilder(base)
                .inputKeys("category", "severity", "summary", "details")
                .outputKey("remediationHint")
                .build();
    }

    @Override
    public String findRemediation(String category, String severity, String summary, String details) {
        Map<String, Object> inputs = new LinkedHashMap<>();
        inputs.put("category", category);
        inputs.put("severity", severity);
        inputs.put("summary", summary);
        inputs.put("details", details);

        Object result = remoteKb.invoke(inputs);
        if (result == null) {
            return "";
        }
        if (result instanceof String s) {
            return s;
        }
        return result.toString();
    }
}
```

Use LinkedHashMap so the key order matches the inputKeys order. That order must stay aligned with the TextPart index contract in KnowledgeBaseExecutorProducer on kb-agent. Treat them as a pair.
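The map order and the inputKeys(...) order have to agree, so one option is to declare the keys once and derive both from that list. This is a hypothetical helper, not part of the sample. It assumes inputKeys accepts String varargs, as the call in A2aKnowledgeBaseClient suggests.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper (not in the sample): declares the A2A input keys once and
// builds the ordered input map from positional values.
final class KbInputs {

    static final List<String> KEYS = List.of("category", "severity", "summary", "details");

    private KbInputs() {
    }

    // Array form for a varargs builder call such as inputKeys(KbInputs.keyArray()).
    static String[] keyArray() {
        return KEYS.toArray(String[]::new);
    }

    static Map<String, Object> of(String... values) {
        if (values.length != KEYS.size()) {
            throw new IllegalArgumentException("Expected " + KEYS.size() + " values, got " + values.length);
        }
        Map<String, Object> inputs = new LinkedHashMap<>();
        for (int i = 0; i < values.length; i++) {
            // LinkedHashMap keeps insertion order, so iteration follows KEYS.
            inputs.put(KEYS.get(i), values[i]);
        }
        return inputs;
    }
}
```

The constructor would then call .inputKeys(KbInputs.keyArray()), and findRemediation would pass KbInputs.of(category, severity, summary, details) to remoteKb.invoke(...).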

Create deskflow-app/src/main/java/dev/deskflow/TicketOrchestrator.java

```java
package dev.deskflow;

import com.fasterxml.jackson.databind.JsonNode;
import com.fasterxml.jackson.databind.ObjectMapper;
import dev.deskflow.model.SupportTicket;
import dev.deskflow.model.TriageResult;
import jakarta.enterprise.context.ApplicationScoped;
import jakarta.inject.Inject;
import org.jboss.logging.Logger;

@ApplicationScoped
public class TicketOrchestrator {

    private static final Logger LOG = Logger.getLogger(TicketOrchestrator.class);

    private final TriageAgent triageAgent;
    private final KnowledgeBaseClient kbClient;
    private final ObjectMapper mapper = new ObjectMapper();

    @Inject
    public TicketOrchestrator(TriageAgent triageAgent, KnowledgeBaseClient kbClient) {
        this.triageAgent = triageAgent;
        this.kbClient = kbClient;
    }

    public TriageResult process(SupportTicket ticket) {
        LOG.infof("[Orchestrator] Processing ticket %s: %s", ticket.id(), ticket.summary());

        // Step 1: local triage returns a JSON string.
        String triageJson = triageAgent.classify(ticket.summary(), ticket.details());
        LOG.debugf("[Orchestrator] Triage result JSON: %s", triageJson);

        String severity;
        String category;
        boolean escalation;
        try {
            JsonNode node = mapper.readTree(triageJson);
            severity = node.get("severity").asText();
            category = node.get("category").asText();
            escalation = node.get("escalationRequired").asBoolean();
        } catch (Exception e) {
            // Malformed JSON or a missing field: degrade visibly instead of failing the request.
            LOG.errorf(e, "[Orchestrator] Failed to parse triage JSON, defaulting to MEDIUM/OTHER");
            severity = "MEDIUM";
            category = "OTHER";
            escalation = false;
        }

        category = sanitizeCategory(category);

        // Step 2: remote remediation lookup over A2A.
        LOG.infof("[Orchestrator] Calling remote KB agent - category=%s severity=%s", category, severity);
        String remediation = kbClient.findRemediation(category, severity, ticket.summary(), ticket.details());

        return new TriageResult(ticket.id(), severity, category, remediation, escalation);
    }

    /**
     * Models sometimes emit multiple enum labels (e.g. {@code "PERFORMANCE|SOFTWARE"}). The KB contract expects a
     * single category token.
     */
    private static String sanitizeCategory(String category) {
        if (category == null || category.isBlank()) {
            return "OTHER";
        }
        // Limit -1 keeps empty segments, so a bare "|" yields "" instead of an empty array.
        String first = category.split("\\|", -1)[0].trim();
        return first.isEmpty() ? "OTHER" : first;
    }
}
```

The fallback to MEDIUM / OTHER is there because small local models are sometimes stubborn about returning raw JSON. I would rather degrade in a visible way than explode on a parse error in the middle of the tutorial.
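sanitizeCategory only handles the pipe case. A model can also return a lowercase label or a category the prompt never listed (NETWORK, say), and that value would travel to the KB agent unchanged. Severity is not checked at all. Here is a sketch of a stricter normalizer that clamps both fields to the enums in the TriageAgent prompt. TriageNormalizer and its nested enums are hypothetical names, not part of the sample.

```java
import java.util.Locale;

// Hypothetical normalizer (not in the sample): clamps model output to the enum
// values the TriageAgent prompt allows, falling back to MEDIUM / OTHER.
final class TriageNormalizer {

    enum Severity { CRITICAL, HIGH, MEDIUM, LOW }

    enum Category { VPN, EMAIL, IDENTITY, PERFORMANCE, SOFTWARE, PERIPHERAL, OTHER }

    private TriageNormalizer() {
    }

    static String severity(String raw) {
        return normalize(raw, Severity.class, Severity.MEDIUM).name();
    }

    static String category(String raw) {
        return normalize(raw, Category.class, Category.OTHER).name();
    }

    // Takes the first label of "A|B", trims and upper-cases it, and maps any
    // value outside the enum to the fallback instead of throwing.
    private static <E extends Enum<E>> E normalize(String raw, Class<E> type, E fallback) {
        if (raw == null || raw.isBlank()) {
            return fallback;
        }
        String first = raw.split("\\|", -1)[0].trim().toUpperCase(Locale.ROOT);
        try {
            return Enum.valueOf(type, first);
        } catch (IllegalArgumentException e) {
            return fallback;
        }
    }
}
```

In process(), severity = TriageNormalizer.severity(severity) and category = TriageNormalizer.category(category) would replace the sanitizeCategory call and keep the same visible MEDIUM / OTHER fallback.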

Create deskflow-app/src/main/java/dev/deskflow/TicketResource.java

```java
package dev.deskflow;

import dev.deskflow.model.SupportTicket;
import dev.deskflow.model.TriageResult;
import jakarta.inject.Inject;
import jakarta.ws.rs.Consumes;
import jakarta.ws.rs.POST;
import jakarta.ws.rs.Path;
import jakarta.ws.rs.Produces;
import jakarta.ws.rs.core.MediaType;
import java.util.UUID;

@Path("/tickets")
@Consumes(MediaType.APPLICATION_JSON)
@Produces(MediaType.APPLICATION_JSON)
public class TicketResource {

    private final TicketOrchestrator orchestrator;

    @Inject
    public TicketResource(TicketOrchestrator orchestrator) {
        this.orchestrator = orchestrator;
    }

    @POST
    public TriageResult submitTicket(SupportTicket ticket) {
        // Assign an id when the caller did not send one.
        SupportTicket enriched =
                ticket.id() == null || ticket.id().isBlank()
                        ? new SupportTicket(
                                UUID.randomUUID().toString(),
                                ticket.summary(),
                                ticket.details(),
                                ticket.reportedBy())
                        : ticket;
        return orchestrator.process(enriched);
    }
}
```

That is the whole application path on the caller side: HTTP request in, local triage, remote A2A call, JSON response out.

Add tests before we run it

The end-to-end flow still depends on Ollama, so I want two smaller tests as well: one for the public agent card, and one for the REST path with mocks.

Create kb-agent/src/test/resources/application.properties

```properties
%test.quarkus.langchain4j.ollama.devservices.enabled=false
%test.quarkus.langchain4j.ollama.base-url=http://127.0.0.1:11434
%test.quarkus.langchain4j.ollama.chat-model.model-id=llama3.2
```

Create kb-agent/src/test/java/dev/kbagent/AgentCardResourceTest.java

```java
package dev.kbagent;

import static org.hamcrest.Matchers.equalTo;

import org.junit.jupiter.api.Test;

import io.quarkus.test.junit.QuarkusTest;
import io.restassured.RestAssured;

@QuarkusTest
class AgentCardResourceTest {

    @Test
    void wellKnownAgentCardIsServed() {
        RestAssured.given()
                .when()
                .get("/.well-known/agent-card.json")
                .then()
                .statusCode(200)
                .body("name", equalTo("DeskFlow Knowledge Base Agent"));
    }
}
```

Create deskflow-app/src/test/java/dev/deskflow/TicketResourceTest.java

```java
package dev.deskflow;

import static io.restassured.RestAssured.given;
import static org.hamcrest.Matchers.containsString;
import static org.hamcrest.Matchers.equalTo;
import static org.mockito.ArgumentMatchers.anyString;
import static org.mockito.Mockito.when;

import io.quarkus.test.InjectMock;
import io.quarkus.test.junit.QuarkusTest;
import org.junit.jupiter.api.Test;

@QuarkusTest
class TicketResourceTest {

    @InjectMock
    TriageAgent triageAgent;

    @InjectMock
    KnowledgeBaseClient knowledgeBaseClient;

    @Test
    void postTicketReturnsTriageAndRemediation() {
        when(triageAgent.classify(anyString(), anyString()))
                .thenReturn("{\"severity\":\"HIGH\",\"category\":\"VPN\",\"escalationRequired\":true}");
        when(knowledgeBaseClient.findRemediation(anyString(), anyString(), anyString(), anyString()))
                .thenReturn("- Verify GlobalProtect version.\n- Reconnect to vpn.corp.example.com.\n");

        given().contentType("application/json")
                .body("""
                        {
                          "summary": "VPN will not connect",
                          "details": "After OS update",
                          "reportedBy": "test@example.com"
                        }
                        """)
                .when()
                .post("/tickets")
                .then()
                .statusCode(200)
                .body("severity", equalTo("HIGH"))
                .body("category", equalTo("VPN"))
                .body("escalationRequired", equalTo(true))
                .body("remediationHint", containsString("GlobalProtect"));
    }
}
```

The deskflow-app test does not need extra test properties because it mocks both TriageAgent and KnowledgeBaseClient. The point there is the REST path and orchestration shape, not live model output.

Run both services

Start the KB agent first:

```shell
cd a2a-deskflow/kb-agent
./mvnw quarkus:dev
```

Open another terminal and check the agent card:

```shell
cd a2a-deskflow
curl -s http://localhost:8988/.well-known/agent-card.json | jq .name
```

You should see:

```text
"DeskFlow Knowledge Base Agent"
```

Open one more terminal and start the main app:

```shell
cd a2a-deskflow/deskflow-app
./mvnw quarkus:dev
```

At this point you should have:

- kb-agent listening on http://localhost:8988
- deskflow-app listening on http://localhost:8079

Send a real ticket through the whole flow

Go back to the terminal you used for the agent card check (or open another one) and send a VPN-flavored ticket:

```shell
curl -s -X POST http://localhost:8079/tickets \
  -H "Content-Type: application/json" \
  -d '{
    "summary": "Cannot connect to corporate VPN after upgrading macOS",
    "details": "GlobalProtect shows error: Gateway not responding. Tried restarting.",
    "reportedBy": "alice@deskflow.example"
  }' | jq .
```

You should get a JSON object with this shape:

```json
{
  "ticketId": "8f9d9f8d-1e36-4ce9-b40a-6a0ff1a6c3d5",
  "severity": "HIGH",
  "category": "VPN",
  "remediationHint": "- Check that the GlobalProtect client is version 6.x or later.\n- Reconnect using the campus gateway...\n",
  "escalationRequired": true
}
```

The exact severity, category, and remediation text can move around a little depending on your model. The important part is the path:

1. deskflow-app accepts the ticket.
2. TriageAgent returns JSON.
3. TicketOrchestrator parses that JSON.
4. KnowledgeBaseClient sends the four text parts over A2A.
5. KnowledgeBaseExecutorProducer calls KnowledgeBaseAgentInvoker, which activates a request scope and delegates to KnowledgeBaseAgent.
6. The remediation text comes back as the response payload.

Watch the logs while you run that request.

kb-agent should log:

```text
[KBAgent] Received A2A task request
[KBAgent] Looking up remediation - category=... severity=...
```

deskflow-app should log:

```text
[Orchestrator] Processing ticket ...
[Orchestrator] Calling remote KB agent - category=... severity=...
```

Run the fast tests

When you want a quick check without starting both apps by hand, run the module tests:

```shell
cd a2a-deskflow/kb-agent
./mvnw test

cd ../deskflow-app
./mvnw test
```

What those tests prove:

- kb-agent serves the public agent card
- deskflow-app accepts a REST ticket and returns the expected response shape with mocked AI calls

What they do not prove:

- live end-to-end A2A over two running processes
- real model behavior in Ollama

That is why I keep both the fast tests and the manual smoke test.
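If you want the manual smoke test scripted in Java rather than curl, the JDK's java.net.http client is enough. The class below is a hypothetical sketch (TicketSmokeTest is not part of the sample) that sends the same VPN ticket as the curl call. It checks only the HTTP status, for the same reason the manual test does not pin down model output.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.time.Duration;

// Hypothetical smoke-test client (not in the sample): the Java equivalent of the
// curl call, using only java.net.http.
final class TicketSmokeTest {

    static final String TICKET_JSON = """
            {
              "summary": "Cannot connect to corporate VPN after upgrading macOS",
              "details": "GlobalProtect shows error: Gateway not responding. Tried restarting.",
              "reportedBy": "alice@deskflow.example"
            }
            """;

    private TicketSmokeTest() {
    }

    static HttpRequest ticketRequest(String baseUrl) {
        return HttpRequest.newBuilder(URI.create(baseUrl + "/tickets"))
                // Generous timeout: triage plus the remote KB call both hit a local model.
                .timeout(Duration.ofMinutes(3))
                .header("Content-Type", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(TICKET_JSON))
                .build();
    }

    // Sends the ticket and returns the raw JSON body; requires both apps to be running.
    static String submit(String baseUrl) throws Exception {
        HttpClient client = HttpClient.newHttpClient();
        HttpResponse<String> response =
                client.send(ticketRequest(baseUrl), HttpResponse.BodyHandlers.ofString());
        if (response.statusCode() != 200) {
            throw new IllegalStateException("Unexpected status " + response.statusCode() + ": " + response.body());
        }
        return response.body();
    }
}
```

With both apps running, TicketSmokeTest.submit("http://localhost:8079") returns the same JSON the curl call prints.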

Close the loop

We ended up with a helpdesk service that keeps local triage local and sends remediation lookup across a real service boundary. That is the whole point of the sample. A2A here is not magic orchestration dust. It is a clean contract between two services, which is exactly why it is useful.