Governed Knowledge for Enterprise AI Agents

A platform approach for exposing proprietary engineering knowledge to agents with ownership, access control, quality checks, and measurable adoption.

AI coding agents are strange new colleagues. They can refactor a service in ten minutes, then confidently invent the wrong authentication helper because your real one lives in an internal wiki last edited by someone called “platform-core” three reorganizations ago.

That is not a model problem alone. It is an enterprise information problem.

Most companies already have the knowledge their agents need. It sits in framework documentation, service templates, reference architectures, ADRs, runbooks, security rules, API catalogs, migration guides, and the quiet little README in the repository everybody copies from but nobody admits is the real platform contract.

The missing layer is not “more context” in the abstract. The missing layer is a managed way to publish proprietary engineering knowledge to agents, measure whether the agent can use it, and keep the whole thing boring enough for security and platform teams to sign off.

That is where documentation MCP servers and similar context systems become more interesting than their first demo suggests.

The Small Demo Hides the Enterprise Problem

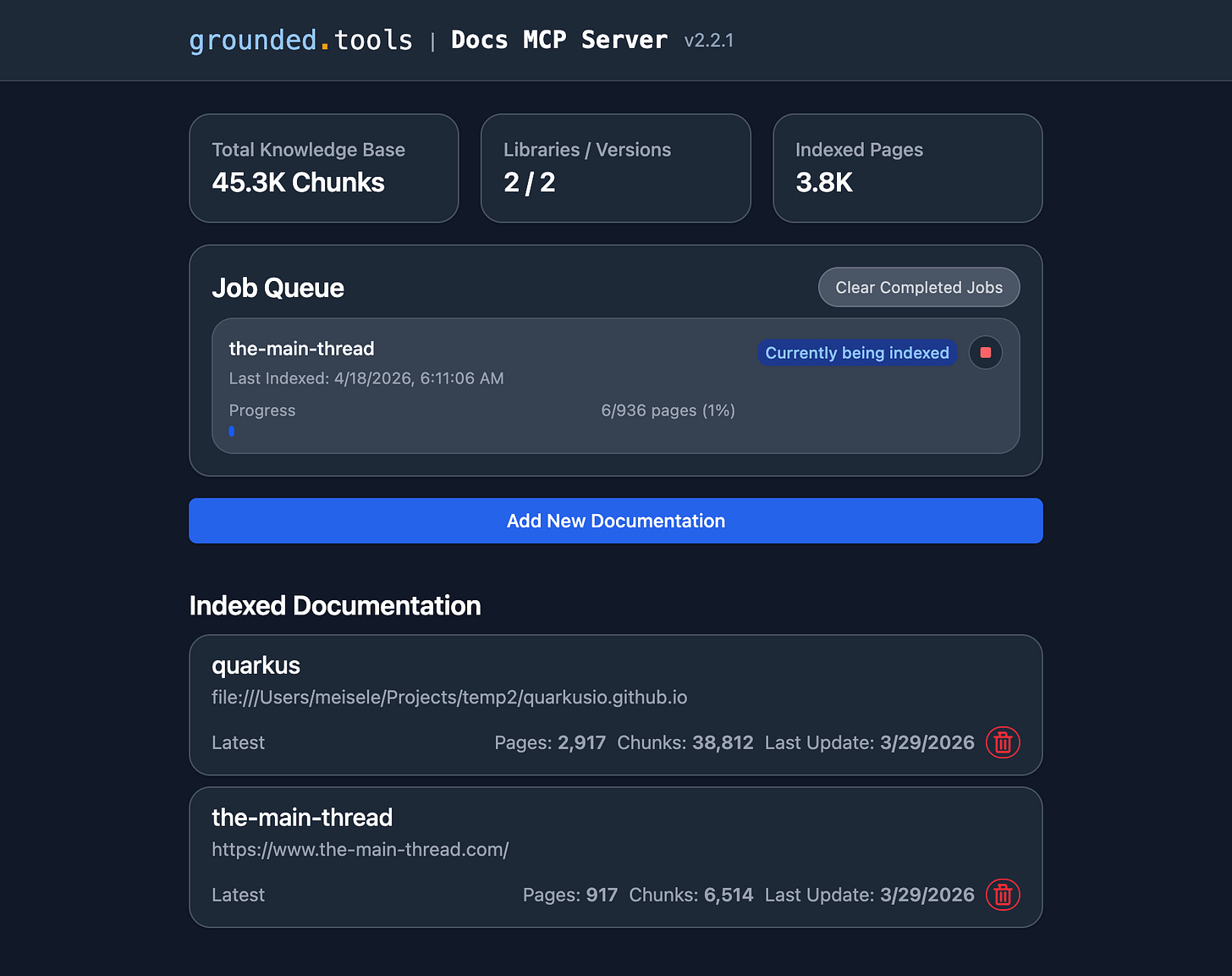

The basic pitch is simple. A tool like Grounded Docs MCP Server indexes documentation from websites, GitHub, npm, PyPI, and local files. It exposes that knowledge through the Model Context Protocol, so an MCP-aware coding assistant can search the right documentation instead of guessing from training data.

The repository positions itself as an open-source alternative to Context7, Nia, and Ref.Tools. That list alone shows a growing product category forming around one shared pain: agents that guess when they should look things up. Some examples:

Context7 focuses on up-to-date, version-specific public library documentation for coding assistants.

Ref describes an MCP documentation server for public and private documentation.

Nia reaches wider, across repositories, documentation, Slack, Google Drive, and local knowledge sources.

Grounded Docs is appealing when you want an open-source, local, indexable documentation server with CLI, web UI, MCP, rich file support, and optional semantic search.

For a developer laptop, that is already useful. Index the React docs, ask about useEffect, get less nonsense. Fine.

For an enterprise, the interesting question is different:

What if the authoritative source is not React? What if it is your internal Java starter, your approved Terraform modules, your Kubernetes workload blueprint, your identity provider integration, your payment retry policy, your logging schema, and the one blessed way to get a service through production review?

That is where these tools stop being nice agent accessories and become part of the software supply chain.

Treat Internal Knowledge Like a Product

Enterprises are used to treating code as an asset. The build pipeline has owners, versioning, tests, signing, deployment rules, and rollback paths.

Internal knowledge rarely gets the same treatment. It gets pages. Pages get copied. Copies get old. Old pages get embedded into onboarding decks. Decks get screenshotted. Then an agent arrives, reads one random artifact, and now the company has automated folklore.

The first shift is to stop thinking about “the docs” as one pile.

Agents need several different classes of proprietary information:

Internal framework manuals - How to use the company service framework, starter dependencies, extension points, release process, and supported versions.

Blueprints and golden paths - The approved shape for a REST service, event-driven service, batch job, frontend app, data pipeline, or internal tool.

Best practices - Logging, metrics, tracing, auth, secrets, error handling, persistence, testing, threat modeling, and operational readiness.

Decision records - Why the platform works this way, where the hard constraints are, and which older approaches are no longer allowed.

Reference implementations - Small but complete examples that compile, deploy, and pass checks.

API and schema catalogs - OpenAPI, AsyncAPI, protobuf, GraphQL schemas, database contracts, and event formats.

Operational knowledge - Runbooks, incident patterns, SLOs, alert response, dashboard conventions, and known failure modes.

Security and compliance rules - The bits that should shape code before a human reviewer has to repeat them for the thousandth time.

Some of this is documentation. Some of it is code. Some of it is policy. Some of it is institutional memory that currently survives because a senior engineer knows who to ask. That is charming in the same way an unreplicated production database is charming.

An enterprise context system should make those sources available to agents as versioned, owned, testable knowledge.

MCP Is the Access Point, Not the Whole System

MCP matters because it gives agents a common way to discover and call tools. The official MCP authorization specification also gives the security discussion a place to stand: HTTP-based MCP servers can use OAuth 2.1-based authorization, bearer tokens, protected resource metadata, and proper token validation.

That does not mean “put an MCP server in front of Confluence” is the whole architecture. It is only the front door.

A useful enterprise setup has a few layers:

Source ingestion - Pull from Git repositories, docs sites, API catalogs, package registries, local folders, wikis, document stores, and approved exports from collaboration tools.

Normalization - Convert documents into clean Markdown or structured chunks, preserve headings and code blocks, remove navigation junk, and attach metadata like source, owner, version, product area, and sensitivity.

Indexing - Store both lexical search data and embeddings. Lexical search is still good for exact symbols, package names, error codes, and policy IDs. Embeddings help when the user asks in human language.

Policy filtering - Apply access control before retrieval, not after the model has seen the content. An engineer in payments should not get merger planning notes because both contain the word “settlement.”

Retrieval tools - Expose small, boring tools: search, fetch, list sources, resolve versions, find an approved blueprint, retrieve a reference implementation, or explain the current production standard for a task.

Observation - Log what the agent searched, which documents were returned, which answer cited them, and where the agent still guessed.

Evaluation - Continuously test retrieval quality and answer quality with realistic engineering tasks.
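To make the normalization layer concrete, here is a minimal Python sketch, assuming Markdown input. It splits a page at headings, never splits inside a fenced code block, and copies source metadata such as owner and version onto every chunk. `Chunk` and `chunk_markdown` are hypothetical names for illustration, not Grounded Docs' API.

```python
import re
from dataclasses import dataclass, field

# Opening or closing code fence: three or more backticks or tildes.
FENCE = re.compile(r"^\s*(`{3,}|~{3,})")
# ATX heading such as "## Configuration".
HEADING = re.compile(r"^(#{1,6})\s+(.*)$")


@dataclass
class Chunk:
    heading_path: list[str]  # e.g. ["Authentication", "Configuration"]
    text: str
    metadata: dict = field(default_factory=dict)


def chunk_markdown(markdown: str, metadata: dict) -> list[Chunk]:
    """Split at headings, never inside a fenced block, and attach source metadata."""
    chunks: list[Chunk] = []
    path: list[str] = []
    buffer: list[str] = []
    in_fence = False

    def flush() -> None:
        body = "\n".join(buffer).strip()
        if body:
            chunks.append(Chunk(list(path), body, dict(metadata)))
        buffer.clear()

    for line in markdown.splitlines():
        if FENCE.match(line):
            in_fence = not in_fence  # toggles on open and on close
            buffer.append(line)
            continue
        match = HEADING.match(line)
        if match and not in_fence:
            flush()
            level = len(match.group(1))
            del path[level - 1:]  # drop headings at this level or deeper
            path.append(match.group(2).strip())
            continue
        buffer.append(line)  # "#" lines inside a fence stay code, not headings
    flush()
    return chunks
```

Keeping the heading path on each chunk pays off later: a search result can say where in the page it came from instead of returning an anonymous paragraph.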

Grounded Docs already points in this direction. It can index local files, GitHub repositories, package registries, websites, archives, Office documents, PDFs, source code, and structured data. It has a CLI for scripted indexing and search, a web UI for management, and an MCP server for agents. Its deployment model also matters: a single process works for local use, while a coordinator and workers fit larger indexing workloads.

The enterprise work is to wrap that shape with ownership, governance, and quality measurement.

How Proprietary Information Reaches the Agent

There are two bad defaults.

The first is to let the agent browse everything the user can browse. That sounds simple until you remember that enterprise permissions are messy, documents are overshared, and “available” does not mean “approved for code generation.”

The second is to hand-curate a tiny prompt file with all the blessed rules. That works for the first week. Then a library changes, a security rule moves, a platform team updates the template, and the prompt file becomes a fossil with YAML.

A better model is a publishing pipeline.

Each knowledge source should have:

An owner - A team or group responsible for correctness.

A source of truth - The repo, docs site, catalog, or document collection where updates happen.

A publication path - How content is transformed, indexed, versioned, and exposed to agents.

A sensitivity label - Public, internal, confidential, regulated, customer data, security-sensitive, or whatever your company already uses.

A freshness rule - How often it is refreshed and when stale content should stop being used.

A deprecation rule - How old patterns are marked so an agent does not recommend them with a straight face.
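Those six properties fit naturally into a registry record that the publishing pipeline can check before anything reaches an index. A minimal sketch, assuming each source is registered with a last refresh date and a maximum age, and simplifying the deprecation rule to a flag; `KnowledgeSource` and its fields are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class KnowledgeSource:
    name: str
    owner: str              # team responsible for correctness ("" if nobody)
    source_of_truth: str    # repo, docs site, or catalog where edits happen
    publication_path: str   # pipeline that transforms and indexes it
    sensitivity: str        # label from the company's existing scheme
    last_refreshed: date
    max_age_days: int       # freshness rule
    deprecated: bool = False  # simplified outcome of the deprecation rule

    def problems(self, today: date) -> list:
        """Reasons this source should not be served to agents; empty means publishable."""
        issues = []
        if not self.owner:
            issues.append("no owner")
        if today - self.last_refreshed > timedelta(days=self.max_age_days):
            issues.append("stale")
        if self.deprecated:
            issues.append("deprecated")
        return issues
```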

For internal frameworks, the source of truth should usually be near the code. The same repository that publishes company-quarkus-starter should publish the agent-facing guide, supported versions, migration recipes, and small compilable examples. The index should know that version 4.2 of the framework pairs with specific libraries, platform BOMs, config names, and deployment constraints.

For blueprints, treat them as executable examples. A “golden path” service that does not build is not a golden path. It is corporate fan fiction. Keep a small repository per blueprint or a monorepo with scenario folders, then index the README, code, generated OpenAPI, test commands, and deployment manifests.

For best practices, prefer short, decision-oriented pages over giant policy documents. Agents need the rule, the reason, and the example. If the answer is “use mTLS between services,” the retrievable unit should include the approved library, config snippet, failure mode, and test command.

For architecture decisions, index the ADRs but connect them to current guidance. An ADR from 2021 may explain why something happened. It should not silently override a 2026 platform standard because the vector search liked its wording.

Access Control Is a Product Feature

The enterprise version of this system lives or dies on access control.

Grounded Docs supports optional OAuth2 authentication for MCP endpoints and can validate JWTs from providers such as Keycloak, Auth0, Clerk, or Azure AD. That is a good start, but its current model is binary: authenticated users get access to all MCP tools. Its own authentication docs also call out an important deployment boundary: internal API endpoints used for worker communication need network-level protection and should not be exposed directly.

That is not a criticism. It is the line where product fit meets enterprise fit.

In a company setting, there are at least three authorization questions:

Who may call the MCP server?

Which tools may they use?

Which indexed documents may appear in results?

The last one is the hard part.

If the retrieval layer can return content the user should not see, the damage is already done before the model writes an answer. Redaction after retrieval is not a serious control. The index needs document-level ACL metadata, group mapping, tenant boundaries where needed, and ideally policy checks in the retrieval query itself.
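A minimal in-memory sketch of the three checks, assuming the caller's groups and scopes were already extracted from a validated JWT upstream (that validation answers the first question). The point is the order: the tool scope is checked first, and the ACL filter runs before any scoring, so unauthorized text is never ranked or returned. In a real index the ACL would be a filter clause inside the search query itself; `Doc`, `Caller`, the scope strings, and the naive term-count scoring are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    text: str
    allowed_groups: frozenset  # document-level ACL attached at ingestion


@dataclass(frozen=True)
class Caller:
    subject: str
    groups: frozenset  # mapped from claims in an already-validated token
    scopes: frozenset


# Hypothetical mapping of MCP tool names to the scope each one requires.
TOOL_SCOPES = {"search_docs": "docs:read", "fetch_doc": "docs:read"}


def search(caller: Caller, tool: str, query: str, index: list, limit: int = 5) -> list:
    # Which tools may they use?
    required = TOOL_SCOPES.get(tool)
    if required is None or required not in caller.scopes:
        raise PermissionError(f"{caller.subject} may not call {tool}")
    # Which documents may appear? Filter BEFORE scoring, not after.
    visible = [d for d in index if d.allowed_groups & caller.groups]
    terms = query.lower().split()
    scored = [(sum(d.text.lower().count(t) for t in terms), d) for d in visible]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [d for score, d in ranked if score > 0][:limit]
```

A payments engineer searching for "settlement" gets the payments policy; merger notes that mention the word more often never enter scoring, because the filter removed them first.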

This is also where scoped servers can help. Nia’s scoped MCP model is a useful pattern: create a focused MCP server for one source or one workflow so the agent sees fewer tools and less irrelevant knowledge. The same idea works internally: one scoped server for the payments platform, one for Java service blueprints, one for data engineering standards, one for incident response. Smaller context surfaces are easier to secure and easier for agents to use.

Quality Is Not “The Answer Looked Good”

The old documentation metric was page views. That was already weak for humans. For agents, it is close to useless.

An agent can retrieve a page, ignore the relevant paragraph, combine it with an old memory, and still produce something that looks tidy in a pull request. We need measurements closer to the work.

I would split quality into four layers.

Source quality - Is the underlying information correct?

Check ownership, review date, supported version, deprecation status, build status for examples, and whether code snippets are tested. If a page has no owner and no freshness signal, the agent should treat it as weak evidence.

Index quality - Did the system ingest the source correctly?

Check whether pages are missing, chunks are too large, headings were preserved, code fences survived, metadata is attached, and duplicate pages are merged or ranked correctly. Bad chunking can make perfect documentation invisible.

Retrieval quality - Does the right information come back for realistic queries?

Build an evaluation set from actual developer tasks:

“Create a new Java service with the approved identity integration.”

“Add an outbound Kafka producer using the company schema rules.”

“Migrate a service from platform framework 3.x to 4.x.”

“Find the approved Terraform module for a private S3 bucket.”

“Explain how to handle PII in application logs.”

“Generate the readiness checklist for a production review.”

For each task, define the expected documents and snippets. Then measure recall, precision, rank position, version correctness, and whether restricted documents stay out of results.
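Once the expected documents are written down, those measurements are cheap to compute per task. A minimal sketch, assuming results come back as ranked document IDs and each document is tagged with the platform version it describes; `RetrievalCase` and `score_case` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class RetrievalCase:
    query: str
    expected: set        # doc IDs a correct answer needs
    restricted: set      # doc IDs this persona must never see
    target_version: str  # platform version the task is about


def score_case(case: RetrievalCase, results: list, versions: dict, k: int = 5) -> dict:
    """results: ranked doc IDs for case.query; versions: doc ID -> version it documents."""
    top = results[:k]
    relevant = [d for d in top if d in case.expected]
    first = next((rank for rank, d in enumerate(results, start=1) if d in case.expected), None)
    return {
        "recall_at_k": len(set(relevant)) / len(case.expected),
        "precision_at_k": len(relevant) / len(top) if top else 0.0,
        "reciprocal_rank": 1.0 / first if first else 0.0,  # rank position of first hit
        "wrong_version": [d for d in top if d in versions and versions[d] != case.target_version],
        "leaked": [d for d in results if d in case.restricted],  # must always be empty
    }
```

Averaged over the whole task set, the reciprocal rank becomes mean reciprocal rank, which is a convenient single number for "did the right page show up near the top".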

Answer quality - Did the agent use the retrieved information correctly?

This needs task-level evaluation. The generated change should compile, use the approved library, avoid deprecated config, cite the right source, ask for missing information when needed, and pass the same checks a human contribution would pass.

For code-heavy tasks, automated checks are your friend:

Compile the generated project.

Run unit and integration tests.

Run policy-as-code checks against Kubernetes, Terraform, or pipeline config.

Scan for banned dependencies and deprecated APIs.

Compare generated files with blueprint expectations.

Verify that required observability, auth, and error-handling pieces exist.
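Two of those checks, banned dependencies and deprecated configuration, are simple enough to sketch. This assumes a Maven `pom.xml` and a `.properties` file; the deprecated property name is an invented placeholder for whatever the platform team publishes. A regex over the POM text is fine for a sketch, but a real check should read the resolved dependency tree from the build tool.

```python
import re

# Hypothetical policy lists. log4j:log4j (Log4j 1.x, end of life) is a typical ban;
# the property rename is an invented example of a deprecated config key.
BANNED_DEPENDENCIES = {"log4j:log4j"}
DEPRECATED_PROPERTIES = {"company.auth.legacy-realm": "company.auth.issuer-uri"}

# A <groupId> element directly followed by an <artifactId> element.
DEPENDENCY = re.compile(
    r"<groupId>\s*([^<\s]+)\s*</groupId>\s*<artifactId>\s*([^<\s]+)\s*</artifactId>"
)


def scan_generated_change(pom_xml: str, properties: str) -> list:
    findings = []
    for group, artifact in DEPENDENCY.findall(pom_xml):
        coordinate = f"{group}:{artifact}"
        if coordinate in BANNED_DEPENDENCIES:
            findings.append(f"banned dependency {coordinate}")
    for line in properties.splitlines():
        key = line.split("=", 1)[0].strip()
        if key in DEPRECATED_PROPERTIES:
            findings.append(f"deprecated property {key}; use {DEPRECATED_PROPERTIES[key]}")
    return findings
```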

A small evaluation can be very concrete:

Task - “Create a new Java service with the approved identity integration.”

Expected sources - The current service blueprint, the internal framework authentication guide, the supported dependency matrix, and the production readiness checklist.

Retrieval pass - Those sources appear in the first few results, the deprecated framework 3.x migration guide does not outrank the current guide, and no restricted documents appear for a normal application developer.

Generated-output pass - The service builds, tests pass, the approved auth dependency is present, the config uses the current property names, and the readiness checklist has no missing mandatory items.

Failure feedback - If retrieval missed the auth guide, fix ranking or metadata. If retrieval succeeded but the code used an old property, fix the source page or add a focused example. If the blueprint itself fails, stop blaming the model and fix the blueprint.
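The failure-feedback rule maps cleanly onto a small triage function, which makes the routing explicit in an evaluation report. A minimal sketch; the three boolean inputs are assumed to come from the blueprint build, the retrieval pass, and the generated-output checks, and the names are hypothetical.

```python
from enum import Enum


class Fix(Enum):
    BLUEPRINT = "fix the blueprint"
    RANKING_OR_METADATA = "fix ranking or metadata"
    SOURCE_OR_EXAMPLE = "fix the source page or add a focused example"
    NONE = "passed"


def triage(blueprint_builds: bool, expected_sources_retrieved: bool, output_checks_pass: bool) -> Fix:
    # A broken reference makes every downstream result meaningless, so check it first.
    if not blueprint_builds:
        return Fix.BLUEPRINT
    # The agent never saw the right guidance: a retrieval problem, not a model problem.
    if not expected_sources_retrieved:
        return Fix.RANKING_OR_METADATA
    # Retrieval worked but the output still drifted: the source itself needs work.
    if not output_checks_pass:
        return Fix.SOURCE_OR_EXAMPLE
    return Fix.NONE
```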

This is where the feedback loop gets real. If agents keep failing the same task, the fix may not be a better model. It may be a missing example, bad source metadata, stale docs, or a retrieval ranking problem.

The Metadata Matters More Than People Think

Enterprises love repositories. Agents love precise metadata.

Every indexed unit should carry enough information for the agent to judge whether it is safe to use:

Source URL or repository path

Owning team

Last reviewed date

Product or platform area

Supported versions

Deprecated versions

Sensitivity label

Intended audience

Environment scope, such as dev, test, production, regulated, or cloud region

Relationship to other sources, such as “replaces”, “supersedes”, “example for”, or “policy behind”

Without this, the agent sees a flat soup of text. It may retrieve a migration guide as if it were the current setup guide. It may prefer an old incident workaround over the standard solution because the workaround contains more specific error messages. Specific wrong text beats vague correct text more often than we like.

Metadata gives the retrieval layer something to rank with and gives the model something to reason about.
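A minimal reranking sketch, assuming each indexed unit carries the metadata listed above. It demotes units that are deprecated or unsupported for the requested version, superseded by another retrieved unit, ownerless, or stale. The multipliers are arbitrary illustration values, not tuned weights, and `IndexedUnit` and `rerank` are hypothetical names.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class IndexedUnit:
    doc_id: str
    relevance: float                 # raw lexical or embedding score
    owner: Optional[str]
    last_reviewed: date
    supported_versions: set
    deprecated_versions: set = field(default_factory=set)
    supersedes: set = field(default_factory=set)  # doc IDs this unit replaces


def rerank(units: list, target_version: str, today: date, stale_after_days: int = 365) -> list:
    superseded = {old for u in units for old in u.supersedes}

    def adjusted(u: IndexedUnit) -> float:
        score = u.relevance
        if target_version in u.deprecated_versions:
            score *= 0.1   # explicitly deprecated for this version
        elif target_version not in u.supported_versions:
            score *= 0.5   # not known to apply to this version
        if u.doc_id in superseded:
            score *= 0.2   # another retrieved unit replaces this one
        if u.owner is None:
            score *= 0.7   # nobody vouches for it
        if (today - u.last_reviewed).days > stale_after_days:
            score *= 0.7   # review date too old
        return score

    return sorted(units, key=adjusted, reverse=True)
```

With these weights, a 2021 ADR with a high raw score that is superseded, stale, and unsupported for the target version falls well below the current standard that supersedes it.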

Internal Frameworks Are the Killer Use Case

Public documentation tools are easiest to understand with React or Next.js because everyone knows the pain of version drift. But the enterprise payoff is bigger with internal frameworks.

Internal frameworks often have a small expert team and a large consumer base. The framework team answers the same questions in Slack, code reviews, office hours, and migration tickets:

Which starter should I use?

Which version is supported?

How do I configure auth?

How do I expose metrics?

How do I write a contract test?

Why does the build plugin fail in CI?

Is the old annotation still allowed?

What changed in the new platform release?

An agent with a well-maintained internal framework index can answer many of these questions at the point of work. Better, it can apply the answer while editing code.

This does not remove the platform team. It changes their job. Instead of repeating answers, they curate source material, own examples, watch evaluation results, and fix the docs when agents fail. That is a better use of senior engineers than Slack archaeology.

Blueprints Should Be Executable Context

Blueprints are where the agent story becomes practical.

A human can read a long “service standard” page and map it to a new project. An agent needs something more concrete. Give it an executable blueprint:

A minimal service that builds.

Tests that prove the important behavior.

Deployment manifests with the approved defaults.

Security config that matches the real identity setup.

Observability config that emits the required telemetry.

A README that explains where to change names, packages, topics, and secrets.

A changelog that maps platform versions to blueprint versions.

Then index all of it.

When the user asks an agent to create a new service, the agent should retrieve the blueprint, identify the supported version, copy the shape, and adapt only the business-specific parts. This is much safer than asking a general model to invent “a typical enterprise service” from vibes and Stack Overflow residue.
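Identifying the supported version can be a lookup against the blueprint changelog rather than a guess. A minimal sketch, assuming the changelog is published as a mapping from blueprint release to supported platform versions; the blueprint names and version numbers are hypothetical.

```python
# Hypothetical changelog: blueprint release -> platform versions it supports.
BLUEPRINT_CHANGELOG = {
    "rest-service/2.3.0": {"3.8", "3.9"},
    "rest-service/3.0.0": {"4.0", "4.1"},
    "rest-service/3.1.0": {"4.1", "4.2"},
    "event-service/1.4.0": {"4.1", "4.2"},
}


def _release(name: str) -> tuple:
    # "rest-service/3.1.0" -> (3, 1, 0), so 3.10.0 sorts after 3.9.0
    return tuple(int(part) for part in name.split("/", 1)[1].split("."))


def resolve_blueprint(kind: str, platform_version: str, changelog: dict = BLUEPRINT_CHANGELOG) -> str:
    candidates = [
        name for name, platforms in changelog.items()
        if name.startswith(kind + "/") and platform_version in platforms
    ]
    if not candidates:
        # Better to stop and ask than to adapt the nearest blueprint.
        raise LookupError(f"no {kind} blueprint supports platform {platform_version}")
    return max(candidates, key=_release)
```

Raising an error when nothing matches is deliberate: an agent that cannot find a supported blueprint should ask the user, not improvise one.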

Best Practices Need Tests or They Become Decoration

“Best practice” is one of those phrases that sounds responsible while hiding a lot of hand-waving.

For agents, a best practice should usually have one of three forms:

A rule the agent can apply directly.

An example the agent can copy safely.

A check that verifies the result.

For example:

“Use structured JSON logs” is weak.

“Use the `company-logging-json` dependency, include `trace_id`, `span_id`, `customer_hash`, and never log `email`, `ssn`, or raw token fields” is useful.

“Run `./mvnw test -Plog-policy` and the OpenRewrite recipe `company.logging.NoRawPiiLogs`” is better.

The retrieval system should prefer the version with commands, examples, and checks. Agents do much better when the company standard is executable.
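The third form, a check, can also run directly against log output captured from tests. A minimal sketch, assuming one JSON object per log line; the required and banned field names come from the example rule above, with `token` and `access_token` as assumed names for raw token fields.

```python
import json

# Field names follow the illustrative logging rule; the token field names are assumptions.
REQUIRED_FIELDS = {"trace_id", "span_id", "customer_hash"}
BANNED_FIELDS = {"email", "ssn", "token", "access_token"}


def check_log_line(line: str) -> list:
    """Return policy violations for one log line; an empty list means compliant."""
    try:
        record = json.loads(line)
    except json.JSONDecodeError:
        return ["not structured JSON"]
    if not isinstance(record, dict):
        return ["not a JSON object"]
    keys = set(record)
    problems = [f"missing {f}" for f in sorted(REQUIRED_FIELDS - keys)]
    problems += [f"banned field {f}" for f in sorted(BANNED_FIELDS & keys)]
    return problems
```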

A Practical Adoption Path

I would not start by indexing the whole company. That creates a large search box with unclear trust. We already have enough of those.

This needs an owner. In most companies, that should be platform engineering or developer experience, with security and architecture close enough to say “no” before the first incident review.

Start with one high-value workflow:

Pick a repeated engineering task, such as creating a new service, migrating a framework version, or adding an approved integration.

Identify the authoritative sources and delete or demote obvious duplicates.

Turn the best examples into executable blueprints.

Add metadata: owner, version, freshness, sensitivity, and deprecation status.

Index the sources with a local or hosted context system.

Expose a narrow MCP server or narrow tool set to one agent environment.

Build an evaluation set from real tasks.

Measure retrieval and answer quality before expanding.

Feed failures back into docs, metadata, ranking, and examples.

After that, add more domains. Internal frameworks first, then blueprints, then operational runbooks, then broader architecture guidance. The order matters. Agents need sharp, actionable sources before they need a museum.

What I Would Watch in Production

Once agents use proprietary context, observability becomes part of governance. I would want to see:

Which sources are retrieved most often.

Which queries return no useful result.

Which deprecated documents still appear in top results.

Which tasks fail evaluation after retrieval succeeds.

Which documents have no owner or stale review dates.

Which access denials happen and whether they are expected.

Which sources cause agents to cite conflicting guidance.

Which blueprint versions are used in generated changes.

This is not surveillance for its own sake. It is how you find the rot. If ten agents fail the same migration, the platform team gets a signal. If an old runbook keeps outranking the current standard, the docs team gets a signal. If a restricted source shows up where it should not, security gets a signal before it becomes an incident.
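Most of these signals fall out of plain retrieval logs. A minimal sketch, assuming the MCP layer records one event per search with the ranked results and any documents removed by the ACL filter; `RetrievalEvent` and `production_signals` are hypothetical names, and the deprecated and ownerless sets are assumed to come from the metadata registry.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class RetrievalEvent:
    query: str
    returned: list                              # ranked doc IDs sent to the agent
    denied: list = field(default_factory=list)  # doc IDs removed by the ACL filter


def production_signals(events: list, deprecated: set, ownerless: set, top_k: int = 3) -> dict:
    retrieved = Counter(d for e in events for d in e.returned)
    return {
        "most_retrieved": retrieved.most_common(10),
        "no_result_queries": [e.query for e in events if not e.returned],
        "deprecated_in_top": Counter(
            d for e in events for d in e.returned[:top_k] if d in deprecated
        ),
        "access_denials": Counter(d for e in events for d in e.denied),
        "ownerless_retrieved": sorted(d for d in retrieved if d in ownerless),
    }
```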

The Real Enterprise Angle

The enterprise problem is not that agents lack information. Enterprises are drowning in information. The problem is that agents need approved, current, scoped, measurable information at the moment they act.

Documentation MCP servers are one practical way to build that layer. Grounded Docs shows the useful primitives: local indexing, multiple source types, version-aware search, CLI automation, MCP access, optional embeddings, OAuth for protected HTTP endpoints, and deployment modes that can grow beyond a laptop. Tools like Context7, Ref, and Nia show adjacent product directions: public library freshness, private documentation search, broader multi-source context, and scoped MCPs.

The winning pattern for enterprises will probably be less glamorous than the demo videos. It will look like a knowledge publishing pipeline with owners, metadata, access checks, tested examples, retrieval evaluations, and boring operational dashboards.

That is good.

Boring is what lets agents touch real systems.