Why Java Modernization Estimates Need More Than Lines of Code

Use a spiderweb to see the parts a migration can hide: tests, docs, integrations, runtime behavior, and the loops that make AI work expensive.

Estimating an AI-assisted modernization project is weirdly easy to fake. Count the modules. Count the lines. Ask the model for a number. Add a confidence interval that looks serious. Done.

The problem is that modernization effort does not follow the shape of the repository alone, and even the fanciest estimation prompt rarely gives you real results. Modernization follows the shape of the work around the repository. A clean Java 8 to Java 17 migration with strong tests can use fewer tokens than a smaller Spring Boot 2 to 3 upgrade where the tests are flaky, the security filters are custom, and the downstream batch export depends on a date format nobody wants to talk about. When I was first asked what an assessment for this could look like, I started with a 3-axis model:

Structural scope - how much code, configuration, and project surface is in scope.

Semantic complexity - how much framework behavior, domain meaning, and hidden coupling has to survive the change.

Feedback readiness - how quickly the team can validate changes through builds, tests, CI, and review.

That first sketch was useful, but it felt off and far too easy. It does move the estimate away from raw lines of code (sigh) and gives Java developers better words than “this service feels scary.” But three axes are too clean for enterprise Java.

Once you look at established modernization and estimation approaches, the missing pieces become hard to ignore: hidden assumptions, side-by-side operation, dependency mapping, data conversion, organizational readiness, test data, operations, and external interfaces. Those are not details. They are often the project. And despite all the AI, we do not want to forget about history, right?

So I decided not to push too hard on the 3-axis model and dug a little deeper into what a real-world estimation approach, or better, an assessment, looks like. With more dimensions, I naturally landed on a spiderweb.

Not because spiderwebs are more scientific or look better ;-) They don’t. But they make the right point: the shape matters more than the total score. And they give a better, visual representation of potential risk. Let’s take a look at the thinking behind my approach.

Why Three Axes Are Not Enough

The older modernization literature is refreshingly blunt about the problem. SEI’s Legacy System Modernization Strategies talks about large, complex, fragile systems, and about legacy and modernized components running side by side before the migration is finished. That alone breaks the simple three-axis view. Side-by-side operation is not just scope. It creates integration risk, sequencing risk, rollback risk, and support risk.

The strangler fig pattern points in the same direction. Incremental modernization is attractive because big-bang rewrites are risky, but running old and new behavior together means you have to understand the boundaries between them. AWS makes the same point in its strangler fig guidance: the old and new systems coexist while behavior moves over. The boundary is where a lot of the estimate lives.

SEI’s Options Analysis for Reengineering also matters here because it tries to reveal implicit stakeholder assumptions, constraints, and major drivers before deciding what can be reused or mined from a legacy system. In plain language: the code is not the whole truth. The assumptions around the code are part of the asset, and sometimes the assumptions are missing.

AWS modernization guidance uses business, functional, technical, financial, and digital-readiness lenses. It also calls out data migration and the volume of data to be converted. Microsoft’s application and data modernization readiness guidance talks about people, infrastructure, development process, data management, application estate, operations, and AI readiness. DORA’s continuous delivery capability keeps coming back to fast feedback, reliable tests, loosely coupled architecture, test data management, observability, database change management, and code maintainability.

Classic estimation methods point in the same direction. COCOMO II is not just “count size.” It combines size with product, platform, personnel, project, reuse, and process factors. Function Point Analysis counts inputs, outputs, inquiries, internal data, and external interface data. COSMIC sizing goes even more directly at data movements across boundaries.

They all say the same uncomfortable thing in different language: a modernization estimate has to include boundaries, data, feedback, people, constraints, and missing knowledge.

The Six Dimensions of AI-Assisted Modernization Estimation

When looking at the core problem, I am looking at the following dimensions:

Structural scope

Semantic complexity

Knowledge transparency

Integration and data surface

Feedback readiness

Operational and governance constraints

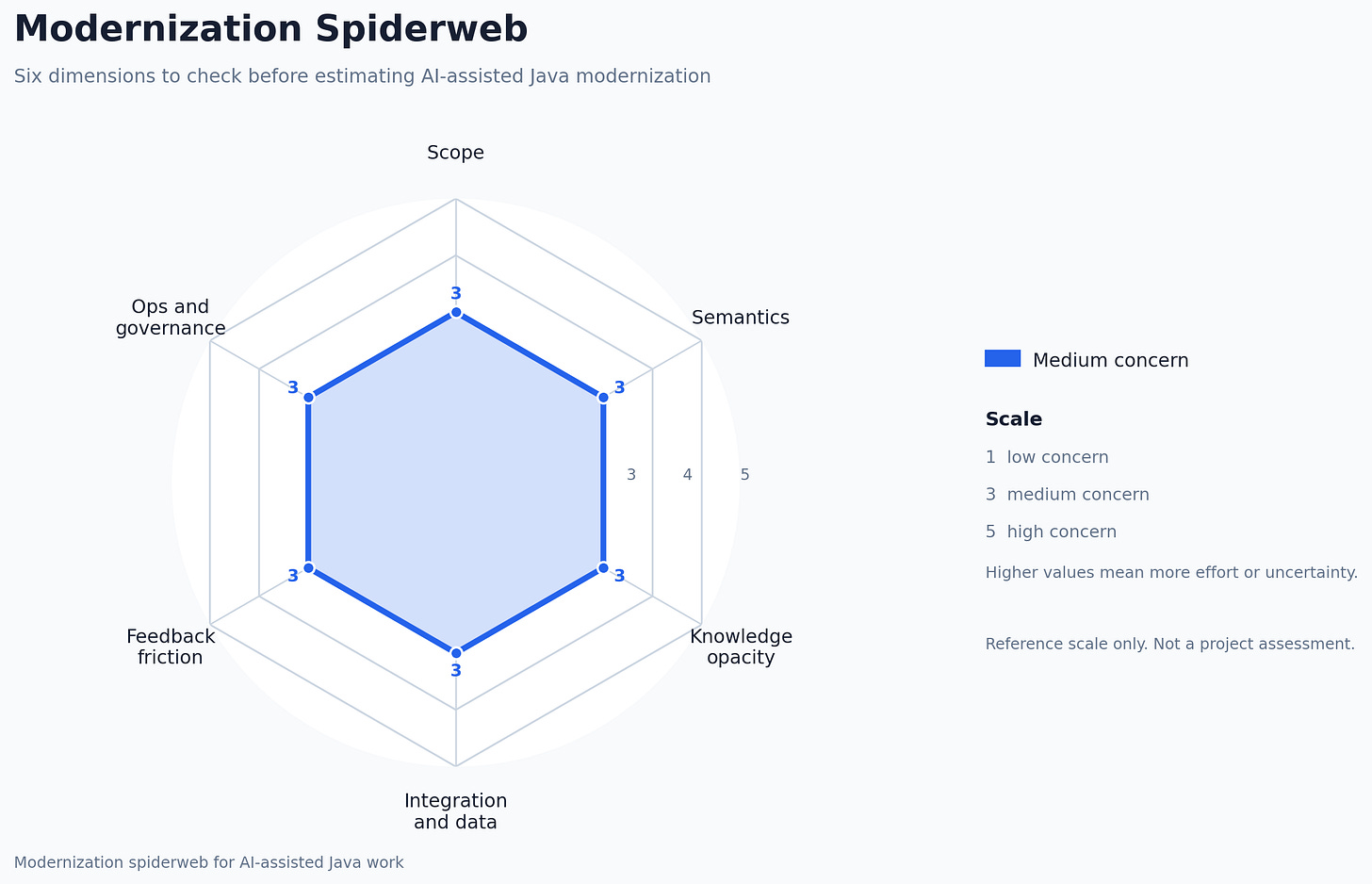

When I draw it, I flip the positive dimensions into risk language so the chart has one meaning: farther from the center means more effort or more uncertainty. So “knowledge transparency” becomes “knowledge opacity” on the chart, and “feedback readiness” becomes “feedback friction.”

These are the six spokes. This first diagram is only a reference shape, not a project assessment.

The values I use in the examples later are deliberately rough. Think of 1 as low concern, 3 as medium concern, and 5 as high concern. They are assumptions for illustration, not real assessments.
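To make the flip from positive dimensions into risk language concrete, here is a minimal Java sketch. The class name `ModernizationWeb`, the `positive` helper, and the "weak spoke" threshold are my own assumptions for illustration, not part of any established tool. It only encodes the 1-to-5 scale and the rule that farther from the center means more concern.

```java
import java.util.EnumMap;
import java.util.List;
import java.util.Map;

/** Hypothetical sketch of the six-spoke web; scores run from 1 (low concern) to 5 (high concern). */
final class ModernizationWeb {

    /** Spokes named in risk language, as they appear on the chart. */
    enum Spoke {
        STRUCTURAL_SCOPE, SEMANTIC_COMPLEXITY, KNOWLEDGE_OPACITY,
        INTEGRATION_AND_DATA_SURFACE, FEEDBACK_FRICTION, OPERATIONAL_CONSTRAINTS
    }

    private final Map<Spoke, Integer> scores = new EnumMap<>(Spoke.class);

    /** Records a score that is already expressed as risk. */
    ModernizationWeb risk(Spoke spoke, int score) {
        if (score < 1 || score > 5) {
            throw new IllegalArgumentException("score must be 1..5: " + score);
        }
        scores.put(spoke, score);
        return this;
    }

    /** Records a positive rating (transparency, readiness) and flips it, so 5 becomes 1. */
    ModernizationWeb positive(Spoke spoke, int rating) {
        return risk(spoke, 6 - rating);
    }

    /** Spokes at or above the threshold, in declaration order. */
    List<Spoke> weakSpokes(int threshold) {
        return scores.entrySet().stream()
                .filter(e -> e.getValue() >= threshold)
                .map(Map.Entry::getKey)
                .toList();
    }

    public static void main(String[] args) {
        // Illustrative values only, not a real assessment.
        ModernizationWeb web = new ModernizationWeb()
                .risk(Spoke.STRUCTURAL_SCOPE, 3)
                .risk(Spoke.SEMANTIC_COMPLEXITY, 4)
                .positive(Spoke.KNOWLEDGE_OPACITY, 2)   // transparency 2 is drawn as opacity 4
                .risk(Spoke.INTEGRATION_AND_DATA_SURFACE, 3)
                .positive(Spoke.FEEDBACK_FRICTION, 4)   // readiness 4 is drawn as friction 2
                .risk(Spoke.OPERATIONAL_CONSTRAINTS, 3);
        System.out.println("Weak spokes: " + web.weakSpokes(4));
    }
}
```

The sketch deliberately has no total score. It returns the list of weak spokes, because the shape is the point.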

Then two multipliers sit outside the web:

Pass intensity - how many compile, test, repair, review, and clarification loops the work will need.

Token accounting - how the chosen model, tool schemas, context strategy, caching, and reasoning mode change the actual bill.

Use provider-specific accounting where possible. OpenAI documents input, output, cached, and reasoning tokens in its token counting help, and its prompt caching guide explains why stable prompt prefixes matter. Anthropic provides a message token counting endpoint, and Google documents Gemini token counting in its Gemini API token guide and Vertex AI CountTokens API.
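As a rough illustration of why the token categories matter, the following Java sketch prices one slice from per-category counts. The names `TokenBill`, `Usage`, and `Rates` are hypothetical, and every price is a placeholder, not a real price list. It also assumes the four categories are disjoint; some providers report cached tokens as part of input or reasoning tokens as part of output, so check the provider's documentation before reusing the arithmetic.

```java
/** Hypothetical sketch: pricing one calibration slice from per-category token counts. */
final class TokenBill {

    /** Token counts for one slice. Assumes the four categories do not overlap. */
    record Usage(long uncachedInput, long cachedInput, long output, long reasoning) {}

    /** Prices in USD per million tokens. All values passed in are placeholders. */
    record Rates(double uncachedInput, double cachedInput, double output, double reasoning) {}

    /** Sums each category at its own rate and converts from per-million pricing. */
    static double costUsd(Usage u, Rates r) {
        double perMillion = u.uncachedInput() * r.uncachedInput()
                + u.cachedInput() * r.cachedInput()
                + u.output() * r.output()
                + u.reasoning() * r.reasoning();
        return perMillion / 1_000_000.0;
    }

    public static void main(String[] args) {
        // Made-up numbers: a slice where most of the input hits a stable, cached prompt prefix.
        Usage slice = new Usage(400_000, 1_600_000, 120_000, 80_000);
        Rates assumed = new Rates(2.00, 0.50, 8.00, 8.00);
        System.out.printf("slice cost: %.2f USD%n", costUsd(slice, assumed));
    }
}
```

With these made-up numbers the cached prefix carries four times the tokens of the uncached input at the same cost, which is the reason stable prompt prefixes show up in the bill.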

This is not a scoring machine. It is a way to stop the first estimate from forgetting the obvious.

The Six Dimensions

Structural scope - What is physically in scope?

This touches on the idea of project scale, but I prefer “scope” because scale tempts us back into line counting.

Look at modules, repositories, source roots, generated code, parent POMs, Gradle convention plugins, deployment descriptors, Helm charts, Terraform, OpenAPI files, WSDLs, protobuf schemas, test fixtures, and shared libraries.

Generated code deserves special treatment. If it can be regenerated, regenerate it. If it was generated once and then hand-edited for seven years, congratulations, it is now legacy code with a fake mustache.

Semantic complexity - What behavior has to survive?

This is where the Java part gets real. Persistence behavior, transaction boundaries, lazy loading, security filters, interceptors, schedulers, thread pools, serialization, reflection, classloading, app-server APIs, homegrown annotations, and framework lifecycle hooks all live here.

The model can change imports. It cannot guess which odd-looking transaction boundary exists because a downstream reconciliation job depends on side effects after commit.

Knowledge transparency - Can the system explain itself?

Custom frameworks, internal blueprints, undocumented conventions, code generators, company-specific annotations, architectural decision records, runbooks, example payloads, old wiki pages, ownership files, and “ask the platform team before touching this” comments all affect whether an AI-assisted assessment is trustworthy.

This is also where enterprise document search matters. Searchable internal knowledge is not just developer comfort. It changes modernization cost. If the model or the developer can find the blueprint that explains why every service extends AbstractTenantAwareWorkflowBean, the project is different from one where the only explanation is oral tradition and Slack archaeology.

Integration and data surface - What boundaries must keep working?

Modernization often fails at the edges: REST APIs, SOAP services, Kafka topics, JMS queues, SFTP drops, fixed-width files, CSV exports, database views, partner feeds, report extracts, authentication flows, event payloads, enum mappings, date formats, character encodings, and “temporary” adapters.

The estimate changes when a Java object maps to a JSON payload. It changes more when it maps to XML, then to a COBOL copybook, then to a nightly fixed-width file where empty means zero unless the partner is in France. I wish that sentence felt exaggerated.

This is where Function Point Analysis and COSMIC sizing are useful reminders. Boundaries and data movement are not side effects of the code. They are part of the work.

Feedback readiness - How quickly do we know if we broke something?

Can a clean checkout build? Can one module build alone? Are tests deterministic? Is there test data? Are contract tests available? Can integration tests run without production credentials? Does CI fail for real reasons? Is there enough observability to debug changed behavior?

DORA’s continuous delivery work is relevant because modernization is mostly controlled change. Fast feedback, reliable tests, database change management, and deployable software are not process decoration. They reduce repair loops.

Operational and governance constraints - What rules shape the work?

Security review, compliance, audit trails, data residency, production access, release windows, change advisory boards, support teams, on-call ownership, SLOs, rollback rules, dependency approval, and vendor contracts can all change the estimate.

Some of these constraints are annoying. Some are necessary. All of them are real. If an agent can propose a dependency upgrade in ten seconds but the organization needs two weeks to approve it, the estimate should know that.

AI Assessment Suitability

A codebase is suitable for AI-assisted assessment when intent is visible, boundaries are documented, feedback is fast, and changes can be sliced small enough to verify.

Poor AI suitability usually comes from the following:

no documentation for internal frameworks,

no examples of real payloads,

no contract tests,

no reliable local build,

no clear owner for shared libraries,

no test data,

no logs that explain production behavior,

and no way to run a safe slice.

That does not mean AI is useless. It means the first AI task is discovery, not modernization.
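The checklist above can be turned into a small gate. This is a sketch under my own assumptions: the enum constants mirror the eight gaps listed above, while the `AiSuitability` name and the `maxGaps` threshold are hypothetical and would need to be agreed on per project.

```java
import java.util.EnumSet;
import java.util.Set;

/** Hypothetical sketch: decide whether the first AI task is discovery or modernization. */
final class AiSuitability {

    /** One constant per item in the checklist; present means the team has it. */
    enum Evidence {
        INTERNAL_FRAMEWORK_DOCS, REAL_PAYLOAD_EXAMPLES, CONTRACT_TESTS, RELIABLE_LOCAL_BUILD,
        SHARED_LIBRARY_OWNER, TEST_DATA, PRODUCTION_LOGS, SAFE_SLICE
    }

    /** Everything on the checklist that is not in the given set. */
    static Set<Evidence> missing(Set<Evidence> present) {
        Set<Evidence> gaps = EnumSet.allOf(Evidence.class);
        gaps.removeAll(present);
        return gaps;
    }

    /** Returns "discovery" when more than maxGaps items are missing (threshold is an assumption). */
    static String firstTask(Set<Evidence> present, int maxGaps) {
        return missing(present).size() > maxGaps ? "discovery" : "modernization";
    }

    public static void main(String[] args) {
        Set<Evidence> present = EnumSet.of(Evidence.RELIABLE_LOCAL_BUILD, Evidence.TEST_DATA);
        System.out.println("Missing: " + missing(present));
        System.out.println("First AI task: " + firstTask(present, 2));
    }
}
```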

The Method

Even the most straightforward estimate still needs measurement.

I think about it like this:

Modernization effort ~= structural scope

x semantic risk

x knowledge opacity

x integration and data risk

x feedback-loop cost

x operational constraints

x pass intensity

x token accounting overhead

Please do not paste this into a spreadsheet and pretend the multiplication is real. It is a reminder of what tends to multiply the work.

The practical method is:

Draw the spiderweb for the project.

Name the weak spokes.

Collect the smallest evidence pack that can confirm or challenge the shape.

Pick representative slices.

Run a calibration pass.

Measure tokens, tool calls, build/test loops, review defects, clarification points, undocumented assumptions, and integration contracts touched.

Produce P50 and P80 ranges by phase.

The calibration pass is where opinion starts turning into evidence.

What to Measure First

Before putting numbers on the project, I collect a small evidence pack. Nothing fancy. Just enough to stop guessing.

Repository and build shape

Number of modules and repositories.

Build system and parent topology.

Source roots, generated source roots, and vendored code.

Test source roots and test categories.

Shared libraries and ownership boundaries.
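The repository and build shape items are the easiest ones to collect mechanically. Here is a minimal Java sketch that walks a checkout and counts Maven build files and conventional source roots. The `RepoShape` name is hypothetical, and the sketch assumes a standard Maven layout (`pom.xml`, `src/main/java`, `src/test/java`). It does not detect generated or vendored code, which still needs a human look.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.List;
import java.util.stream.Stream;

/** Hypothetical sketch: count build files and conventional source roots in a checkout. */
final class RepoShape {

    record Shape(long buildFiles, long mainSourceRoots, long testSourceRoots) {}

    static Shape scan(Path repoRoot) throws IOException {
        try (Stream<Path> walk = Files.walk(repoRoot)) {
            // Normalize to forward slashes so the suffix checks work on every OS.
            List<String> paths = walk
                    .map(p -> repoRoot.relativize(p).toString().replace('\\', '/'))
                    .toList();
            return new Shape(
                    countEndingWith(paths, "pom.xml"),
                    countEndingWith(paths, "src/main/java"),
                    countEndingWith(paths, "src/test/java"));
        }
    }

    /** Counts paths that are exactly the suffix or end with it as a full path segment. */
    private static long countEndingWith(List<String> paths, String suffix) {
        return paths.stream()
                .filter(p -> p.equals(suffix) || p.endsWith("/" + suffix))
                .count();
    }

    public static void main(String[] args) throws IOException {
        Path root = Path.of(args.length > 0 ? args[0] : ".");
        System.out.println(scan(root));
    }
}
```

A large gap between the number of build files and the number of test source roots is already a first hint about feedback readiness.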

Modernization target

Java version jump.

Framework jump.

Runtime change.

Namespace change.

Build tool change.

Deployment model change.

Knowledge sources

Architecture diagrams.

ADRs.

Internal framework documentation.

Blueprint repositories.

Code generator documentation.

Runbooks.

Example payloads.

Known owners.

Integration and data boundaries

REST, SOAP, messaging, batch, SFTP, database, and reporting interfaces.

Schemas and example payloads.

Downstream consumers and upstream producers.

Type mappings and format conversions.

Data migration or data conversion scope.

Contract tests or golden files.

Feedback quality

Clean checkout build.

Module-level build.

Unit, integration, contract, and end-to-end tests.

Test data availability.

CI reliability.

Observability for changed behavior.

Operational and governance constraints

Security approval.

Compliance and audit requirements.

Release windows.

Change approval.

Production access.

Rollback rules.

Support ownership.

Agent and token accounting

Which model and provider.

Tool schemas included in the prompt.

Input, output, cached, and reasoning token categories.

Prompt caching expectations.

File retrieval strategy.

Log truncation and context compaction strategy.

This list looks long because enterprise modernization is long before anyone writes code. And yes, just estimating modernization effort is already a project. Of course, you can exit right here and fantasize that an agent swarm will figure this out for you in the future and that the models will be 100% better in the next six months. I leave that speculation to others and assume that the people responsible for the transactional systems that matter in my life (banks, insurers, and so on) are risk-aware and do not buy into every hype from the very beginning. (Another sigh.)

The Calibration Run

The best move is to run a representative slice before estimating the full project.

Pick three to five slices from a list like this:

one easy mechanical module,

one module with a real framework upgrade,

one module with persistence or security behavior,

one integration-heavy module,

one module with poor documentation,

and one cross-module change if the migration has shared libraries.

For each slice, record:

input tokens,

output tokens,

cached tokens if the provider reports them,

reasoning tokens if the provider reports them,

number of tool calls,

number of compile/test cycles,

number of human clarification points,

number of undocumented assumptions discovered,

number of integration contracts touched,

and final review defects.
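One way to keep these measurements comparable across slices is a single record per slice. The following Java sketch is only a suggestion: the `CalibrationLog` and `Slice` names and the two derived figures are my own assumptions, and the token total assumes the provider reports the four categories without overlap.

```java
/** Hypothetical sketch: one record per calibration slice, mirroring the list above. */
final class CalibrationLog {

    record Slice(String name,
                 long inputTokens, long outputTokens, long cachedTokens, long reasoningTokens,
                 int toolCalls, int compileTestCycles, int clarifications,
                 int undocumentedAssumptions, int contractsTouched, int reviewDefects) {

        /** Sum of all token categories; assumes the provider reports them as disjoint counts. */
        long totalTokens() {
            return inputTokens + outputTokens + cachedTokens + reasoningTokens;
        }

        /** Average tokens spent per compile/test cycle; falls back to the total when no cycle ran. */
        double tokensPerCycle() {
            return compileTestCycles == 0 ? totalTokens() : (double) totalTokens() / compileTestCycles;
        }
    }

    public static void main(String[] args) {
        // Made-up values for a single slice, for illustration only.
        Slice slice = new Slice("billing-export", 100_000, 20_000, 0, 10_000, 35, 4, 2, 3, 1, 1);
        System.out.println(slice.name() + " total tokens: " + slice.totalTokens());
        System.out.println(slice.name() + " tokens per cycle: " + slice.tokensPerCycle());
    }
}
```

Tokens per cycle is worth watching because it separates "the slice was big" from "the slice needed many repair loops."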

Then estimate the larger project with P50 and P80 ranges:

P50 - What happens if the remaining modules behave like the sample and the workflow stays clean.

P80 - What happens if the hard modules are harder, CI feedback is slower, documentation is thinner, and more repair loops are needed.
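A very simple way to get first P50 and P80 numbers is to read them off the measured slices as percentiles and scale by the number of remaining slices. The Java sketch below uses the nearest-rank method. That is a simplification of the scenario descriptions above, and `RangeEstimate` and its methods are hypothetical names. With three to five samples the result is a conversation starter, not a statistic.

```java
import java.util.Arrays;

/** Hypothetical sketch: nearest-rank percentiles over per-slice token totals. */
final class RangeEstimate {

    /** Smallest sample such that at least pct percent of the samples are at or below it. */
    static long percentile(long[] samples, int pct) {
        if (samples.length == 0) {
            throw new IllegalArgumentException("need at least one sample");
        }
        if (pct < 1 || pct > 100) {
            throw new IllegalArgumentException("pct must be 1..100: " + pct);
        }
        long[] sorted = samples.clone();
        Arrays.sort(sorted);
        int rank = (pct * sorted.length + 99) / 100; // integer ceiling of pct/100 * n
        return sorted[rank - 1];
    }

    /** Naive projection: assumes every remaining slice costs what the chosen percentile cost. */
    static long projected(long[] perSliceTokens, int pct, int remainingSlices) {
        return percentile(perSliceTokens, pct) * remainingSlices;
    }

    public static void main(String[] args) {
        // Made-up token totals from five calibration slices.
        long[] measured = {120_000, 90_000, 400_000, 150_000, 250_000};
        int remaining = 15;
        System.out.println("P50 tokens: " + projected(measured, 50, remaining));
        System.out.println("P80 tokens: " + projected(measured, 80, remaining));
    }
}
```

If the P80 scenario includes slower CI or thinner documentation than the sample showed, that adjustment has to be argued on top of these numbers. The percentile alone does not know about it.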

This gives you an estimate people can argue with. That is much better than a number everyone politely nods at and then ignores.

The Prompt I Would Use

Before we look at concrete examples, here is how I gather these first, raw numbers. I use the model I intend to work with and give it the prompt below. It forces the assessment to name its evidence, its assumptions, and its weak spokes.

It is not sacred text. It is more like a checklist for the things teams skip when the meeting is already running over.

You are estimating an AI-assisted Java modernization project.

Use a modernization spiderweb, not a single score.

Assess these six dimensions:

1. Structural scope
   - modules, repositories, source roots, generated code, build files, deployment files, API contracts, shared libraries, ownership boundaries

2. Semantic complexity
   - framework behavior, persistence, security, transactions, reflection, classloading, generated code, app-server-specific APIs, serialization, concurrency, schedulers

3. Knowledge transparency
   - documentation, ADRs, internal frameworks, blueprints, conventions, code generators, examples, runbooks, ownership, known tribal knowledge gaps

4. Integration and data surface
   - APIs, queues, files, schemas, database contracts, partner formats, data type mappings, data conversion, downstream consumers, upstream producers

5. Feedback readiness
   - local build, CI health, test reliability, contract tests, integration environments, test data, observability, acceptance criteria

6. Operational and governance constraints
   - security, compliance, audit, release windows, change approval, production access, rollback, support ownership, SLOs

Also estimate:

7. Pass intensity
   - expected compile/test/repair loops per slice
   - expected human clarification points
   - likely causes of rework

8. Token accounting regime
   - provider and model
   - input/output/cached/reasoning token categories
   - tool schemas and file inputs included
   - prompt caching assumptions
   - log truncation and context compaction strategy

Produce:

- measured signals
- inferred risks
- weak spiderweb spokes
- AI assessment suitability
- recommended deterministic tools before LLM work
- representative calibration slices
- P50 and P80 token budget ranges by phase
- the assumptions that would invalidate the estimate

The important output is not the score. It is the assumptions that make the score fragile.

Concrete Java Examples

The spiderweb diagrams below are assumptions for illustration. They are not based on real project assessments, for obvious reasons, but they should give you an idea of the methodology.

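Remember: the scale is 1 to 5, where 1 means low effort or low uncertainty and 5 means high effort or high uncertainty. The text below rates knowledge transparency and feedback readiness in positive terms, so a “high” rating there sits close to the center of the chart.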

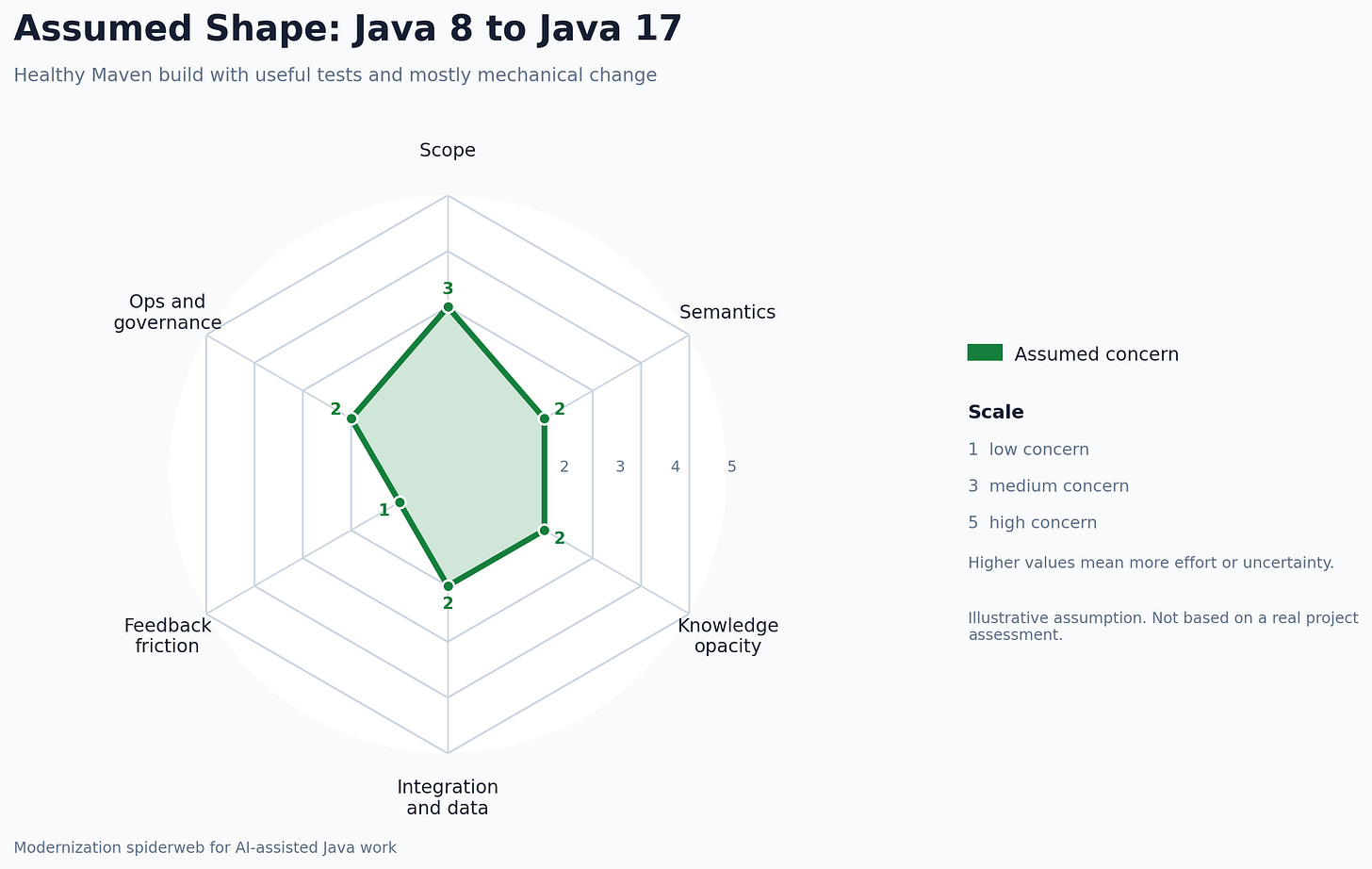

Java 8 to Java 17 in a Healthy Maven Build

Imagine a 20-module Maven application on Java 8. It has a working CI build, a decent unit test suite, and a small number of integration tests. The target is Java 17.

Structural scope - Medium to high. Twenty modules, parent POM changes, compiler settings, dependencies, tests.

Semantic complexity - Medium. The Java version jump matters, but many changes are mechanical if the app is not using deep reflection or unsupported JDK internals.

Knowledge transparency - Medium to high. Maven structure and dependency intent are visible. Internal conventions are documented well enough.

Integration and data surface - Low to medium unless the upgrade touches serialization, generated clients, or file formats.

Feedback readiness - High. CI runs. Tests mostly work. Failures mean something.

Operational and governance constraints - Usually manageable unless the runtime upgrade triggers security, container, or platform approval.

This is a good case for deterministic tools before LLMs. Run OpenRewrite Java 17 recipes first. Let the model inspect leftovers, explain failures, and handle ambiguous cases.

The estimate should not be based on “all Java files in the repo.” It should be based on recipe leftovers plus validation loops.
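To show how much the basis of the estimate matters, here is a small arithmetic sketch in Java. Everything in it is an assumption: the `LeftoverBudget` name, the per-file and per-loop token figures, and the file counts are invented for illustration and should be replaced with numbers from a calibration run.

```java
/** Hypothetical sketch: compare a naive "all files" budget with a leftover-based one. */
final class LeftoverBudget {

    /** Naive budget: every Java file goes through the model once. */
    static long naiveTokens(int allFiles, long tokensPerFile) {
        return allFiles * tokensPerFile;
    }

    /** Leftover-based budget: files the recipes could not finish, plus validation loops. */
    static long leftoverTokens(int leftoverFiles, long tokensPerFile,
                               int validationLoops, long tokensPerLoop) {
        return leftoverFiles * tokensPerFile + validationLoops * tokensPerLoop;
    }

    public static void main(String[] args) {
        // Invented numbers: 1,800 files in the repo, 140 still need attention after the recipes.
        long naive = naiveTokens(1_800, 6_000);
        long leftover = leftoverTokens(140, 6_000, 25, 40_000);
        System.out.println("naive budget:    " + naive);
        System.out.println("leftover budget: " + leftover);
    }
}
```

In this invented case the validation loops cost more than the leftover files themselves, which is why feedback readiness belongs in the estimate.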

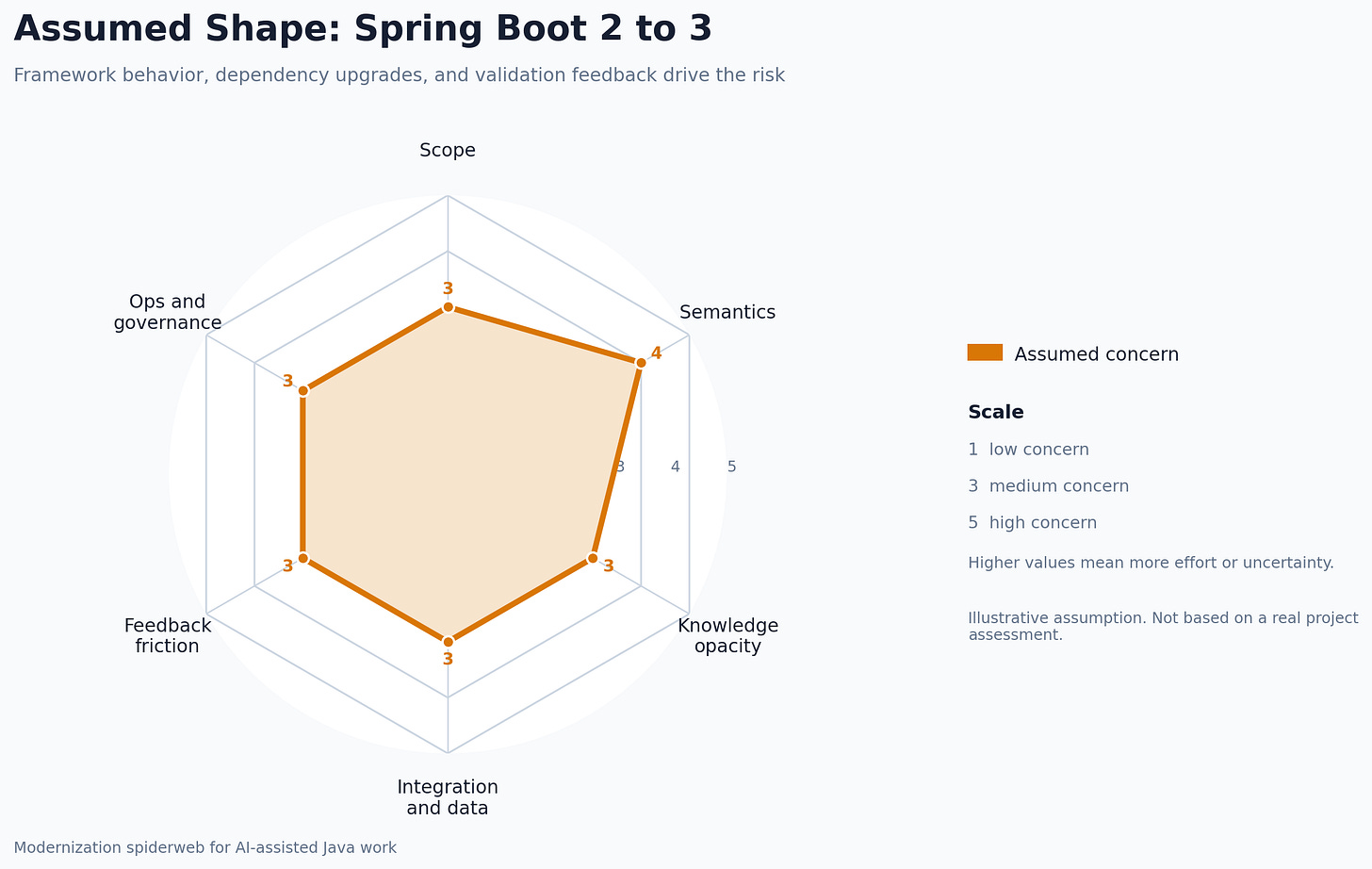

Spring Boot 2 to 3 With javax to jakarta Changes

Now take a Spring Boot 2 application moving to Spring Boot 3. The visible change is the javax.* to jakarta.* namespace move. The real work often hides in dependency compatibility, servlet filters, security configuration, validation annotations, generated clients, test libraries, and framework behavior changes.

Structural scope - Medium. The touched files may be many, but a lot are import changes.

Semantic complexity - Medium to high. Security and persistence behavior can change without looking dramatic in the diff.

Knowledge transparency - Depends. If the security model follows documented Spring conventions, good. If there is an internal authentication blueprint layered over Spring Security, this spoke can collapse quickly.

Integration and data surface - Often medium. REST payloads, validation behavior, generated clients, and downstream error handling can change.

Feedback readiness - This decides the bill. Good integration tests reduce the number of human/model interpretation loops. Weak tests turn this into archaeology.

Operational and governance constraints - Medium. Dependency upgrades, container base images, vulnerability scans, and release coordination can all matter.

I would split the work like this:

Mechanical namespace changes: use recipes, including OpenRewrite Jakarta migration recipes.

Dependency convergence: use build tooling and targeted model help.

Security config: inspect manually with the model as reviewer.

Persistence behavior: validate with integration tests and representative queries.

Generated code: exclude or regenerate.

Integration contracts: compare payload examples before and after.
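For the last item, comparing payload examples before and after, even a crude check helps. The Java sketch below compares two captured payloads line by line and reports the first line that differs. `PayloadDiff` is a hypothetical name, and the sketch assumes both payloads are serialized with the same pretty-printing. A real contract check would more likely compare parsed JSON or XML so that field order and whitespace do not produce false alarms.

```java
import java.util.List;
import java.util.OptionalInt;

/** Hypothetical sketch: find the first line where a payload changed after the upgrade. */
final class PayloadDiff {

    /** Returns the 1-based number of the first differing line, or empty if the payloads match. */
    static OptionalInt firstDifference(String before, String after) {
        // String.lines() splits on \n, \r and \r\n, so line-ending changes alone do not count.
        List<String> a = before.lines().toList();
        List<String> b = after.lines().toList();
        int common = Math.min(a.size(), b.size());
        for (int i = 0; i < common; i++) {
            if (!a.get(i).equals(b.get(i))) {
                return OptionalInt.of(i + 1);
            }
        }
        // One payload is a prefix of the other: the first extra line is the difference.
        return a.size() == b.size() ? OptionalInt.empty() : OptionalInt.of(common + 1);
    }

    public static void main(String[] args) {
        // Invented example: the date format of one field changed after the upgrade.
        String before = "{\n  \"id\": 42,\n  \"settledOn\": \"01.03.2024\"\n}";
        String after = "{\n  \"id\": 42,\n  \"settledOn\": \"2024-03-01\"\n}";
        OptionalInt diff = firstDifference(before, after);
        System.out.println(diff.isPresent()
                ? "payload changed at line " + diff.getAsInt()
                : "payloads match");
    }
}
```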

This is where a neat score can lie to you. The diff may look broad but shallow. The risk is not the import count. The risk is whether runtime behavior still matches production expectations.

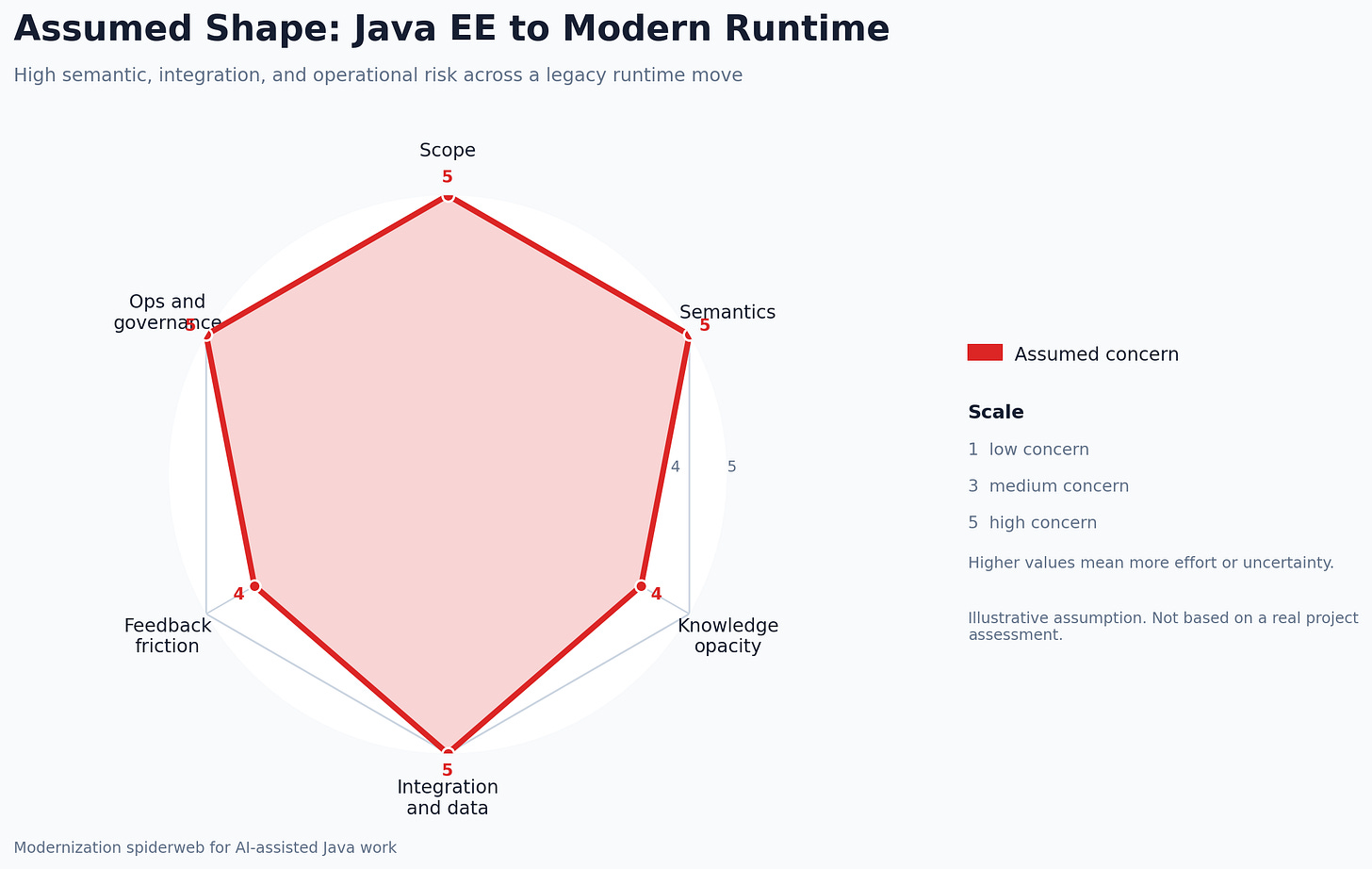

Old Java EE Application to Quarkus or Spring Boot

Now the fun one. A legacy Java EE application uses EJBs, JPA, JMS, JAX-RS, XML descriptors, app-server-specific resources, and a database schema that has opinions.

Structural scope - High. Code, descriptors, deployment model, messaging, transactions, configuration, tests, container image, operations docs.

Semantic complexity - High. Container-managed behavior must be replaced or made explicit. Transaction boundaries, security context, threading assumptions, and resource lookup patterns matter.

Knowledge transparency - Usually the swing factor. If the app follows documented internal blueprints, the work is hard but readable. If the framework is custom and undocumented, assessment becomes a project of its own.

Integration and data surface - High. JMS, database contracts, batch files, partner APIs, reports, and downstream consumers can dominate the work.

Feedback readiness - Usually mixed. There may be strong production knowledge and weak automated tests.

Operational and governance constraints - High. These systems are often business-critical, release windows are narrow, and rollback has to be planned before the first serious change.

For this kind of project, “modernize the application” is not a task. A slice might be:

one REST resource,

its service dependency,

its JPA entities and repositories,

the transaction behavior,

the message or file output it creates,

the configuration needed to run it locally,

and one acceptance test.

That slice gives you the spiderweb shape for the rest of the project. It also tells you whether the hard part is code, framework behavior, missing documentation, downstream contracts, or feedback quality.

What This Is Not

This is not the ultimate estimation model for AI modernization. I do not think that exists yet. I would distrust anything sold with that much confidence.

The version I trust is smaller:

The original three axes are a useful first sketch.

Established modernization approaches show why the sketch is incomplete.

Knowledge transparency and integration surface need their own place.

Operational constraints are part of the work, not an afterthought.

AI assessment suitability is an outcome of the spiderweb, not a marketing badge.

Calibration slices turn gut feeling into evidence.

For Java modernization, that is already a decent step forward. Not because it predicts the future perfectly, but because it gives developers better questions before the future starts billing by the token.