Build Hybrid MCP Tool Agents in Quarkus

A hands-on walkthrough that shows where MCP tools belong, where the model belongs, and how the full LangChain4j flow fits together.

I like agent workflows right up until somebody turns trim().toUpperCase() into a planning problem.

Some steps in a pipeline need language judgment. Others need to do one deterministic thing, do it fast, and stop there. Hashing a payload, cleaning up a record ID, or enforcing a fixed mapping does not improve because a model got involved. It just gets slower and harder to reason about.

That is why LangChain4j’s langchain4j-agentic-mcp module is interesting. It lets an MCP tool show up as a node inside an agentic graph, so the topology still tells the truth about the workflow while the boring stages stay boring. For me, that is the useful part of the whole idea.

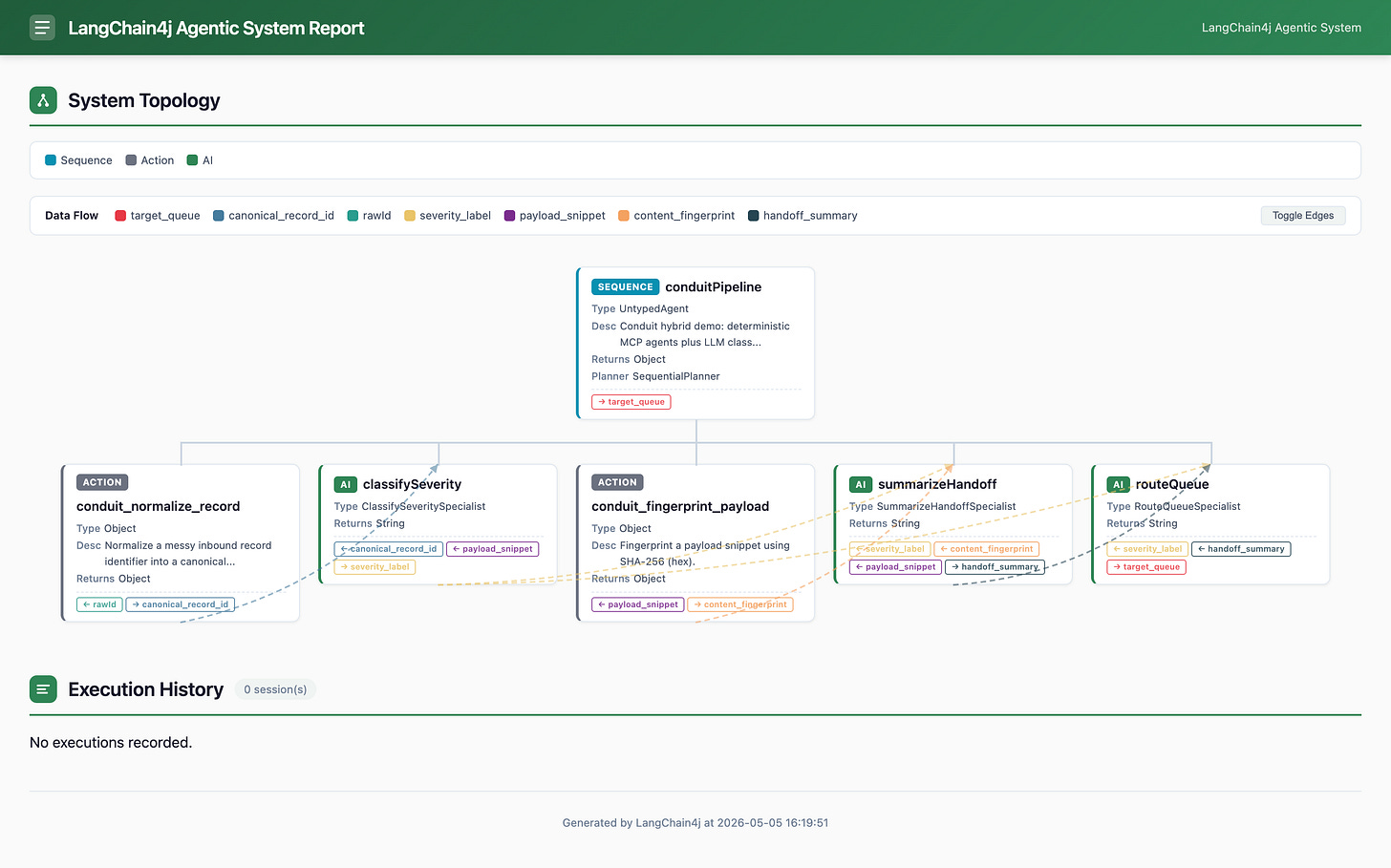

In this piece we build Conduit, a small hybrid system split across two Quarkus apps. conduit-mcp-server exposes two tools over Streamable HTTP on /mcp. conduit-workflow runs a five-step sequence with two MCP tool agents and three LLM-backed agents, then renders the whole thing as HTML on GET /topology.

The tests keep us honest. ConduitWorkflowIT packages the sibling MCP server, boots its runnable jar on a free localhost port, overrides conduit.mcp.server-url, and runs the workflow against local Ollama. No stub MCP client, no fake model, and much less room for a reassuring lie.

What we build

You end up with three things:

- conduit-mcp-server, a tiny Quarkus MCP server with conduit_normalize_record and conduit_fingerprint_payload
- conduit-workflow, a five-stage LangChain4j sequence: normalize -> classifySeverity -> fingerprint -> summarizeHandoff -> routeQueue
- tests that hit the real shapes: McpAssured for the server module, and ConduitWorkflowIT for the full workflow with a launched MCP JVM and live Ollama

Architecture

flowchart LR

subgraph wfApp [conduit_workflow]

runs["POST /runs"]

seq["conduitPipeline sequence"]

mcpNorm["McpAgent conduit_normalize_record"]

aiCls["ChatModel classifySeverity"]

mcpFp["McpAgent conduit_fingerprint_payload"]

aiSum["ChatModel summarizeHandoff"]

aiRt["ChatModel routeQueue"]

monitor["AgentMonitor listener"]

topo["GET /topology HTML"]

runs --> seq

seq --> mcpNorm --> aiCls --> mcpFp --> aiSum --> aiRt

seq --- monitor --- topo

end

subgraph mcpApp [conduit_mcp_server]

toolNorm["Tool conduit_normalize_record"]

toolFp["Tool conduit_fingerprint_payload"]

end

mcpNorm -->|"Streamable HTTP MCP"| mcpApp

mcpFp -->|"Streamable HTTP MCP"| mcpApp

What you need

This assumes you have run Quarkus apps before and do not mind two terminals.

- JDK 25
- Quarkus CLI, if you want to use the exact quarkus create app commands below
- Ollama listening on http://localhost:11434, with the llama3.2 model pulled (ollama pull llama3.2)
- a beverage of your choice. Maybe a ☕️
- a little patience on the first conduit-workflow test run, because it packages the sibling MCP module before starting the workflow test JVM

If Ollama listens somewhere else, export CONDUIT_TEST_OLLAMA_BASE_URL or pass -Dconduit.test.ollama-base-url=... before you run the workflow tests.
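If you want the same override in your own tests, the lookup can stay very small. This is a minimal sketch, assuming the system property wins over the environment variable and that http://localhost:11434 is the fallback; the class name OllamaBaseUrl is hypothetical, and the repository's test may resolve the value differently.

```java
import java.util.HashMap;
import java.util.Map;

/**
 * Hypothetical helper that resolves the Ollama base URL for the workflow tests.
 * Assumed precedence: system property, then environment variable, then the local default.
 */
class OllamaBaseUrl {

    static final String PROPERTY = "conduit.test.ollama-base-url";
    static final String ENV_VAR = "CONDUIT_TEST_OLLAMA_BASE_URL";
    static final String DEFAULT_URL = "http://localhost:11434";

    // The maps are parameters so the lookup order can be tested without touching real JVM state.
    static String resolve(Map<String, String> systemProperties, Map<String, String> environment) {
        String value = firstNonBlank(systemProperties.get(PROPERTY), environment.get(ENV_VAR));
        if (value == null) {
            return DEFAULT_URL;
        }
        // Drop a trailing slash so callers can append paths such as "/api/tags".
        return value.endsWith("/") ? value.substring(0, value.length() - 1) : value;
    }

    private static String firstNonBlank(String... candidates) {
        for (String candidate : candidates) {
            if (candidate != null && !candidate.isBlank()) {
                return candidate.trim();
            }
        }
        return null;
    }

    public static void main(String[] args) {
        Map<String, String> props = new HashMap<>();
        System.getProperties().forEach((k, v) -> props.put(String.valueOf(k), String.valueOf(v)));
        System.out.println("Ollama base URL: " + resolve(props, System.getenv()));
    }
}
```

Passing the maps in, rather than calling System.getProperty and System.getenv inside resolve, is only there to make the precedence testable.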

Project setup

Create both modules without codestarts so the package layout stays predictable. Or start from my GitHub repository if you like.

mkdir conduit-hybrid-agents && cd conduit-hybrid-agents

quarkus create app dev.conduit:conduit-mcp-server \
  --extension=quarkus-mcp-server-http \
  --java=25 \
  --no-code \
  --package-name=dev.conduit.mcp

quarkus create app dev.conduit:conduit-workflow \
  --extension=quarkus-rest-jackson,quarkus-langchain4j-ollama \
  --java=25 \
  --no-code \
  --package-name=dev.conduit.workflow

The extensions and what they do:

- quarkus-mcp-server-http exposes tools over Streamable HTTP
- quarkus-rest-jackson gives us /runs and /topology
- quarkus-langchain4j-ollama provides the injectable ChatModel for the three LLM-backed stages

Each generated module already imports io.quarkus.platform:quarkus-bom at 3.35.2. The server module also imports quarkus-mcp-server-bom, and the workflow module imports quarkus-langchain4j-bom, which is where the LangChain4j agent versions come from in this repo.

Add the agent runtime in conduit-workflow/pom.xml:

<dependency>

<groupId>dev.langchain4j</groupId>

<artifactId>langchain4j-agentic</artifactId>

</dependency>

<dependency>

<groupId>dev.langchain4j</groupId>

<artifactId>langchain4j-agentic-mcp</artifactId>

</dependency>

Add rest-assured as a test dependency in both modules. I still prefer spelling that one out instead of relying on whatever happened to arrive transitively:

<dependency>

<groupId>io.rest-assured</groupId>

<artifactId>rest-assured</artifactId>

<scope>test</scope>

</dependency>

In conduit-mcp-server, add quarkus-mcp-server-test so McpAssured can talk MCP without you hand-building JSON-RPC payloads:

<dependency>

<groupId>io.quarkiverse.mcp</groupId>

<artifactId>quarkus-mcp-server-test</artifactId>

<scope>test</scope>

</dependency>

I also keep a small aggregator pom.xml beside both modules. It does not change runtime behavior. It just lets the IDE treat the sample as one Maven reactor, which is more pleasant than pretending the two apps are unrelated.

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 https://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>dev.conduit</groupId>

<artifactId>conduit-hybrid-agents</artifactId>

<version>1.0.0-SNAPSHOT</version>

<packaging>pom</packaging>

<name>Conduit hybrid agents (aggregator)</name>

<modules>

<module>conduit-mcp-server</module>

<module>conduit-workflow</module>

</modules>

</project>

Build the MCP side

The server app only needs one bit of config so it does not fight the workflow app for port 8080. Add the following to src/main/resources/application.properties:

quarkus.application.name=conduit-mcp-server

quarkus.http.port=8090

Then add the tools in dev.conduit.mcp.ConduitMcpTools.java:

package dev.conduit.mcp;

import java.nio.charset.StandardCharsets;

import java.security.MessageDigest;

import java.security.NoSuchAlgorithmException;

import java.util.HexFormat;

import java.util.Locale;

import io.quarkiverse.mcp.server.Tool;

import io.quarkiverse.mcp.server.ToolArg;

import jakarta.enterprise.context.ApplicationScoped;

@ApplicationScoped

public class ConduitMcpTools {

@Tool(description = "Normalize a messy inbound record identifier into a canonical uppercase token.")

public String conduit_normalize_record(@ToolArg(description = "Raw record id from upstream") String rawId) {

if (rawId == null || rawId.isBlank()) {

return "";

}

return rawId.trim().toUpperCase(Locale.ROOT).replaceAll("\\s+", "");

}

@Tool(description = "Fingerprint a payload snippet using SHA-256 (hex).")

public String conduit_fingerprint_payload(

@ToolArg(description = "Payload body or JSON fragment") String payload_snippet)

throws NoSuchAlgorithmException {

String normalized = payload_snippet == null ? "" : payload_snippet;

MessageDigest digest = MessageDigest.getInstance("SHA-256");

byte[] hash = digest.digest(normalized.getBytes(StandardCharsets.UTF_8));

return HexFormat.of().formatHex(hash);

}

}

conduit_normalize_record trims the record ID, uppercases it, and removes any remaining whitespace. conduit_fingerprint_payload hashes the payload exactly as received and returns a 64-character lowercase hex string. Nothing here needs a model, and that is the whole point.
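Because neither tool needs Quarkus to do its work, you can replay both bodies in plain Java and see what "deterministic" means in practice. ToolLogicCheck is a hypothetical name; the two methods copy the tool logic above, with the fingerprint wrapping the checked exception instead of declaring it.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.HexFormat;
import java.util.Locale;

/** Plain-JDK replay of the two tool bodies, outside Quarkus and MCP. */
class ToolLogicCheck {

    // Same expression as conduit_normalize_record.
    static String normalize(String rawId) {
        if (rawId == null || rawId.isBlank()) {
            return "";
        }
        return rawId.trim().toUpperCase(Locale.ROOT).replaceAll("\\s+", "");
    }

    // Same hashing as conduit_fingerprint_payload: SHA-256 over the UTF-8 bytes, lowercase hex.
    static String fingerprint(String payloadSnippet) {
        String input = payloadSnippet == null ? "" : payloadSnippet;
        try {
            MessageDigest digest = MessageDigest.getInstance("SHA-256");
            return HexFormat.of().formatHex(digest.digest(input.getBytes(StandardCharsets.UTF_8)));
        } catch (NoSuchAlgorithmException e) {
            // Every Java platform must support SHA-256, so this is not expected to happen.
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println(normalize(" abc-42\t")); // ABC-42
        System.out.println(normalize(" demo 42 ")); // DEMO42: inner whitespace is removed as well
        System.out.println(fingerprint("abc"));     // ba7816bf8f01cfea414140de5dae2223b00361a396177a9cb410ff61f20015ad
        // The payload is hashed exactly as received, so reformatted JSON gets a different fingerprint.
        System.out.println(fingerprint("{\"event\":\"demo\"}").equals(fingerprint("{ \"event\": \"demo\" }"))); // false
    }
}
```

The last line is the practical consequence of "exactly as received": if two producers serialize the same event with different spacing, they will not share a fingerprint.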

The workflow resource later rejects a blank rawId, so the empty-string fallback in the tool is defensive rather than the main validation path. I like that split. The HTTP boundary handles request shape, and the MCP tool stays deterministic.
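For a sense of what crosses the wire, here is the JSON-RPC 2.0 tools/call request an MCP client sends for the normalize tool. It is a sketch: a real client first runs the initialize handshake, the Streamable HTTP transport POSTs this body to /mcp, and production code should use a JSON library rather than the minimal escaper below. ToolsCallRequest is a hypothetical name; McpAssured and LangChain4j's McpClient build these messages for you.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.StringJoiner;

/** Hand-builds an MCP "tools/call" JSON-RPC request. Illustration only. */
class ToolsCallRequest {

    static String build(int id, String toolName, Map<String, String> arguments) {
        StringJoiner args = new StringJoiner(",", "{", "}");
        arguments.forEach((k, v) -> args.add(quote(k) + ":" + quote(v)));
        return "{\"jsonrpc\":\"2.0\",\"id\":" + id
                + ",\"method\":\"tools/call\""
                + ",\"params\":{\"name\":" + quote(toolName) + ",\"arguments\":" + args + "}}";
    }

    // Minimal JSON string escaping: quotes, backslashes and control characters.
    static String quote(String s) {
        StringBuilder out = new StringBuilder("\"");
        for (char c : s.toCharArray()) {
            switch (c) {
                case '"' -> out.append("\\\"");
                case '\\' -> out.append("\\\\");
                case '\n' -> out.append("\\n");
                case '\r' -> out.append("\\r");
                case '\t' -> out.append("\\t");
                default -> {
                    if (c < 0x20) {
                        out.append(String.format("\\u%04x", (int) c));
                    } else {
                        out.append(c);
                    }
                }
            }
        }
        return out.append('"').toString();
    }

    public static void main(String[] args) {
        Map<String, String> arguments = new LinkedHashMap<>();
        arguments.put("rawId", " abc-42\t");
        System.out.println(build(2, "conduit_normalize_record", arguments));
        // {"jsonrpc":"2.0","id":2,"method":"tools/call","params":{"name":"conduit_normalize_record","arguments":{"rawId":" abc-42\t"}}}
    }
}
```

The argument key is the Java parameter name of the tool method (rawId, payload_snippet), which is why those names matter as much as the tool names.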

Test the tools over MCP

io.quarkiverse.mcp.server.test.McpAssured speaks the same Streamable HTTP MCP dialect the server exposes, which means the test stays boring in the best possible way:

package dev.conduit.mcp;

import static org.junit.jupiter.api.Assertions.assertEquals;

import static org.junit.jupiter.api.Assertions.assertFalse;

import java.util.Map;

import org.junit.jupiter.api.Test;

import io.quarkiverse.mcp.server.test.McpAssured;

import io.quarkiverse.mcp.server.test.McpAssured.McpStreamableTestClient;

import io.quarkus.test.junit.QuarkusTest;

@QuarkusTest

class ConduitMcpToolsIT {

@Test

void normalizeRecordToolReturnsCanonicalToken() {

try (McpStreamableTestClient client = McpAssured.newStreamableClient()

.setMcpPath("/mcp")

.build()

.connect()) {

client.when()

.toolsCall("conduit_normalize_record")

.withArguments(Map.of("rawId", " abc-42\t"))

.withAssert(response -> assertEquals("ABC-42", response.firstContent().asText().text()))

.send()

.thenAssertResults();

}

}

@Test

void fingerprintPayloadReturnsStableSha256Hex() {

try (McpStreamableTestClient client = McpAssured.newStreamableClient()

.setMcpPath("/mcp")

.build()

.connect()) {

client.when()

.toolsCall("conduit_fingerprint_payload")

.withArguments(Map.of("payload_snippet", "{\"event\":\"demo\"}"))

.withAssert(response -> {

assertFalse(response.isError());

String hex = response.firstContent().asText().text();

assertEquals(64, hex.length());

})

.send()

.thenAssertResults();

}

}

}

That is enough to prove the tool boundary works without turning the article into a testing catalog. To run it quickly:

./mvnw verify -DskipITs=false

Build the workflow side

The workflow module points at the sibling MCP server and the local Ollama runtime. Add the following to its src/main/resources/application.properties:

quarkus.application.name=conduit-workflow

conduit.mcp.server-url=http://127.0.0.1:8090/mcp

quarkus.langchain4j.ollama.base-url=http://localhost:11434

quarkus.langchain4j.ollama.chat-model.model-id=llama3.2

Wire the MCP client

Typed config stays short:

package dev.conduit.workflow.config;

import io.smallrye.config.ConfigMapping;

@ConfigMapping(prefix = "conduit.mcp")

public interface ConduitMcpConfig {

String serverUrl();

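}

SmallRye Config's @ConfigMapping uses kebab-case naming by default, so serverUrl() binds to conduit.mcp.server-url. Then we build a single McpClient bean and close it on shutdown: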

package dev.conduit.workflow.mcp;

import dev.conduit.workflow.config.ConduitMcpConfig;

import dev.langchain4j.mcp.client.DefaultMcpClient;

import dev.langchain4j.mcp.client.McpClient;

import dev.langchain4j.mcp.client.transport.http.StreamableHttpMcpTransport;

import jakarta.annotation.PreDestroy;

import jakarta.enterprise.context.ApplicationScoped;

import jakarta.inject.Inject;

@ApplicationScoped

public class ConduitMcpBridge {

private final McpClient client;

@Inject

public ConduitMcpBridge(ConduitMcpConfig config) {

var transport = StreamableHttpMcpTransport.builder().url(config.serverUrl()).build();

this.client = new DefaultMcpClient.Builder().transport(transport).build();

}

public McpClient client() {

return client;

}

@PreDestroy

void shutdown() throws Exception {

client.close();

}

}

This is one of those classes that should stay dull. It owns the transport, exposes the client, and gets out of the way. To be clear, you do not strictly need it: ConduitTopology, later in the article, could build and own the McpClient directly. I was playing with mock approaches, left this in as an artifact, and was too lazy to remove it :-) Consider it the human touch.

Define the LLM stages

Each LLM-backed stage lives in its own @CreatedAware interface. The prompts are deliberately narrow because I want classification, summarization, and routing, not a spontaneous novel.

ClassifySeveritySpecialist.java:

package dev.conduit.workflow.agents;

import dev.langchain4j.agentic.Agent;

import dev.langchain4j.service.UserMessage;

import dev.langchain4j.service.V;

import io.quarkiverse.langchain4j.CreatedAware;

@CreatedAware

public interface ClassifySeveritySpecialist {

@Agent

@UserMessage("Reply with exactly one token: LOW, MEDIUM, or HIGH based on payload severity cues. Canonical record ID: {{canonical_record_id}}. Payload snippet: {{payload_snippet}}")

String classify(

@V("canonical_record_id") String canonicalRecordId,

@V("payload_snippet") String payloadSnippet);

}

SummarizeHandoffSpecialist.java:

package dev.conduit.workflow.agents;

import dev.langchain4j.agentic.Agent;

import dev.langchain4j.service.UserMessage;

import dev.langchain4j.service.V;

import io.quarkiverse.langchain4j.CreatedAware;

@CreatedAware

public interface SummarizeHandoffSpecialist {

@Agent

@UserMessage("Produce under 140 characters summarizing severity and fingerprint for ops. Severity: {{severity_label}}. Fingerprint: {{content_fingerprint}}. Payload: {{payload_snippet}}")

String summarize(

@V("severity_label") String severityLabel,

@V("content_fingerprint") String contentFingerprint,

@V("payload_snippet") String payloadSnippet);

}

RouteQueueSpecialist.java:

package dev.conduit.workflow.agents;

import dev.langchain4j.agentic.Agent;

import dev.langchain4j.service.UserMessage;

import dev.langchain4j.service.V;

import io.quarkiverse.langchain4j.CreatedAware;

@CreatedAware

public interface RouteQueueSpecialist {

@Agent

@UserMessage("Return exactly one queue token: ops-general, ops-priority, or ops-security. Severity: {{severity_label}}. Summary: {{handoff_summary}}")

String route(

@V("severity_label") String severityLabel,

@V("handoff_summary") String handoffSummary);

}

@CreatedAware matters here because AgenticServices.agentBuilder(Class) needs Quarkus-friendly creation semantics. The more important point is narrower: each stage has one job. If you let those prompts drift into vague “helpful assistant” territory, the topology still looks clean while the output gets much harder to trust.
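The {{...}} placeholders in each @UserMessage are filled from the parameters with matching @V names. The stand-in below is hypothetical (LangChain4j ships its own template engine), but it shows the behavior you want from a narrow prompt: a placeholder without a value fails loudly instead of reaching the model as empty text.

```java
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical stand-in for prompt templating; not LangChain4j's implementation. */
class PromptTemplateSketch {

    private static final Pattern PLACEHOLDER = Pattern.compile("\\{\\{\\s*([A-Za-z0-9_]+)\\s*\\}\\}");

    // Replaces every {{name}} with its value; throws if a name has no value.
    static String render(String template, Map<String, ?> variables) {
        Matcher m = PLACEHOLDER.matcher(template);
        StringBuilder out = new StringBuilder();
        while (m.find()) {
            String name = m.group(1);
            if (!variables.containsKey(name)) {
                throw new IllegalArgumentException("No value for template variable '" + name + "'");
            }
            // quoteReplacement keeps '$' and '\' in values from being read as group references.
            m.appendReplacement(out, Matcher.quoteReplacement(String.valueOf(variables.get(name))));
        }
        m.appendTail(out);
        return out.toString();
    }

    public static void main(String[] args) {
        String template = "Return exactly one queue token: ops-general, ops-priority, or ops-security. "
                + "Severity: {{severity_label}}. Summary: {{handoff_summary}}";
        System.out.println(render(template,
                Map.of("severity_label", "HIGH", "handoff_summary", "Repeated auth failures on ABC-42")));
        try {
            // Misspelled key: fails before anything reaches the model.
            render(template, Map.of("severity_label", "HIGH", "handoff_sumary", "typo"));
        } catch (IllegalArgumentException e) {
            System.out.println(e.getMessage()); // No value for template variable 'handoff_summary'
        }
    }
}
```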

Assemble the hybrid pipeline

ConduitTopology is where the whole thing becomes a real graph instead of a pile of nice intentions:

package dev.conduit.workflow.agents;

import java.util.Map;

import org.jboss.logging.Logger;

import dev.conduit.workflow.mcp.ConduitMcpBridge;

import dev.langchain4j.agentic.AgenticServices;

import dev.langchain4j.agentic.UntypedAgent;

import dev.langchain4j.agentic.mcp.McpAgent;

import dev.langchain4j.agentic.observability.AgentMonitor;

import dev.langchain4j.model.chat.ChatModel;

import jakarta.annotation.PostConstruct;

import jakarta.enterprise.context.ApplicationScoped;

import jakarta.inject.Inject;

@ApplicationScoped

public class ConduitTopology {

private static final Logger LOG = Logger.getLogger(ConduitTopology.class);

private final ChatModel chatModel;

private final ConduitMcpBridge mcpBridge;

private final AgentMonitor monitor;

private UntypedAgent pipeline;

@Inject

public ConduitTopology(ChatModel chatModel, ConduitMcpBridge mcpBridge) {

this.chatModel = chatModel;

this.mcpBridge = mcpBridge;

this.monitor = new AgentMonitor();

}

@PostConstruct

void buildPipeline() {

UntypedAgent normalize = McpAgent.builder(mcpBridge.client())

.toolName("conduit_normalize_record")

.inputKeys("rawId")

.outputKey("canonical_record_id")

.build();

ClassifySeveritySpecialist classify = AgenticServices.agentBuilder(ClassifySeveritySpecialist.class)

.chatModel(chatModel)

.name("classifySeverity")

.outputKey("severity_label")

.build();

UntypedAgent fingerprint = McpAgent.builder(mcpBridge.client())

.toolName("conduit_fingerprint_payload")

.inputKeys("payload_snippet")

.outputKey("content_fingerprint")

.build();

SummarizeHandoffSpecialist summarize = AgenticServices.agentBuilder(SummarizeHandoffSpecialist.class)

.chatModel(chatModel)

.name("summarizeHandoff")

.outputKey("handoff_summary")

.build();

RouteQueueSpecialist route = AgenticServices.agentBuilder(RouteQueueSpecialist.class)

.chatModel(chatModel)

.name("routeQueue")

.outputKey("target_queue")

.build();

pipeline = AgenticServices.sequenceBuilder()

.subAgents(normalize, classify, fingerprint, summarize, route)

.listener(monitor)

.name("conduitPipeline")

.description("Conduit hybrid demo: deterministic MCP agents plus LLM classification chain")

.outputKey("target_queue")

.build();

}

public AgentMonitor monitor() {

return monitor;

}

public String run(Map<String, Object> inputs) {

Object out = pipeline.invoke(inputs);

String queue = out != null ? out.toString() : "";

LOG.infov("Conduit finished target_queue={0}", queue);

return queue;

}

}

McpAgent.builder(...) turns each remote tool into an UntypedAgent, which means the deterministic stages can sit in the same sequence as the LLM stages without pretending they are the same kind of work. AgentMonitor hangs off the sequence root, so LangChain4j can later render the observed topology as HTML.
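To see why MCP and LLM stages can share one sequence, it helps to model the idea without any framework. The toy below is not the LangChain4j API: Stage, runSequence, and the lambdas standing in for model calls are hypothetical. Each stage reads named keys from shared state and writes exactly one key back, mirroring the inputKeys/outputKey wiring above.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Locale;
import java.util.Map;
import java.util.function.Function;

/** Toy sequential graph over a shared state map. Not the LangChain4j API. */
class SequenceSketch {

    // One node: reads inputKeys from shared state, writes a single outputKey.
    record Stage(String name, List<String> inputKeys, String outputKey,
                 Function<Map<String, Object>, Object> body) {}

    static Map<String, Object> runSequence(List<Stage> stages, Map<String, Object> initial) {
        Map<String, Object> state = new HashMap<>(initial);
        for (Stage stage : stages) {
            Map<String, Object> args = new HashMap<>();
            for (String key : stage.inputKeys()) {
                args.put(key, state.get(key)); // an unknown key quietly becomes null
            }
            state.put(stage.outputKey(), stage.body().apply(args));
        }
        return state;
    }

    static Object demo() {
        List<Stage> stages = List.of(
            // Deterministic stage, same idea as conduit_normalize_record.
            new Stage("normalize", List.of("rawId"), "canonical_record_id",
                in -> String.valueOf(in.get("rawId")).trim().toUpperCase(Locale.ROOT)),
            // Stub where a ChatModel call would go; a keyword check keeps the sketch offline.
            new Stage("classifySeverity", List.of("payload_snippet"), "severity_label",
                in -> String.valueOf(in.get("payload_snippet")).contains("HIGH") ? "HIGH" : "LOW"),
            // Another stub, standing in for the routing stage.
            new Stage("routeQueue", List.of("severity_label"), "target_queue",
                in -> "HIGH".equals(in.get("severity_label")) ? "ops-priority" : "ops-general"));
        Map<String, Object> state = runSequence(stages,
            Map.of("rawId", " demo-42 ", "payload_snippet", "{\"severity_hint\":\"HIGH\"}"));
        return state.get("target_queue");
    }

    public static void main(String[] args) {
        System.out.println(demo()); // ops-priority
    }
}
```

The comment on the null lookup is the interesting line: a shared-state sequence does not complain about a key nobody wrote, it just hands the next stage nothing.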

Be picky about the input and output keys. rawId, canonical_record_id, severity_label, content_fingerprint, handoff_summary, and target_queue are the contracts between stages. Misspell one and you get silence dressed as orchestration.
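Because the keys are plain strings, a misspelling only shows up at runtime. A cheap guard is a pre-flight walk over the stages that flags any key read before something writes it. KeyContractCheck is hypothetical and not a LangChain4j helper; the stage list mirrors ConduitTopology, with the fingerprint key passed in so a typo can be simulated.

```java
import java.util.ArrayList;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Set;

/** Hypothetical wiring check for a sequential pipeline's input/output keys. */
class KeyContractCheck {

    record StageKeys(String name, List<String> inputKeys, String outputKey) {}

    // Returns one message per input key that neither the caller nor an earlier stage provides.
    static List<String> missingKeys(Set<String> initialKeys, List<StageKeys> stages) {
        Set<String> available = new LinkedHashSet<>(initialKeys);
        List<String> problems = new ArrayList<>();
        for (StageKeys stage : stages) {
            for (String key : stage.inputKeys()) {
                if (!available.contains(key)) {
                    problems.add(stage.name() + " reads '" + key + "' before anything writes it");
                }
            }
            available.add(stage.outputKey());
        }
        return problems;
    }

    // The Conduit wiring, with the fingerprint key that summarizeHandoff reads as a parameter.
    static List<StageKeys> conduit(String summarizeFingerprintKey) {
        return List.of(
            new StageKeys("normalize", List.of("rawId"), "canonical_record_id"),
            new StageKeys("classifySeverity", List.of("canonical_record_id", "payload_snippet"), "severity_label"),
            new StageKeys("fingerprint", List.of("payload_snippet"), "content_fingerprint"),
            new StageKeys("summarizeHandoff",
                List.of("severity_label", summarizeFingerprintKey, "payload_snippet"), "handoff_summary"),
            new StageKeys("routeQueue", List.of("severity_label", "handoff_summary"), "target_queue"));
    }

    public static void main(String[] args) {
        Set<String> initial = Set.of("rawId", "payload_snippet");
        System.out.println(missingKeys(initial, conduit("content_fingerprint"))); // []
        System.out.println(missingKeys(initial, conduit("content_fingerprnt")));
        // [summarizeHandoff reads 'content_fingerprnt' before anything writes it]
    }
}
```

Run as a unit test, a check like this turns "silence dressed as orchestration" into a failing build.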

Add the HTTP boundary

The workflow app exposes exactly two endpoints. RunRequest is record RunRequest(String rawId, String payloadSnippet), and RunResponse is record RunResponse(String targetQueue). The resource itself does the only validation we need:

package dev.conduit.workflow.api;

import java.util.HashMap;

import java.util.Map;

import dev.conduit.workflow.agents.ConduitTopology;

import jakarta.enterprise.context.ApplicationScoped;

import jakarta.inject.Inject;

import jakarta.ws.rs.Consumes;

import jakarta.ws.rs.POST;

import jakarta.ws.rs.Path;

import jakarta.ws.rs.Produces;

import jakarta.ws.rs.core.MediaType;

@Path("/runs")

@ApplicationScoped

@Produces(MediaType.APPLICATION_JSON)

@Consumes(MediaType.APPLICATION_JSON)

public class RunResource {

private final ConduitTopology conduitTopology;

@Inject

public RunResource(ConduitTopology conduitTopology) {

this.conduitTopology = conduitTopology;

}

@POST

public RunResponse run(RunRequest request) {

if (request == null || request.rawId() == null || request.rawId().isBlank()) {

throw new jakarta.ws.rs.WebApplicationException(

jakarta.ws.rs.core.Response.status(jakarta.ws.rs.core.Response.Status.BAD_REQUEST)

.entity("{\"error\":\"rawId must be non-blank\"}")

.build());

}

Map<String, Object> inputs = new HashMap<>();

inputs.put("rawId", request.rawId().trim());

inputs.put("payload_snippet", request.payloadSnippet() == null ? "" : request.payloadSnippet());

String queue = conduitTopology.run(inputs);

return new RunResponse(queue);

}

}

TopologyResource is even smaller. HtmlReportGenerator.generateReport(...) already returns a complete HTML document.

package dev.conduit.workflow.api;

import dev.conduit.workflow.agents.ConduitTopology;

import dev.langchain4j.agentic.observability.HtmlReportGenerator;

import jakarta.enterprise.context.ApplicationScoped;

import jakarta.inject.Inject;

import jakarta.ws.rs.GET;

import jakarta.ws.rs.Path;

import jakarta.ws.rs.Produces;

import jakarta.ws.rs.core.MediaType;

@Path("/topology")

@ApplicationScoped

public class TopologyResource {

private final ConduitTopology conduitTopology;

@Inject

public TopologyResource(ConduitTopology conduitTopology) {

this.conduitTopology = conduitTopology;

}

@GET

@Produces(MediaType.TEXT_HTML)

public String topologyHtml() {

return HtmlReportGenerator.generateReport(conduitTopology.monitor());

}

}

That gives us a small HTTP surface and a much more interesting internal graph. POST /runs accepts the incoming record and payload. GET /topology shows you exactly which stages exist and, after a run, which ones were invoked.
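If you would rather drive POST /runs from Java than from curl, the JDK's java.net.http client is enough. A minimal sketch, assuming the dev-mode base URL http://localhost:8080; RunsClient and its hand-rolled JSON are hypothetical and only escape quotes and backslashes.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

/** Hypothetical JDK-only client for the workflow's POST /runs endpoint. */
class RunsClient {

    // Builds the request body {"rawId": ..., "payloadSnippet": ...} that RunRequest expects.
    static HttpRequest runRequest(String baseUrl, String rawId, String payloadSnippet) {
        String body = "{\"rawId\":" + json(rawId) + ",\"payloadSnippet\":" + json(payloadSnippet) + "}";
        return HttpRequest.newBuilder(URI.create(baseUrl + "/runs"))
                .header("Content-Type", "application/json")
                .header("Accept", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(body))
                .build();
    }

    // Escapes only backslashes and quotes: enough for the sample inputs, not for arbitrary text.
    static String json(String s) {
        return s == null ? "null" : "\"" + s.replace("\\", "\\\\").replace("\"", "\\\"") + "\"";
    }

    public static void main(String[] args) throws Exception {
        HttpRequest request = runRequest("http://localhost:8080", " demo-42 ", "{\"severity_hint\":\"HIGH\"}");
        HttpResponse<String> response = HttpClient.newHttpClient()
                .send(request, HttpResponse.BodyHandlers.ofString());
        // Expect 200 with {"targetQueue": ...}, or 400 when rawId is blank.
        System.out.println(response.statusCode() + " " + response.body());
    }
}
```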

I have skipped the tests here. They can be found in my GitHub repository.

Configuration that breaks in real life

Three things are worth naming once because they are the ones that actually waste time.

Wrong conduit.mcp.server-url - The value must point to the MCP endpoint, not just the server root. In this sample that means http://127.0.0.1:8090/mcp. If StreamableHttpMcpTransport cannot complete the MCP handshake, the workflow is dead on arrival.

Reachable Ollama, missing model - The workflow tests verify that the Ollama base URL answers before they do anything expensive. That still does not pull the configured model for you. If the model set in quarkus.langchain4j.ollama.chat-model.model-id (llama3.2 here) is not available locally, the LLM stages fail when the workflow reaches them. Run ollama pull llama3.2 once to avoid that.

Public topology page - /topology is useful because it shows the graph and recent invocation breadcrumbs. That also makes it a terrible thing to expose on a public edge without auth.
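The first two failure modes are cheap to catch before the first run. The sketch below assumes two things that hold for this sample but are not general rules: the MCP endpoint path is /mcp, and the Ollama server answers GET /api/tags with a JSON object whose models entries carry a name such as llama3.2:latest. PreflightChecks and its methods are hypothetical, and the regex is a dependency-free shortcut in place of a JSON parser.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical startup checks for the two configuration mistakes above. */
class PreflightChecks {

    // Check 1: conduit.mcp.server-url must name the MCP endpoint, not just the server root.
    static URI requireMcpEndpoint(String configured) {
        URI uri = URI.create(configured.trim());
        String scheme = uri.getScheme();
        if (!"http".equals(scheme) && !"https".equals(scheme)) {
            throw new IllegalArgumentException("conduit.mcp.server-url must be http(s): " + configured);
        }
        String path = uri.getPath() == null ? "" : uri.getPath();
        if (!path.endsWith("/mcp")) {
            throw new IllegalArgumentException("conduit.mcp.server-url should end with /mcp, got: " + configured);
        }
        return uri;
    }

    // Check 2: the configured model must be among the names listed by GET /api/tags.
    private static final Pattern NAME = Pattern.compile("\"name\"\\s*:\\s*\"([^\"]+)\"");

    static List<String> localModelNames(String tagsJson) {
        List<String> names = new ArrayList<>();
        Matcher m = NAME.matcher(tagsJson);
        while (m.find()) {
            names.add(m.group(1));
        }
        return names;
    }

    // Ollama treats a model id without a tag as ":latest".
    static String withDefaultTag(String model) {
        return model.contains(":") ? model : model + ":latest";
    }

    static boolean isModelAvailable(String configuredModelId, List<String> localNames) {
        String wanted = withDefaultTag(configuredModelId);
        return localNames.stream().map(PreflightChecks::withDefaultTag).anyMatch(wanted::equals);
    }

    public static void main(String[] args) throws Exception {
        System.out.println("MCP endpoint OK: " + requireMcpEndpoint("http://127.0.0.1:8090/mcp"));
        HttpRequest tags = HttpRequest.newBuilder(URI.create("http://localhost:11434/api/tags")).GET().build();
        String body = HttpClient.newHttpClient().send(tags, HttpResponse.BodyHandlers.ofString()).body();
        boolean ok = isModelAvailable("llama3.2", localModelNames(body));
        System.out.println(ok ? "llama3.2 is available" : "llama3.2 missing: run 'ollama pull llama3.2'");
    }
}
```

Calling the URL check from ConduitMcpBridge's constructor, before the transport is built, would turn a failed handshake into a readable configuration error. That placement is a suggestion, not what the repository does.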

What I would change for production

This repo is intentionally small. It has no retry policy around MCP calls, no tracing exporter, no rate limiting on /runs, and no timeout budget discussion beyond what the underlying clients already do. In a real system you still need to decide how much latency each MCP hop can spend, where secrets live if a tool crosses a trust boundary, and whether the MCP server stays next to the workflow or moves behind something stricter such as mTLS.
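As one example of a timeout budget, here is a plain-JDK wrapper that gives each attempt a deadline and the hop a fixed number of attempts. It is a sketch, not a LangChain4j or Quarkus feature; in a Quarkus app you would more likely reach for SmallRye Fault Tolerance and its @Timeout and @Retry annotations. HopBudget is a hypothetical name.

```java
import java.time.Duration;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.TimeoutException;
import java.util.function.Supplier;

/** Hypothetical per-hop budget: a timeout per attempt and a bounded number of attempts. */
class HopBudget {

    static <T> T callWithBudget(Supplier<T> call, Duration perAttempt, int maxAttempts) {
        if (maxAttempts < 1) {
            throw new IllegalArgumentException("maxAttempts must be at least 1");
        }
        RuntimeException last = null;
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            try {
                return CompletableFuture.supplyAsync(call).get(perAttempt.toMillis(), TimeUnit.MILLISECONDS);
            } catch (TimeoutException e) {
                // The slow attempt is abandoned, not interrupted; it may still finish in the background.
                last = new IllegalStateException("attempt " + attempt + " exceeded " + perAttempt, e);
            } catch (ExecutionException e) {
                // The call itself failed; keep its original exception when it is unchecked.
                Throwable cause = e.getCause();
                last = cause instanceof RuntimeException re ? re : new IllegalStateException(cause);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException("interrupted while waiting for the tool call", e);
            }
        }
        throw last;
    }

    public static void main(String[] args) {
        int[] calls = {0};
        // Hypothetical flaky tool: fails twice, then answers.
        String result = callWithBudget(() -> {
            if (++calls[0] < 3) {
                throw new IllegalStateException("transient failure " + calls[0]);
            }
            return "ABC-42";
        }, Duration.ofSeconds(2), 3);
        System.out.println(result + " after " + calls[0] + " attempts"); // ABC-42 after 3 attempts
    }
}
```

Retrying is only harmless here because both Conduit tools are pure functions. A tool with side effects would need idempotency before it gets a retry loop.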

The architectural rule I care about is simple: if a step is deterministic and cheap, keep it deterministic. Tool agents are useful because they let you keep that boundary visible instead of smuggling everything through a ChatModel just because the API can.

Prove it

Run the module tests first:

cd conduit-mcp-server

./mvnw verify -DskipITs=false

cd ../conduit-workflow

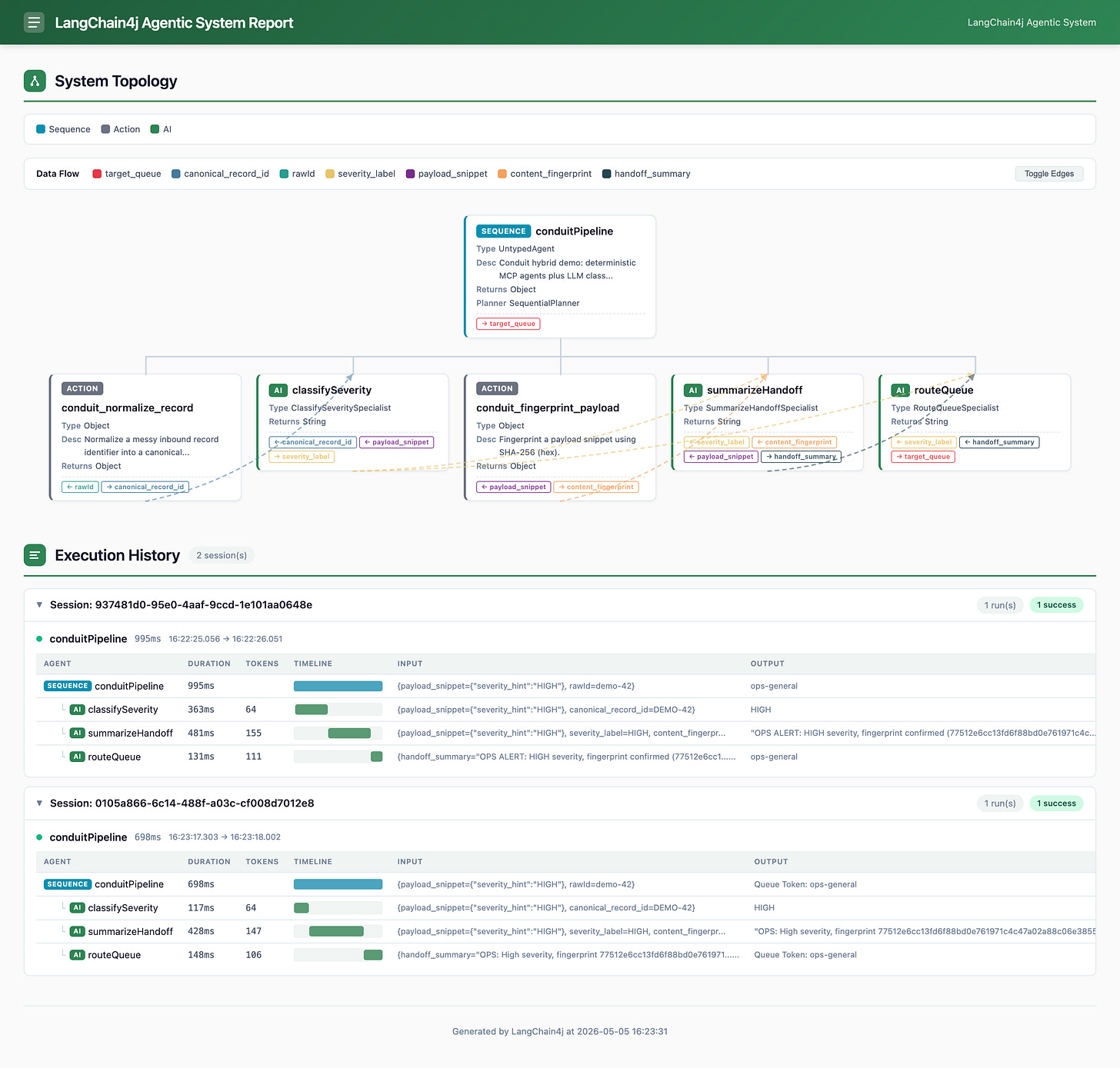

./mvnw verify -DskipITs=falseYou should get BUILD SUCCESS in both modules. The workflow test is doing more than a quick unit pass:

it renders

/topologybefore any run, which already proves the launched MCP server answered discovery callsit posts to

/runsand checks that the returnedtargetQueuecontains one ofops-general,ops-priority, orops-securityit reloads

/topologyafter the run and checks that both MCP tool names appear in the report
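The free-port trick the integration test relies on can be sketched with the JDK alone. This assumes Quarkus's default fast-jar layout (target/quarkus-app/quarkus-run.jar) and that the sibling module sits at ../conduit-mcp-server; McpServerLaunchPlan is hypothetical and not the repository's ConduitWorkflowIT.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.ServerSocket;
import java.nio.file.Path;
import java.util.List;

/** Hypothetical helper: free port, launch command, and server-url override for the MCP JVM. */
class McpServerLaunchPlan {

    static int freePort() {
        // Port 0 asks the OS for any free port; the socket is closed and the number reused.
        // Small race: another process could take the port before the MCP server binds it.
        try (ServerSocket socket = new ServerSocket(0)) {
            return socket.getLocalPort();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    // quarkus.http.port set as a system property overrides the 8090 from application.properties.
    static List<String> command(Path serverModule, int port) {
        Path jar = serverModule.resolve("target/quarkus-app/quarkus-run.jar");
        return List.of("java", "-Dquarkus.http.port=" + port, "-jar", jar.toString());
    }

    static String serverUrl(int port) {
        return "http://127.0.0.1:" + port + "/mcp";
    }

    public static void main(String[] args) {
        int port = freePort();
        System.out.println(command(Path.of("../conduit-mcp-server"), port));
        System.out.println("-Dconduit.mcp.server-url=" + serverUrl(port));
        // A real test would start the command with ProcessBuilder, wait until the port
        // accepts connections, and destroy the process when the tests finish.
    }
}
```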

Then run both apps in dev mode:

Terminal A:

cd conduit-mcp-server

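./mvnw quarkus:dev

Terminal B:

cd conduit-workflow

./mvnw quarkus:dev -Ddebug=5006

The -Ddebug=5006 flag keeps the second dev-mode JVM off the default debug port 5005, which the first app already uses. Now open http://localhost:8080/topology to check the topology page.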

And the workflow output:

curl -sS -X POST http://localhost:8080/runs \
  -H 'Content-Type: application/json' \
  -d '{"rawId":" demo-42 ","payloadSnippet":"{\"severity_hint\":\"HIGH\"}"}'

The curl call should return JSON like this:

{

"targetQueue": "Queue Token: ops-general"

}

The exact queue depends on what the model sees in the payload. The important part is that targetQueue contains one of the three valid routing tokens. The integration test uses loose matching here on purpose, because local models occasionally decide a one-token answer deserves extra decoration.
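If you want the API to hand back a clean token instead of relying on loose matching downstream, a small post-processing step is one option. This sketch is hypothetical and not part of the repository: it accepts a reply only when exactly one of the three valid queues appears in it.

```java
import java.util.List;
import java.util.Locale;
import java.util.Optional;

/** Hypothetical cleanup for the routing stage's reply. */
class QueueTokenExtractor {

    static final List<String> VALID_QUEUES = List.of("ops-general", "ops-priority", "ops-security");

    // Case-insensitive search; ambiguous replies (zero or several tokens) yield empty.
    static Optional<String> extract(String modelReply) {
        if (modelReply == null) {
            return Optional.empty();
        }
        String reply = modelReply.toLowerCase(Locale.ROOT);
        List<String> found = VALID_QUEUES.stream().filter(reply::contains).toList();
        return found.size() == 1 ? Optional.of(found.get(0)) : Optional.empty();
    }

    public static void main(String[] args) {
        System.out.println(extract("Queue Token: ops-general"));     // Optional[ops-general]
        System.out.println(extract("ops-priority or ops-security")); // Optional.empty
    }
}
```

An empty result is a decision point rather than an answer: you could fall back to ops-general, retry the stage, or surface an error, depending on how much a misroute costs.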

If you reload /topology now, the report also shows the individual run.

When to use this pattern

When you sketch a hybrid workflow, I would keep the split this plain:

- use a plain in-process @Tool when you do not need an MCP boundary at all
- use McpAgent when deterministic logic lives behind MCP but still belongs in the workflow graph
- use AgenticServices.agentBuilder(...).chatModel(...) when the stage genuinely needs language judgment, summarization, or fuzzy routing

Pick the wrong shape and you pay twice: latency where you did not need it, and nondeterminism where you actively did not want it.

Close the loop

Conduit is small enough to understand in one sitting, which is why I like it as a demo. The important part is the boundary. Normalization and hashing stay deterministic, the LLM only handles judgment, and the topology page shows both without pretending they are the same kind of work.