Make Agent Workflows Inspectable Before Production

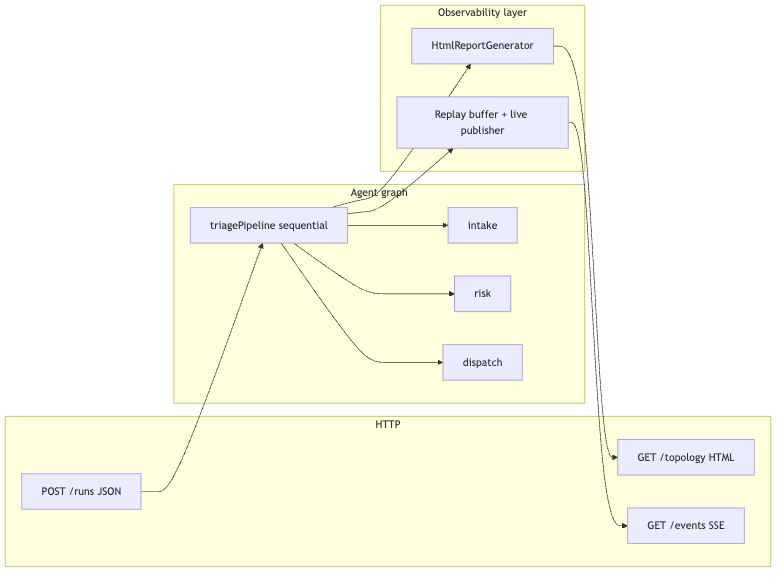

A small Quarkus service exposes live topology and recent executions, which makes agent systems easier to review, debug, and operationalize.

I do not trust agent diagrams that only exist in slides. They are usually accurate right up to the first refactor, the first extra specialist, or the first incident where someone asks which hop actually produced the bad answer.

Once the run is live, the runtime already knows the graph. It knows which agents were registered, which ones ran, how long they took, and what each hop returned. If that truth stays trapped inside the JVM, you are back to reading logs and rebuilding the call tree from memory.

This sample keeps the graph intentionally small: three specialists in a straight line, one AgentMonitor, one HTML report, and one SSE feed for recent runs. That is enough to show the useful part. I am not trying to build a planner here because the point is to expose runtime topology over ordinary HTTP, not to hide the topology behind a smarter demo.

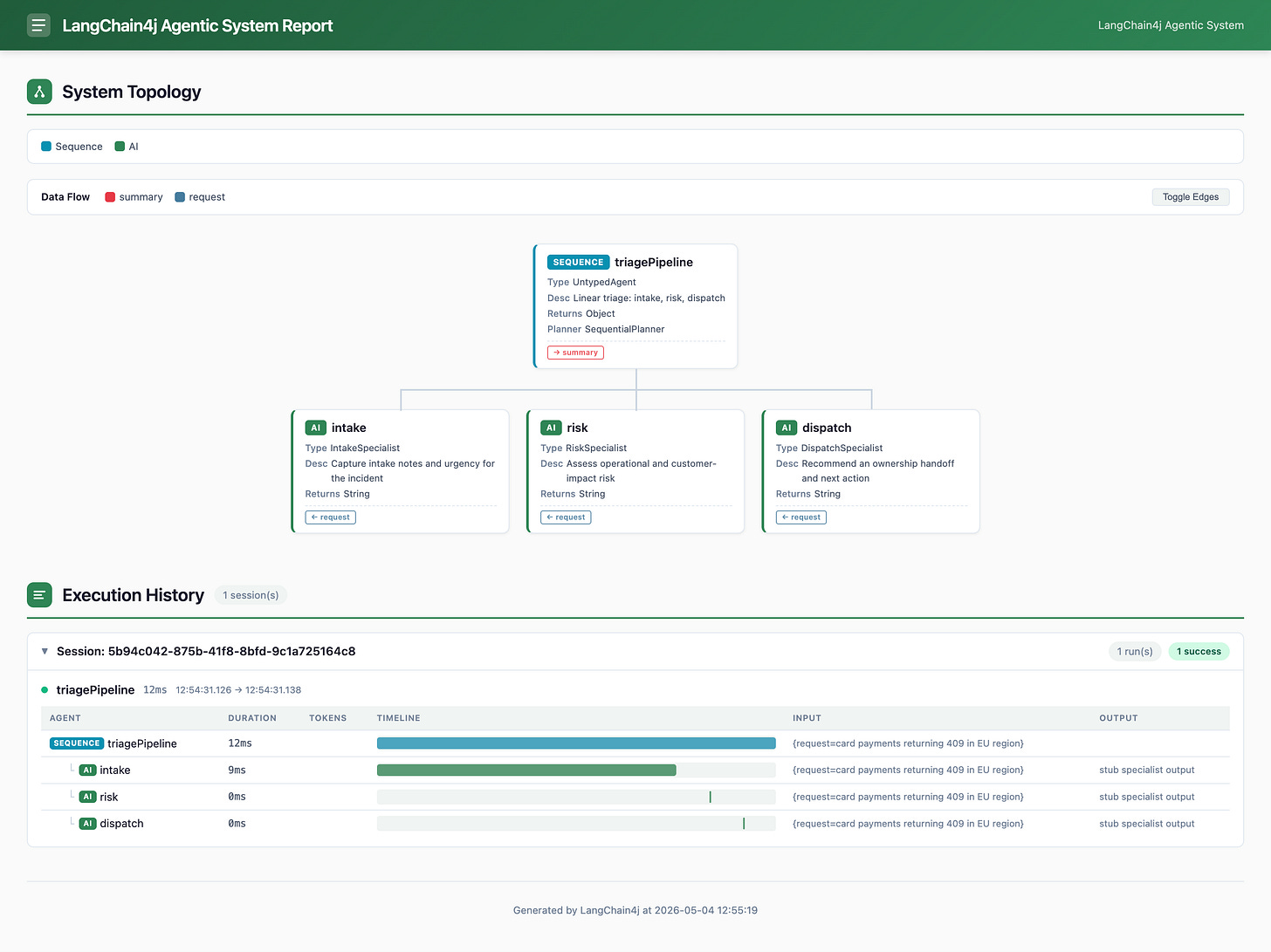

At the end you have a Quarkus app on Java 25 and Quarkus 3.35.1 with GET /topology serving the LangChain4j HTML report, POST /runs triggering the workflow once, and GET /events replaying recent runs as SSE. Automated verification uses a stub ChatModel, so the test path does not need Ollama.

Prerequisites

I am assuming you have already run at least one Quarkus service and at least one LangChain4j sample, and that editing application.properties does not need its own tutorial. If you type along, set aside about an hour. If you only want the topology trick and the HTTP shape, it takes less.

- Java 25
- Quarkus CLI on your PATH
- curl or a browser for manual checks
- Ollama only if you want live model calls in dev mode

Project setup

Create the project or start from my GitHub repository.

quarkus create app dev.topology:agent-topology \
    --package-name=dev.topology \
    --extensions=rest-jackson,quarkus-langchain4j-ollama \
    --no-code \
    -DjavaVersion=25

After that, the interesting part is not the generated boilerplate. It is the one extra dependency the Quarkus LangChain4j extension does not bring in: langchain4j-agentic, which contains the workflow builder and the HTML report generator. We also need REST Assured for the HTTP tests.

Add the following two dependencies manually to the pom.xml:

<dependencies>
    <dependency>
        <groupId>dev.langchain4j</groupId>
        <artifactId>langchain4j-agentic</artifactId>
        <version>1.13.1-beta23</version>
    </dependency>
    <dependency>
        <groupId>io.rest-assured</groupId>
        <artifactId>rest-assured</artifactId>
        <scope>test</scope>
    </dependency>
</dependencies>

Each dependency has one job:

- quarkus-rest-jackson gives us JSON HTTP endpoints and record serialization
- quarkus-langchain4j-ollama gives us the CDI ChatModel backed by Ollama
- langchain4j-agentic gives us the agentic workflow APIs and HtmlReportGenerator
- quarkus-junit and rest-assured cover the HTTP test path

Architecture

At the edge, the browser only talks HTTP. Inside the JVM, the sequence runs through three specialists. The monitor records both structure and execution, the topology endpoint renders that report as HTML, and the SSE endpoint replays recent runs as JSON.

Implementation

The specialist interfaces

Each specialist is a tiny typed agent. The part worth calling out is @CreatedAware. Without it, Quarkus LangChain4j does not know these interfaces are going to be instantiated through AgenticServices.agentBuilder(...), and the app fails during startup just when you thought the easy part was over.

// IntakeSpecialist.java
package dev.topology.agents;

import dev.langchain4j.agentic.Agent;
import dev.langchain4j.service.V;
import io.quarkiverse.langchain4j.CreatedAware;

/**
 * First hop: frames the customer signal for downstream specialists.
 *
 * <p>{@link CreatedAware} tells Quarkus LangChain4j build-time processing that this agentic interface is
 * instantiated via {@code AgenticServices.agentBuilder(...)} rather than as a plain {@code AiService}.
 */
@CreatedAware
public interface IntakeSpecialist {

    @Agent("Capture intake notes and urgency for the incident")
    String intake(@V("request") String request);
}

// RiskSpecialist.java
package dev.topology.agents;

import dev.langchain4j.agentic.Agent;
import dev.langchain4j.service.V;
import io.quarkiverse.langchain4j.CreatedAware;

@CreatedAware
public interface RiskSpecialist {

    @Agent("Assess operational and customer-impact risk")
    String assessRisk(@V("request") String request);
}

// DispatchSpecialist.java
package dev.topology.agents;

import dev.langchain4j.agentic.Agent;
import dev.langchain4j.service.V;
import io.quarkiverse.langchain4j.CreatedAware;

@CreatedAware
public interface DispatchSpecialist {

    @Agent("Recommend an ownership handoff and next action")
    String dispatch(@V("request") String request);
}

I like this shape because each interface says one thing clearly. The sample does not need a dozen prompt methods or a pretend domain model just to make the graph look serious.

Build the root workflow once

TriageTopology owns two things: the three-agent sequence and the one AgentMonitor attached to it. That single monitor feeds both the topology page and the execution timeline after each run.

package dev.topology.agents;

import java.util.Map;

import jakarta.annotation.PostConstruct;
import jakarta.enterprise.context.ApplicationScoped;
import jakarta.inject.Inject;

import dev.langchain4j.agentic.AgenticServices;
import dev.langchain4j.agentic.UntypedAgent;
import dev.langchain4j.agentic.observability.AgentMonitor;
import dev.langchain4j.model.chat.ChatModel;

/**
 * Small sequential specialist chain with a single {@link AgentMonitor} attached to the root workflow.
 * The monitor feeds {@link dev.langchain4j.agentic.observability.HtmlReportGenerator}.
 */
@ApplicationScoped
public class TriageTopology {

    private final ChatModel chatModel;
    private final AgentMonitor monitor;
    private UntypedAgent pipeline;

    @Inject
    public TriageTopology(ChatModel chatModel) {
        this.chatModel = chatModel;
        this.monitor = new AgentMonitor();
    }

    @PostConstruct
    void buildPipeline() {
        IntakeSpecialist intake =
                AgenticServices.agentBuilder(IntakeSpecialist.class).chatModel(chatModel).name("intake").build();
        RiskSpecialist risk =
                AgenticServices.agentBuilder(RiskSpecialist.class).chatModel(chatModel).name("risk").build();
        DispatchSpecialist dispatch =
                AgenticServices.agentBuilder(DispatchSpecialist.class).chatModel(chatModel).name("dispatch").build();

        pipeline = AgenticServices.sequenceBuilder()
                .subAgents(intake, risk, dispatch)
                .listener(monitor)
                .name("triagePipeline")
                .description("Linear triage: intake, risk, dispatch")
                .outputKey("summary")
                .build();
    }

    public AgentMonitor monitor() {
        return monitor;
    }

    /**
     * Runs the whole pipeline once; LangChain4j records invocations on {@link #monitor()}.
     */
    public String run(String userRequest) {
        Object out = pipeline.invoke(Map.of("request", userRequest));
        return out != null ? out.toString() : "";
    }
}

The important decision is the listener on the root sequence, not on each child. That keeps the topology report and the execution report tied to the same object, which means GET /topology can stay boring in the best way.
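To make the root-listener point concrete without LangChain4j, here is a library-free toy model. Step, Hop, and RootSequence are hypothetical names, not the AgentMonitor API; the sketch only shows why a single recorder on the root sees every child hop (name, duration, output) and can describe the graph before anything has run. Like the sample, every step receives the original request rather than the previous step's output.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.UnaryOperator;

// Toy model only: these types are hypothetical and are not LangChain4j's AgentMonitor API.
public final class ToyTopology {

    // One named specialist: takes the request, returns its output.
    record Step(String name, UnaryOperator<String> body) {}

    // One recorded hop: which step ran, how long it took, and what it returned.
    record Hop(String name, long nanos, String output) {}

    // A linear sequence that owns a single execution log, like a listener attached to the root.
    // Single-threaded on purpose; the log is a plain ArrayList.
    static final class RootSequence {
        private final String name;
        private final List<Step> steps;
        private final List<Hop> log = new ArrayList<>();

        RootSequence(String name, List<Step> steps) {
            this.name = name;
            this.steps = List.copyOf(steps);
        }

        // The topology is known before any run: the root name plus the registered step names.
        List<String> topology() {
            List<String> names = new ArrayList<>();
            names.add(name);
            for (Step step : steps) {
                names.add(step.name());
            }
            return names;
        }

        // The root records every child hop, so the children need no recorder of their own.
        void run(String request) {
            for (Step step : steps) {
                long start = System.nanoTime();
                String output = step.body().apply(request);
                log.add(new Hop(step.name(), System.nanoTime() - start, output));
            }
        }

        List<Hop> executions() {
            return List.copyOf(log);
        }
    }

    public static void main(String[] args) {
        RootSequence pipeline = new RootSequence("triagePipeline", List.of(
                new Step("intake", r -> "stub specialist output"),
                new Step("risk", r -> "stub specialist output"),
                new Step("dispatch", r -> "stub specialist output")));
        System.out.println(pipeline.topology()); // prints [triagePipeline, intake, risk, dispatch]
        pipeline.run("card payments returning 409 in EU region");
        pipeline.executions().forEach(h -> System.out.println(h.name() + " -> " + h.output()));
    }
}
```

The real AgentMonitor does considerably more, but the ownership question is the same: one object sees both the shape and the hops.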

Serve the topology as plain HTML

The topology endpoint is deliberately dull. HtmlReportGenerator.generateReport(...) already returns a full HTML document, so we just hand it back as text/html.

package dev.topology.api;

import jakarta.enterprise.context.ApplicationScoped;
import jakarta.inject.Inject;
import jakarta.ws.rs.GET;
import jakarta.ws.rs.Path;
import jakarta.ws.rs.Produces;
import jakarta.ws.rs.core.MediaType;

import dev.langchain4j.agentic.observability.HtmlReportGenerator;
import dev.topology.agents.TriageTopology;

@Path("/topology")
@ApplicationScoped
@Produces(MediaType.TEXT_HTML)
public class TopologyResource {

    private final TriageTopology triageTopology;

    @Inject
    public TopologyResource(TriageTopology triageTopology) {
        this.triageTopology = triageTopology;
    }

    @GET
    public String topologyHtml() {
        return HtmlReportGenerator.generateReport(triageTopology.monitor());
    }
}

Before the first run, the report already shows the registered graph and a "No executions recorded." message. After a run, the same page adds execution rows with timings, inputs, and per-agent outputs. That is exactly why I prefer exposing the runtime view directly instead of redrawing it by hand.

Trigger the workflow over HTTP

The run endpoint uses two small records for the payload and then does three things in order: reject blank requests, invoke the sequence, and publish a short event for replay and live SSE subscribers.

// RunRequest.java
package dev.topology.api;

public record RunRequest(String request) {}

// RunResponse.java
package dev.topology.api;

public record RunResponse(String summary) {}

// RunResource.java
package dev.topology.api;

import jakarta.enterprise.context.ApplicationScoped;
import jakarta.inject.Inject;
import jakarta.ws.rs.Consumes;
import jakarta.ws.rs.POST;
import jakarta.ws.rs.Path;
import jakarta.ws.rs.Produces;
import jakarta.ws.rs.WebApplicationException;
import jakarta.ws.rs.core.MediaType;
import jakarta.ws.rs.core.Response;

import dev.topology.agents.TriageTopology;
import dev.topology.stream.RunEventPublisher;

@Path("/runs")
@ApplicationScoped
@Produces(MediaType.APPLICATION_JSON)
@Consumes(MediaType.APPLICATION_JSON)
public class RunResource {

    private static final int SNIPPET_MAX = 200;

    private final TriageTopology triageTopology;
    private final RunEventPublisher runEventPublisher;

    @Inject
    public RunResource(TriageTopology triageTopology, RunEventPublisher runEventPublisher) {
        this.triageTopology = triageTopology;
        this.runEventPublisher = runEventPublisher;
    }

    @POST
    public RunResponse run(RunRequest request) {
        if (request == null || request.request() == null || request.request().isBlank()) {
            throw new WebApplicationException(
                    Response.status(Response.Status.BAD_REQUEST)
                            .entity("{\"error\":\"request must be non-blank\"}")
                            .build());
        }
        String summary = triageTopology.run(request.request());
        String snippet = snippet(request.request());
        runEventPublisher.publishSummary(snippet, summary);
        return new RunResponse(summary);
    }

    private static String snippet(String request) {
        String t = request.trim();
        if (t.length() <= SNIPPET_MAX) {
            return t;
        }
        return t.substring(0, SNIPPET_MAX) + "…";
    }
}

The 200-character snippet is not cosmetic. It keeps the SSE replay useful without turning one pasted incident ticket into permanent in-memory confetti.
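One edge case in snippet(...): substring(0, SNIPPET_MAX) counts UTF-16 chars, so a request with an emoji or another supplementary character right at the cut can end in half of a surrogate pair, which cannot be encoded as valid UTF-8 on the wire. A minimal sketch of a code-point-aware variant, assuming a limit measured in code points rather than chars is acceptable; the Snippets class name is hypothetical:

```java
public final class Snippets {

    static final int SNIPPET_MAX = 200;
    static final String ELLIPSIS = "\u2026"; // the same ellipsis character RunResource appends

    // Same contract as RunResource.snippet, but the limit counts Unicode code points instead of
    // UTF-16 chars, so a supplementary character such as an emoji is never cut in half.
    static String snippet(String request, int max) {
        String t = request.trim();
        if (t.codePointCount(0, t.length()) <= max) {
            return t;
        }
        // Char index just past the max-th code point; it always lands on a code point boundary.
        int end = t.offsetByCodePoints(0, max);
        return t.substring(0, end) + ELLIPSIS;
    }

    public static void main(String[] args) {
        System.out.println(snippet("  card payments returning 409  ", SNIPPET_MAX)); // prints: card payments returning 409
        String withEmoji = new StringBuilder("ab").appendCodePoint(0x1F600).append("cd").toString();
        System.out.println(snippet(withEmoji, 3)); // keeps the whole emoji, then the ellipsis
    }
}
```

The trade-off is that 200 code points can be up to 400 chars in memory, which is still far below anything the replay buffer would notice.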

One honest detail is worth calling out here: with the current three-hop sequence, the root summary is empty. The sequence returns whatever ends up under its summary output key, and none of the three specialists writes to that key. The useful output in this sample is the per-agent trace in the topology report and the event stream, not the HTTP body from POST /runs. If you want a user-facing summary later, add an explicit aggregation step or change the response contract so the endpoint returns the part you actually care about.
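The aggregation itself is small. One route, which I have not verified against langchain4j-agentic 1.13.1-beta23, is to give the dispatch agent an outputKey("summary") so the sequence has a value to return, assuming the agent builder accepts the same outputKey(...) setting the sequence builder uses. An explicit aggregation step would instead combine all three outputs. A library-free sketch of that combining logic, where the hypothetical aggregate(...) receives outputs keyed by agent name in execution order:

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.StringJoiner;

public final class SummaryAggregation {

    // Hypothetical aggregation step: one line per specialist, in the order the sequence ran them.
    // Blank or missing outputs are skipped so the summary never contains empty "name:" lines.
    static String aggregate(Map<String, String> outputsInOrder) {
        StringJoiner summary = new StringJoiner("\n");
        outputsInOrder.forEach((agent, output) -> {
            if (output != null && !output.isBlank()) {
                summary.add(agent + ": " + output.strip());
            }
        });
        return summary.toString();
    }

    public static void main(String[] args) {
        Map<String, String> outputs = new LinkedHashMap<>(); // LinkedHashMap keeps execution order
        outputs.put("intake", "stub specialist output");
        outputs.put("risk", "stub specialist output");
        outputs.put("dispatch", "stub specialist output");
        System.out.println(aggregate(outputs));
    }
}
```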

Replay recent runs over SSE

There are two jobs here: replay recent events to new subscribers and fan out new events to everyone already connected. The deque handles replay. SubmissionPublisher handles live updates. Multi.createBy().concatenating().streams(past, tail) is the whole trick: past first, then live.

// RunEvent.java
package dev.topology.stream;

/**
 * One completed pipeline execution surfaced on the SSE feed.
 */
public record RunEvent(String requestSnippet, String summary) {}

// RunEventPublisher.java
package dev.topology.stream;

import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ConcurrentLinkedDeque;
import java.util.concurrent.Executor;
import java.util.concurrent.Executors;
import java.util.concurrent.Flow;
import java.util.concurrent.SubmissionPublisher;

import jakarta.enterprise.context.ApplicationScoped;
import jakarta.inject.Inject;

import org.eclipse.microprofile.config.inject.ConfigProperty;

import com.fasterxml.jackson.core.JsonProcessingException;
import com.fasterxml.jackson.databind.ObjectMapper;

import io.smallrye.mutiny.Multi;

/**
 * Bounded replay buffer plus a shared {@link SubmissionPublisher} so multiple SSE clients see new runs.
 */
@ApplicationScoped
public class RunEventPublisher {

    private final int maxEvents;
    private final ObjectMapper objectMapper;
    private final ConcurrentLinkedDeque<String> ring = new ConcurrentLinkedDeque<>();
    private final SubmissionPublisher<String> live;
    private final Executor executor = Executors.newVirtualThreadPerTaskExecutor();

    @Inject
    public RunEventPublisher(
            ObjectMapper objectMapper,
            @ConfigProperty(name = "topology.run-events.max", defaultValue = "50") int maxEvents) {
        this.objectMapper = objectMapper;
        this.maxEvents = maxEvents;
        this.live = new SubmissionPublisher<String>(executor, Flow.defaultBufferSize());
    }

    public void publishSummary(String requestSnippet, String summary) {
        try {
            String line = objectMapper.writeValueAsString(new RunEvent(requestSnippet, summary));
            // Drop the oldest entries so the replay buffer stays at maxEvents.
            while (ring.size() >= maxEvents) {
                ring.pollFirst();
            }
            ring.offerLast(line);
            live.submit(line);
        } catch (JsonProcessingException e) {
            throw new IllegalStateException(e);
        }
    }

    /**
     * Replay recent JSON lines, then stream live submissions (same format as SSE elements).
     */
    public Multi<String> stream() {
        List<String> snapshot = new ArrayList<>(ring);
        Multi<String> past = Multi.createFrom().iterable(snapshot);
        Multi<String> tail = Multi.createFrom().publisher(live);
        return Multi.createBy().concatenating().streams(past, tail);
    }
}

// EventsResource.java
package dev.topology.api;

import jakarta.enterprise.context.ApplicationScoped;
import jakarta.inject.Inject;
import jakarta.ws.rs.GET;
import jakarta.ws.rs.Path;
import jakarta.ws.rs.Produces;
import jakarta.ws.rs.core.MediaType;

import org.jboss.resteasy.reactive.RestStreamElementType;

import io.smallrye.mutiny.Multi;

import dev.topology.stream.RunEventPublisher;

@Path("/events")
@ApplicationScoped
public class EventsResource {

    private final RunEventPublisher runEventPublisher;

    @Inject
    public EventsResource(RunEventPublisher runEventPublisher) {
        this.runEventPublisher = runEventPublisher;
    }

    @GET
    @Produces(MediaType.SERVER_SENT_EVENTS)
    @RestStreamElementType(MediaType.APPLICATION_JSON)
    public Multi<String> events() {
        return runEventPublisher.stream();
    }
}

I like this shape because it stays honest about what we are streaming. Each SSE element is already a JSON string in the exact shape we want, so there is no second transformation layer pretending to add value.
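Two caveats on RunEventPublisher if it moves toward production. The size check and offerLast(...) are separate steps, so concurrent POST /runs calls can briefly leave more than maxEvents entries, and ConcurrentLinkedDeque.size() is not a constant-time operation. A minimal alternative sketch, assuming the replay buffer stays small enough that a plain lock is fine; the ReplayBuffer name is hypothetical:

```java
import java.util.ArrayDeque;
import java.util.List;

// Hypothetical replacement for the ring in RunEventPublisher: trim and append happen under one lock,
// so the buffer never holds more than maxEvents lines, even with concurrent publishers.
public final class ReplayBuffer {

    private final int maxEvents;
    private final ArrayDeque<String> lines = new ArrayDeque<>();

    public ReplayBuffer(int maxEvents) {
        if (maxEvents < 1) {
            throw new IllegalArgumentException("maxEvents must be positive");
        }
        this.maxEvents = maxEvents;
    }

    public synchronized void add(String line) {
        if (lines.size() == maxEvents) {
            lines.pollFirst(); // drop the oldest event to make room
        }
        lines.addLast(line);
    }

    // Copy under the same lock, so a new subscriber never sees a half-trimmed buffer.
    public synchronized List<String> snapshot() {
        return List.copyOf(lines);
    }

    public static void main(String[] args) {
        ReplayBuffer buffer = new ReplayBuffer(3);
        for (int i = 1; i <= 5; i++) {
            buffer.add("event-" + i);
        }
        System.out.println(buffer.snapshot()); // prints [event-3, event-4, event-5]
    }
}
```

Separately, SubmissionPublisher.submit(...) blocks while any subscriber's buffer is full, so one stalled SSE client could hold up POST /runs. offer(item, onDrop) is the non-blocking variant if dropping a live update is acceptable, since a reconnecting client still gets the replay.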

Keep the test path deterministic

The test path swaps the live model for a stub and then exercises the HTTP surface. The class name matters here: TopologyAndRunsIT is still a @QuarkusTest, but the IT suffix means Maven Failsafe runs it rather than Surefire, so the Maven command has to match that.

// TopologyStubChatModel.java
package dev.topology.testsupport;

import jakarta.enterprise.context.ApplicationScoped;
import jakarta.enterprise.inject.Alternative;

import dev.langchain4j.data.message.AiMessage;
import dev.langchain4j.model.chat.ChatModel;
import dev.langchain4j.model.chat.request.ChatRequest;
import dev.langchain4j.model.chat.response.ChatResponse;

/**
 * Deterministic model for {@code @QuarkusTest} so the agentic graph runs without Ollama.
 *
 * <p>Deliberately no {@code @Priority}: a prioritized alternative is enabled application-wide and would
 * replace Ollama in dev mode too. Without it, this bean is only active where
 * {@code quarkus.arc.selected-alternatives} names it. For the dev-mode flag to find it, the class
 * has to be on the main classpath, not only under src/test.
 */
@Alternative
@ApplicationScoped
public class TopologyStubChatModel implements ChatModel {

    @Override
    public ChatResponse chat(ChatRequest request) {
        return ChatResponse.builder()
                .aiMessage(AiMessage.from("stub specialist output"))
                .build();
    }
}

// TopologyAndRunsIT.java
package dev.topology.api;

import static org.hamcrest.CoreMatchers.containsString;
import static org.hamcrest.Matchers.equalTo;
import static org.junit.jupiter.api.Assertions.assertTrue;

import java.io.InputStream;
import java.net.HttpURLConnection;
import java.net.URI;

import org.junit.jupiter.api.Test;

import io.quarkus.test.common.http.TestHTTPResource;
import io.quarkus.test.junit.QuarkusTest;
import io.restassured.RestAssured;
import io.restassured.http.ContentType;

@QuarkusTest
class TopologyAndRunsIT {

    @TestHTTPResource("/events")
    URI eventsEndpoint;

    @Test
    void topologyReturnsHtmlBeforeAnyRun() {
        String html = RestAssured.given()
                .when()
                .get("/topology")
                .then()
                .statusCode(200)
                .contentType(ContentType.HTML)
                .extract()
                .body()
                .asString();
        assertTrue(html.toLowerCase().contains("<html") || html.toLowerCase().contains("<!doctype html"),
                "Expected HTML document markers");
    }

    @Test
    void runPipelineThenTopologyShowsExecution() {
        RestAssured.given()
                .contentType(ContentType.JSON)
                .body("{\"request\":\"payment webhook retries exhausting DLQ budget\"}")
                .when()
                .post("/runs")
                .then()
                .statusCode(200)
                .body("summary", equalTo(""));

        RestAssured.given()
                .when()
                .get("/topology")
                .then()
                .statusCode(200)
                .body(containsString("triagePipeline"))
                .body(containsString("stub specialist output"));
    }

    @Test
    void eventsStreamRegistersAndReturnsInitialBytesAfterRun() throws Exception {
        RestAssured.given()
                .contentType(ContentType.JSON)
                .body("{\"request\":\"SSE smoke\"}")
                .post("/runs");

        HttpURLConnection connection = (HttpURLConnection) eventsEndpoint.toURL().openConnection();
        connection.setRequestMethod("GET");
        connection.setReadTimeout(5_000);
        connection.connect();
        assertTrue(connection.getContentType() != null && connection.getContentType().contains("text/event-stream"),
                "Expected text/event-stream");
        try (InputStream in = connection.getInputStream()) {
            byte[] buf = new byte[16_384];
            int n = in.read(buf);
            assertTrue(n > 0, "Expected initial SSE bytes");
        } finally {
            connection.disconnect();
        }
    }
}

The test class proves the three user-facing behaviors we care about: HTML is served, a run mutates the topology report, and the SSE endpoint opens and starts streaming. I keep the exact payload shape in the manual curl -N step below because open streams are easier to prove with one real client than with a pile of brittle string assertions. That is still much better than telling readers to trust the screenshot in your blog post. We have all seen how that movie ends.

Configuration

These are the two properties files:

# src/main/resources/application.properties

# ---- Ollama (local hands-on; tests replace the model with TopologyStubChatModel) ----
quarkus.langchain4j.ollama.base-url=${OLLAMA_BASE_URL:http://localhost:11434}
quarkus.langchain4j.ollama.chat-model.model-id=${OLLAMA_MODEL:qwen2.5:7b}
quarkus.langchain4j.ollama.chat-model.log-requests=true
quarkus.langchain4j.ollama.chat-model.log-responses=true

# Small window is enough: each specialist is one model call in this demo
quarkus.langchain4j.chat-memory.memory-window.max-messages=16
quarkus.langchain4j.ai-service.max-tool-executions=8

# ---- Demo-owned: SSE replay buffer (JSON lines) ----
topology.run-events.max=${TOPOLOGY_RUN_EVENTS_MAX:50}

# Request body limit for POST /runs (Quarkus default is generous; pin explicitly for the article)
quarkus.http.limits.max-body-size=64K

# Log level for the demo's own packages; set it to DEBUG in dev when you want more detail
quarkus.log.category."dev.topology".level=INFO

# src/test/resources/application.properties
%test.quarkus.arc.selected-alternatives=dev.topology.testsupport.TopologyStubChatModel

The important knobs are:

- OLLAMA_BASE_URL and OLLAMA_MODEL - These control live dev mode. Wrong host, wrong port, or a missing model shows up on the first real call to /runs.
- quarkus.langchain4j.ollama.chat-model.log-requests and log-responses - Useful while you are wiring the sample. Turn them off once the graph is behaving and you no longer need model chatter in the logs.
- topology.run-events.max - This is the replay budget for SSE. Raise it and reconnecting clients see more history. Raise it too far and you are just parking more strings in memory.
- quarkus.http.limits.max-body-size - This keeps POST /runs from becoming a giant paste bucket with a heap attached.
- %test.quarkus.arc.selected-alternatives - This is what makes the automated path deterministic. Without it, your tests depend on Ollama availability, which is a boring way to make CI flaky.

The memory window and tool-execution cap are just sane ceilings. This sample does not lean on them hard, but I would rather keep the guardrails near the example than remember them after the example grows teeth.

Verification

Use the command that actually runs the integration-style test:

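./mvnw verify -DskipITs=false

If you only run ./mvnw test, Maven never reaches TopologyAndRunsIT. Surefire's default includes do not match the IT suffix, Failsafe only runs in the later integration-test and verify phases, and the generated Quarkus pom skips integration tests unless you pass -DskipITs=false. The IT suffix is a clue, not decoration.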

For a deterministic local smoke test without Ollama, start dev mode with the same alternative the test profile uses:

./mvnw quarkus:dev \
    -Dquarkus.arc.selected-alternatives=dev.topology.testsupport.TopologyStubChatModel

Then check the whole path:

Open http://localhost:8080/topology and confirm the graph renders with triagePipeline, intake, risk, and dispatch, plus the "No executions recorded." message before the first run.

Trigger one run:

curl -s -X POST http://localhost:8080/runs \
  -H 'Content-Type: application/json' \
  -d '{"request":"card payments returning 409 in EU region"}'

With the current minimal sequence, the response is:

{"summary":""}

That empty field is normal for this sample because the root workflow does not aggregate the three specialist outputs into a single summary value.

Reload http://localhost:8080/topology and confirm the execution section now lists one triagePipeline run and three child rows. With the stub model, each child output reads "stub specialist output".

Open the SSE feed:

curl -N http://localhost:8080/events

You should see elements shaped like this:

data:{"requestSnippet":"card payments returning 409 in EU region","summary":""}

If you switch back to live Ollama in dev mode, the HTTP shape stays the same. What changes is the per-agent output in the topology report; the event stream keeps the same shape, with an empty summary until you add an aggregation step.
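If you later want a check that asserts on the payload instead of eyeballing curl -N, the data lines are easy to pull out of raw event-stream text. A minimal sketch following the SSE parsing rules (a blank line ends an event, one optional space after data: is dropped, several data: lines in one event join with a newline, an event without a closing blank line is discarded); SseData.extractData is a hypothetical helper, not a Quarkus or REST Assured API:

```java
import java.util.ArrayList;
import java.util.List;

public final class SseData {

    // Returns the data payload of every complete event in raw text/event-stream content.
    // Simplified: only "data" fields are read; event, id, retry fields and ":" comment lines are ignored.
    static List<String> extractData(String raw) {
        List<String> events = new ArrayList<>();
        StringBuilder data = null; // null means no data line seen yet for the current event
        for (String line : raw.split("\r\n|\r|\n", -1)) {
            if (line.isEmpty()) {
                // A blank line dispatches the current event, if it carried any data.
                if (data != null) {
                    events.add(data.toString());
                    data = null;
                }
            } else if (line.startsWith("data:")) {
                String value = line.substring("data:".length());
                if (value.startsWith(" ")) {
                    value = value.substring(1); // the format strips exactly one leading space
                }
                if (data == null) {
                    data = new StringBuilder(value);
                } else {
                    data.append('\n').append(value);
                }
            }
        }
        // Anything left in `data` had no terminating blank line, so it is incomplete and dropped.
        return events;
    }

    public static void main(String[] args) {
        String raw = "data:{\"requestSnippet\":\"card payments returning 409 in EU region\",\"summary\":\"\"}\n\n";
        System.out.println(extractData(raw));
    }
}
```

Pair it with any HTTP client that can read a bounded chunk of the stream; the parser itself does not care where the text came from.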

Conclusion

You now serve the same truth the JVM saw instead of the diagram someone forgot to update after the second refactor. The graph stays small, the HTTP surface stays boring, and the useful part is exactly where it should be: in the runtime trace, not in someone’s memory.