Why AI Coding Agents Still Need Clear Specs

Learn why vague prompts create hidden rework, how acceptance criteria help, and when BDD-style tests are worth the upfront effort.

There’s a mental model spreading through the developer community right now that goes something like this: agents are smart enough to figure things out, so heavy upfront specification is bureaucratic overhead you don’t need anymore. Just describe the goal loosely, let the agent explore, and correct as you go. Fast. Flexible. Modern.

It’s wrong. Not because agents aren’t capable — they often are — but because the accounting is off. You’re not eliminating cost. You’re deferring it, fragmenting it, and making it harder to see.

Let’s run the actual ledger.

Two Poles, Two Hidden Costs

At one extreme: minimal specification. You describe intent loosely, agents interpret freely, and work begins immediately. The upfront cost in human effort is near zero. What you don’t immediately see is what accumulates downstream: correction loops, each carrying token cost plus human re-engagement time. Review cycles where a human acts as the oracle for every output — deciding whether what the agent produced is what was actually meant. Rework when it wasn’t.

At the other extreme: full formal specification. TDD, BDD, Gherkin scenarios, acceptance criteria locked down before a single line of code runs. The upfront human effort is real and visible. But the downstream verification cost looks fundamentally different, because the tests are the oracle. Pass or fail. The human doesn’t need to personally evaluate every output — the spec does it automatically, repeatedly, without fatigue.

What you’re actually trading off is when you pay and in what currency. Minimal spec spends tokens immediately and back-loads human judgment into review and correction loops. Heavy spec front-loads human effort and back-loads almost nothing, because the cost of automated verification stays nearly flat as runs accumulate.

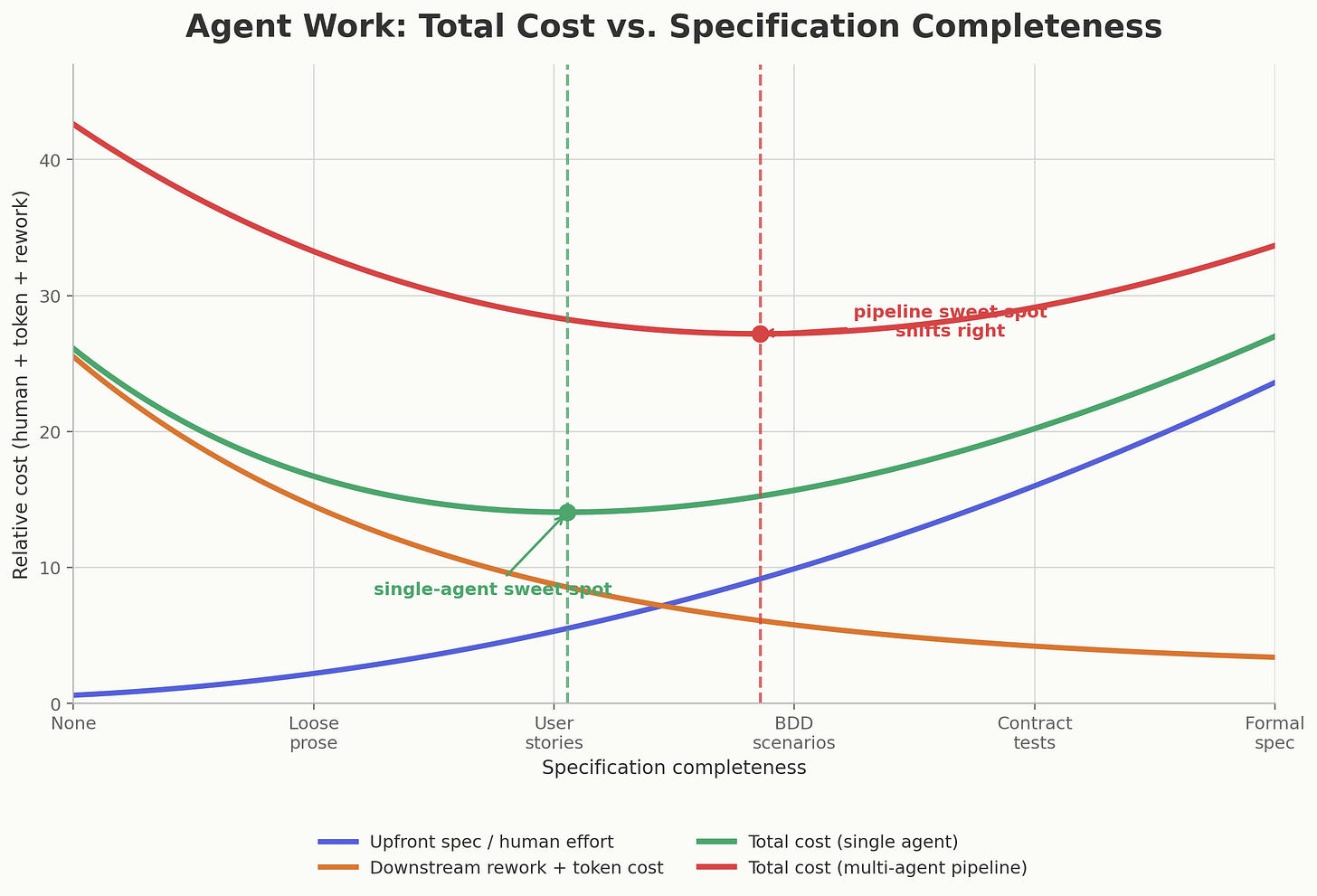

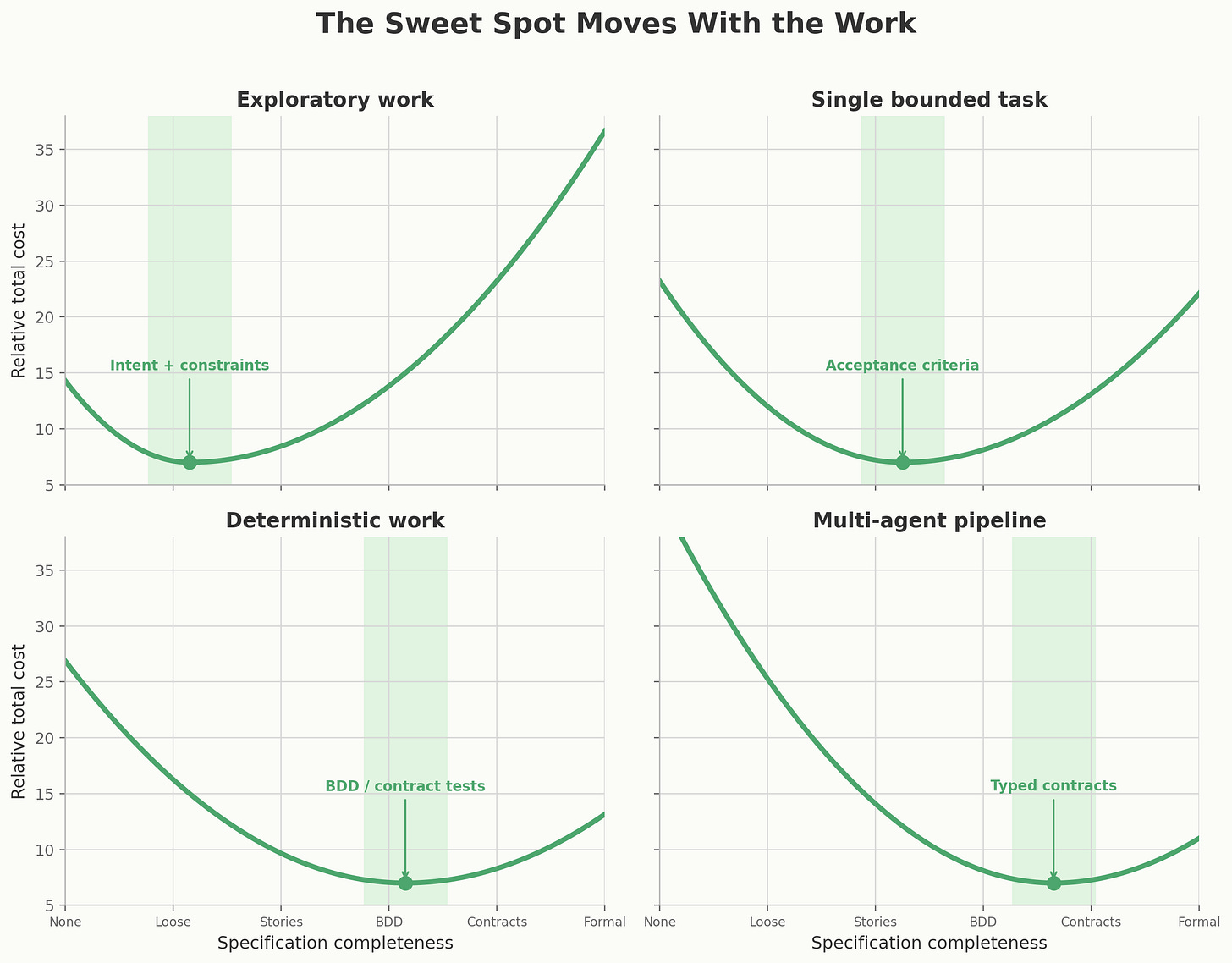

Plotted against specification completeness, total cost traces a U-shaped curve. The minimum of that curve, the sweet spot, sits somewhere around well-structured acceptance criteria or BDD scenarios: not at zero specification, and not at a 40-page formal requirements document.

The trap is visible once you plot the whole ledger. Minimal specification looks cheap only before downstream rework enters the chart. Multi-agent work pushes the minimum further right because drift compounds across handoffs.
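A toy model can make the shape of that curve concrete. The sketch below is illustrative only: the quadratic cost terms, the coefficients, and the way handoffs multiply rework are assumptions chosen to produce a U-shape, not measurements.

```python
def total_cost(spec_level: float, handoffs: int = 0) -> float:
    """Toy total cost for a spec completeness level between 0.0 and 1.0.

    All coefficients are made-up illustration values, not data.
    """
    upfront = 10.0 * spec_level ** 2              # human effort to write the spec
    rework = 12.0 * (1.0 - spec_level) ** 2       # correction loops and review
    return upfront + rework * (1 + handoffs)      # assumed: drift scales with handoffs


def cheapest_level(handoffs: int = 0, steps: int = 100) -> float:
    """Grid-search the spec level with the lowest toy total cost."""
    levels = [i / steps for i in range(steps + 1)]
    return min(levels, key=lambda level: total_cost(level, handoffs))


single_agent = cheapest_level(handoffs=0)
pipeline = cheapest_level(handoffs=3)
print(f"single agent minimum: {single_agent:.2f}, three handoffs: {pipeline:.2f}")
```

Under these assumptions the minimum sits in the interior of the range, and adding handoffs moves it toward more specification. Different coefficients would move the numbers, but any model where upfront effort rises and rework falls with completeness has the same basic shape.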

The Old Problem Was Always the Spec

The real challenge in software engineering has always been specification.

Not typing. Not syntax. Not even architecture in the abstract. The hard part was agreeing what should exist, what should never happen, which tradeoffs matter, what the system is allowed to forget, and what “done” means when the world is messier than the ticket.

Agents don’t remove that problem. They make it more visible.

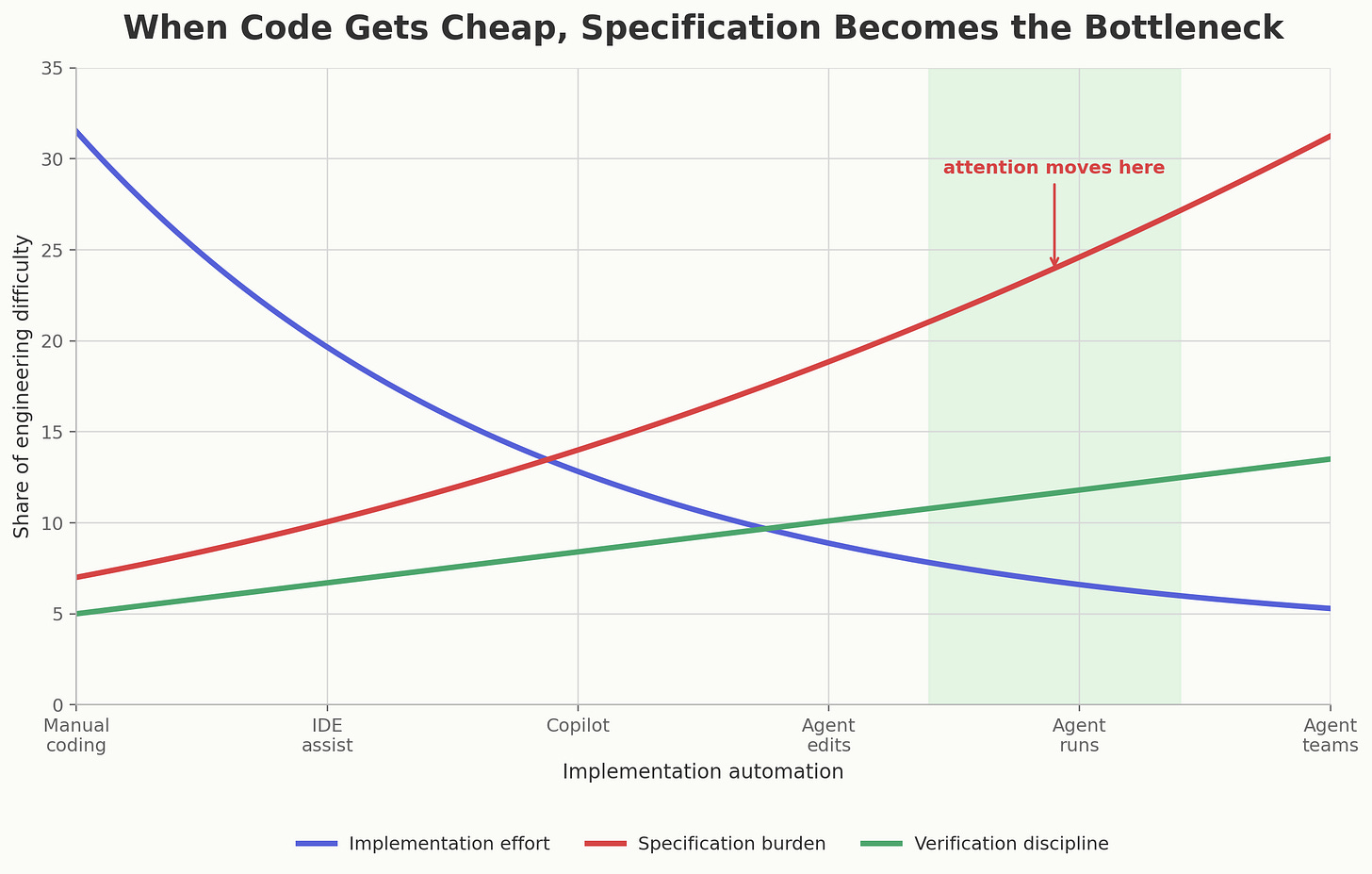

For decades, we hid the specification problem inside meetings, backlogs, code reviews, QA cycles, incident retrospectives, and the private mental models of senior engineers. A lot of software engineering was never “writing code.” It was dragging an underspecified idea through enough friction that the missing pieces were forced into the open.

Agents reduce the friction of producing code. That is wonderful. It also means the missing pieces surface later, because the system can now produce a plausible implementation before anyone has really decided what the implementation is supposed to mean.

In the old world, vague requirements ran into human slowness. In the agent world, vague requirements run into machine speed.

When implementation gets cheaper, the bottleneck does not disappear. It moves into specification and verification.

But Writing the Spec Is Only Half the Problem

Here’s what almost every framing of this tradeoff leaves out: a spec needs to be validated before you hand it to an agent.

This sounds obvious stated plainly. In practice, it’s systematically ignored.

When you write a spec — even a careful one — it can fail in ways that are invisible until the agent executes against it. It can be internally inconsistent: two requirements that contradict each other, neither obviously wrong in isolation. It can be incomplete: it covers the happy path thoroughly and says nothing about what happens when the third-party API returns a 429. It can be technically correct but untestable: the spec describes behavior that can’t be mechanically verified. And most insidiously, it can be precisely what you wrote but not what you meant.

An agent executing faithfully against a flawed spec produces something that is difficult to debug. It passed every check it was given. The problem isn’t in the implementation — it’s upstream, in the spec itself. And now the correction loop is more expensive, because you have to unwind not just code but reasoning.

Spec validation is therefore a distinct cost category that lives between “write spec” and “run agent.” It asks: is this spec internally consistent? Is it complete enough to constrain the agent usefully without over-constraining valid solutions? Does it actually describe the thing we intend to build?
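Part of that validation can be mechanical. The following is a minimal sketch, assuming a spec is represented as a set of situations plus criteria that each claim to cover some of them; the `Spec`, `Criterion`, and `find_gaps` names and the example situations are hypothetical. It catches only two of the failure types above (missing coverage and criteria with no automated check). Contradictions and intent mismatches still need a reviewer.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set


@dataclass
class Criterion:
    text: str
    covers: Set[str]              # situations this criterion addresses
    check: Optional[str] = None   # name of an automated test, if one exists


@dataclass
class Spec:
    situations: Set[str]          # every situation the system must handle
    criteria: List[Criterion] = field(default_factory=list)


def find_gaps(spec: Spec) -> Dict[str, List[str]]:
    """Report situations no criterion covers and criteria with no automated check."""
    covered: Set[str] = set()
    for criterion in spec.criteria:
        covered |= criterion.covers
    return {
        "uncovered": sorted(spec.situations - covered),
        "untestable": [c.text for c in spec.criteria if c.check is None],
    }


spec = Spec(
    situations={"success", "rate_limited_429", "timeout"},
    criteria=[
        Criterion("Returns the parsed payload on 200", {"success"}, check="test_success"),
        Criterion("Timeouts feel graceful to the user", {"timeout"}),
    ],
)
gaps = find_gaps(spec)
print(gaps)
```

Here the happy path is covered and tested, the 429 case is missing entirely, and the timeout criterion is phrased in a way nothing can verify mechanically.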

That validation work is human time, or it’s agent time, or ideally it’s both — but it isn’t zero. The moment you add it to the ledger honestly, the picture changes.

How Agents Can Write Specs

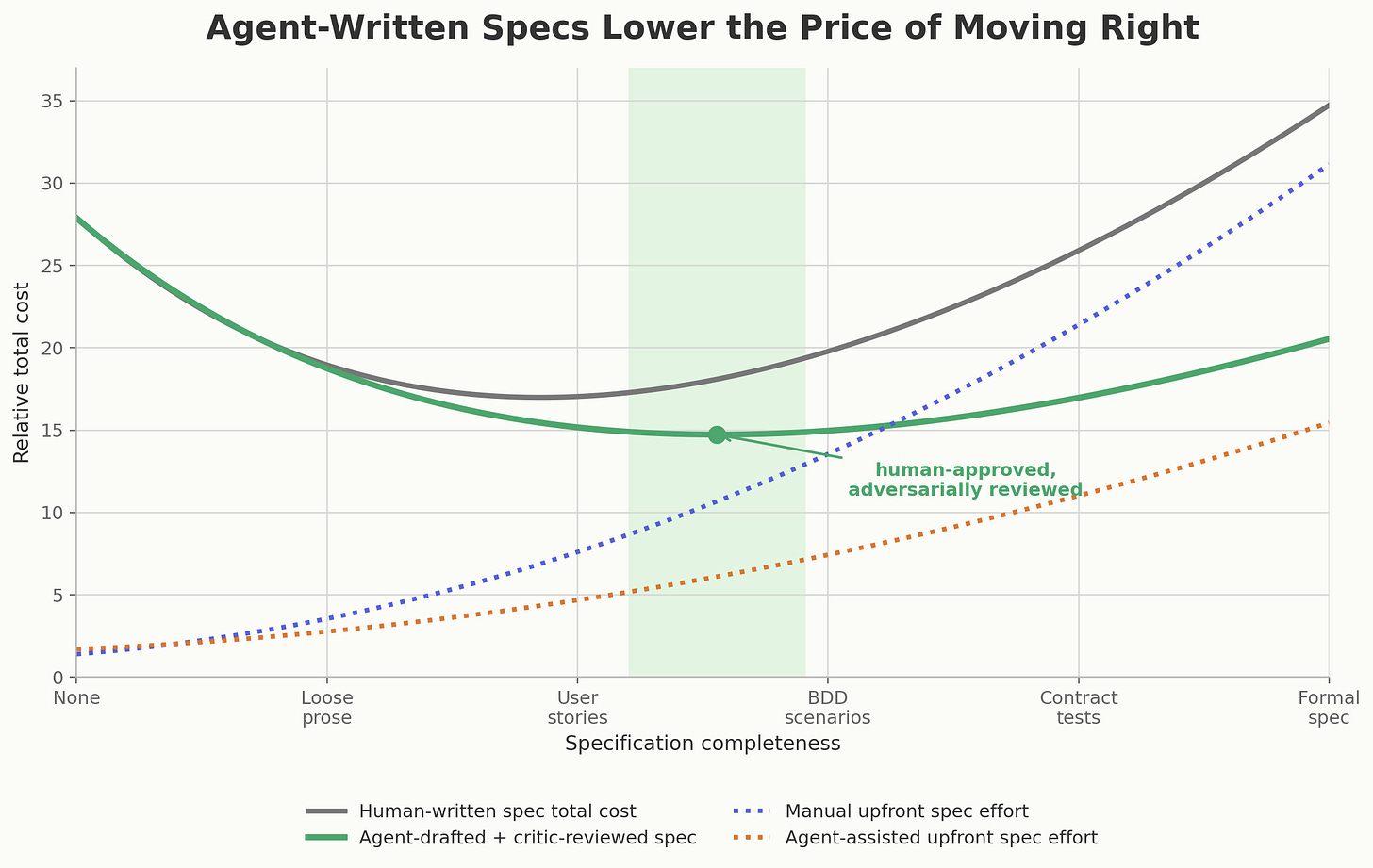

There’s a third strategy this two-pole framing systematically ignores: use agents to write and validate the spec, then use implementation agents to execute against it.

This changes the cost structure of the spec side of the curve. Instead of heavy human effort to produce acceptance criteria or BDD scenarios, a spec-drafting agent produces a first version from rough intent. A spec-validation agent — with a different role and system prompt, possibly with search access or domain knowledge — stress-tests that draft for consistency, completeness, and testability. A test-writing agent translates the surviving claims into executable checks. You review the result, which is faster than writing it from scratch.

The important detail is that the agent should not merely “write requirements.” That produces polished fog.

A useful spec-writing agent behaves less like a stenographer and more like a skeptical product engineer. It should name assumptions. It should separate goals from non-goals. It should produce examples and counterexamples. It should say which requirements are mechanically testable and which ones still depend on human judgment. It should identify the failure modes a lazy implementation would probably miss. It should ask what must be invariant across valid solutions.

The best prompt isn’t “write me a spec.” It is closer to this:

Draft the smallest spec that would let another agent implement this safely. Include assumptions, non-goals, acceptance criteria, edge cases, observable outcomes, and open questions. Then mark which parts can become automated tests and which parts require human review.

Then you run a different agent against the output:

Attack this spec. Find contradictions, ambiguous terms, hidden dependencies, untestable claims, missing failure modes, and places where an implementation could pass the written criteria while still violating the intent.

The sweet spot is not agent-written prose. It is human-approved, agent-drafted, adversarially reviewed specification with as much of the oracle made executable as the domain allows.
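The draft-then-attack loop can be sketched as a small orchestration function. This is an outline under stated assumptions, not a real agent framework: `Agent` is a hypothetical callable that takes a prompt and returns text, the revision prompt wording is invented for illustration, and treating an empty reply from the attacker as "no findings" is a simplification.

```python
from typing import Callable, Tuple

Agent = Callable[[str], str]  # hypothetical: prompt in, text out

DRAFT_PROMPT = (
    "Draft the smallest spec that would let another agent implement this safely. "
    "Include assumptions, non-goals, acceptance criteria, edge cases, observable "
    "outcomes, and open questions. Then mark which parts can become automated "
    "tests and which parts require human review."
)
ATTACK_PROMPT = (
    "Attack this spec. Find contradictions, ambiguous terms, hidden dependencies, "
    "untestable claims, missing failure modes, and places where an implementation "
    "could pass the written criteria while still violating the intent."
)


def draft_and_attack(
    intent: str, drafter: Agent, attacker: Agent, max_rounds: int = 3
) -> Tuple[str, str]:
    """Return (spec, unresolved_findings) for a human to approve or reject."""
    if max_rounds < 1:
        raise ValueError("max_rounds must be at least 1")
    spec = drafter(f"{DRAFT_PROMPT}\n\nIntent:\n{intent}")
    rounds = 0
    while True:
        findings = attacker(f"{ATTACK_PROMPT}\n\nSpec:\n{spec}")
        rounds += 1
        # Stop when the attacker finds nothing or the round budget is spent.
        if not findings.strip() or rounds >= max_rounds:
            return spec, findings
        spec = drafter(
            "Revise this spec to resolve the findings.\n\n"
            f"Spec:\n{spec}\n\nFindings:\n{findings}"
        )
```

The function deliberately returns unresolved findings instead of hiding them, so the human reviewer sees what the attacker still objects to before approving anything.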

Agents do not remove the need for a spec. They can lower the cost of moving toward the useful part of the curve, where the spec is complete enough to guide implementation but still reviewed by a human.

This doesn’t make spec validation disappear. It changes who does it and at what cost. The structural requirement — that the spec be validated before the implementation agents run — remains. What changes is that agents are now doing part of that work.

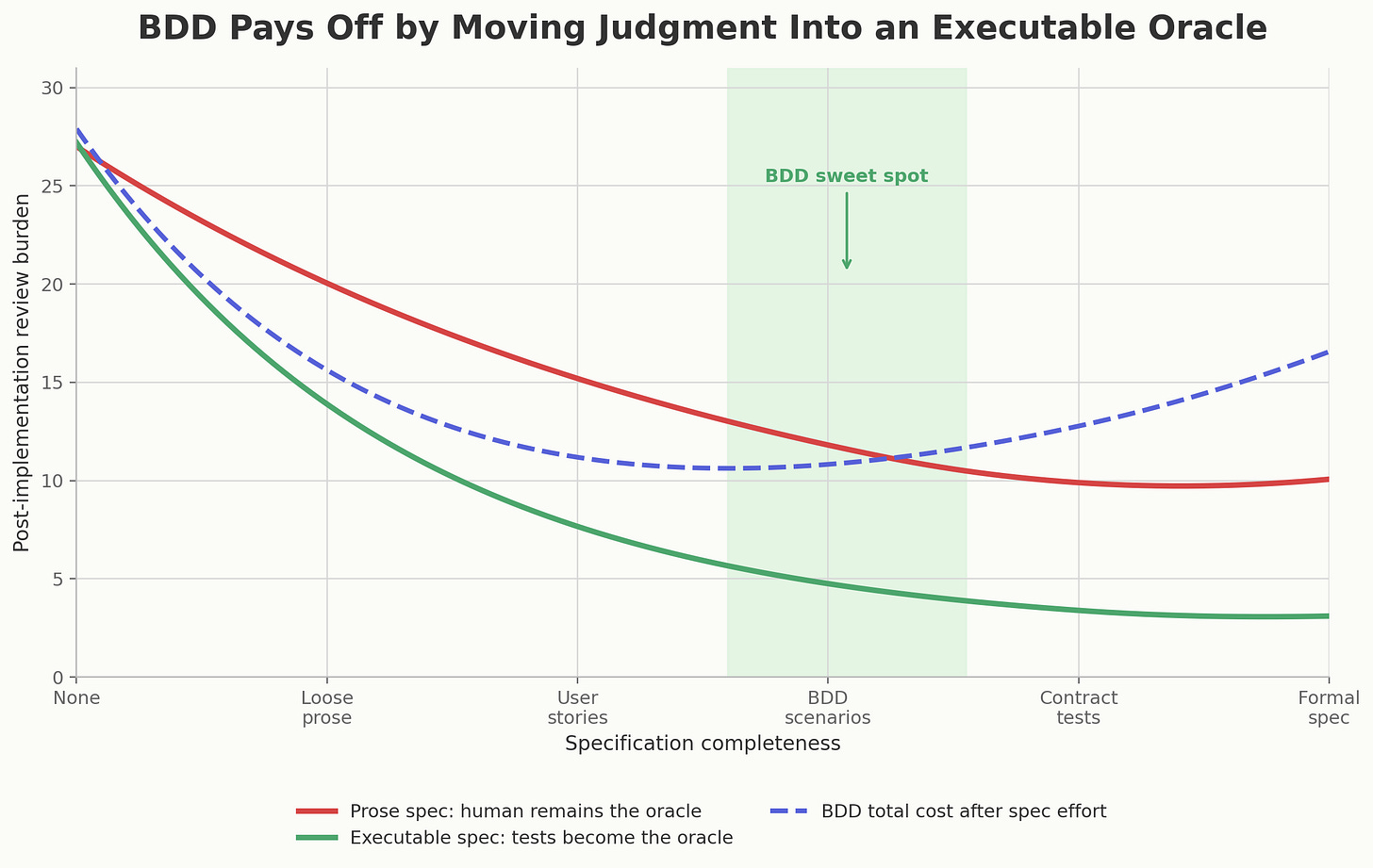

How BDD Partially Solves This

Behavior-driven development, when done well, collapses spec writing and spec validation into the same artifact. A Gherkin scenario is simultaneously a description of intent and an executable test. You can run the spec against a skeleton implementation immediately and observe whether the description produces coherent behavior. The act of making the spec executable forces a kind of validation that prose acceptance criteria don’t — some kinds of ambiguity have to be resolved before the scenario can even run.

This is why the minimum of the total cost curve doesn’t just reflect reduced rework. It reflects the structural advantage of a format where validation is built into the medium.

BDD earns its keep when it moves judgment out of repeated human review and into an executable oracle. That is why its sweet spot appears around behavior that is stable enough to test.
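To show what "the spec is the test" looks like, here is a scenario written in given/when/then form and run against a skeleton implementation. It uses plain Python instead of a Gherkin runner, and both `fetch_with_retry` and the fake API are hypothetical stand-ins invented for this example.

```python
from typing import Callable, List, Tuple

Response = Tuple[int, str]  # (status code, body)


def fetch_with_retry(call: Callable[[], Response], max_attempts: int = 3) -> Response:
    """Skeleton implementation under test: retry while the API answers 429."""
    response = call()
    for _ in range(max_attempts - 1):
        if response[0] != 429:
            break
        response = call()
    return response


def scenario_rate_limited_then_ok() -> None:
    """
    Scenario: the API is rate limited once, then recovers
      Given an API that answers 429 and then 200
      When the client fetches the resource
      Then the client returns the 200 response
      And the API was called exactly twice
    """
    # Given
    answers: List[Response] = [(429, ""), (200, "payload")]
    calls: List[int] = []

    def fake_api() -> Response:
        calls.append(1)
        return answers[len(calls) - 1]

    # When
    result = fetch_with_retry(fake_api)

    # Then
    assert result == (200, "payload")
    assert len(calls) == 2


scenario_rate_limited_then_ok()
```

Writing the scenario forces decisions a prose criterion could dodge, such as how many calls count as acceptable. It does not settle whether retrying without a delay is what anyone actually wanted.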

The catch is that someone still has to write the scenarios well. Gherkin can be written badly. Business-language specs can be ambiguous in ways that the BDD framework doesn’t catch because ambiguity lives in semantics, not syntax. The format helps, but it isn’t a substitute for discipline.

Multi-Agent Pipelines Break Everything

If you’re running a single agent on a well-bounded task, under-specification is recoverable. The feedback loop is tight, correction is local, and the cost is bounded.

Multi-agent pipelines are a different class of problem entirely.

When Agent A produces output that becomes Agent B’s input, any interpretive drift from A compounds into B’s execution. B doesn’t know that A went slightly off-course. B works hard and confidently on the wrong foundation. By the time the output surfaces to a human, the error has been amplified and obscured through multiple layers of apparently coherent work.
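A back-of-the-envelope calculation shows why this compounds. The sketch assumes each handoff independently preserves the original intent with the same probability, which is a deliberate simplification; the 0.9 figure is an arbitrary example, not a measured rate.

```python
def intent_preserved(per_handoff_fidelity: float, handoffs: int) -> float:
    """Probability the original intent survives every handoff (toy model)."""
    return per_handoff_fidelity ** handoffs


for n in (1, 3, 5):
    print(n, round(intent_preserved(0.9, n), 3))
```

With a hypothetical 90% fidelity per handoff, five handoffs leave roughly a 59% chance that the final output still reflects what was originally meant.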

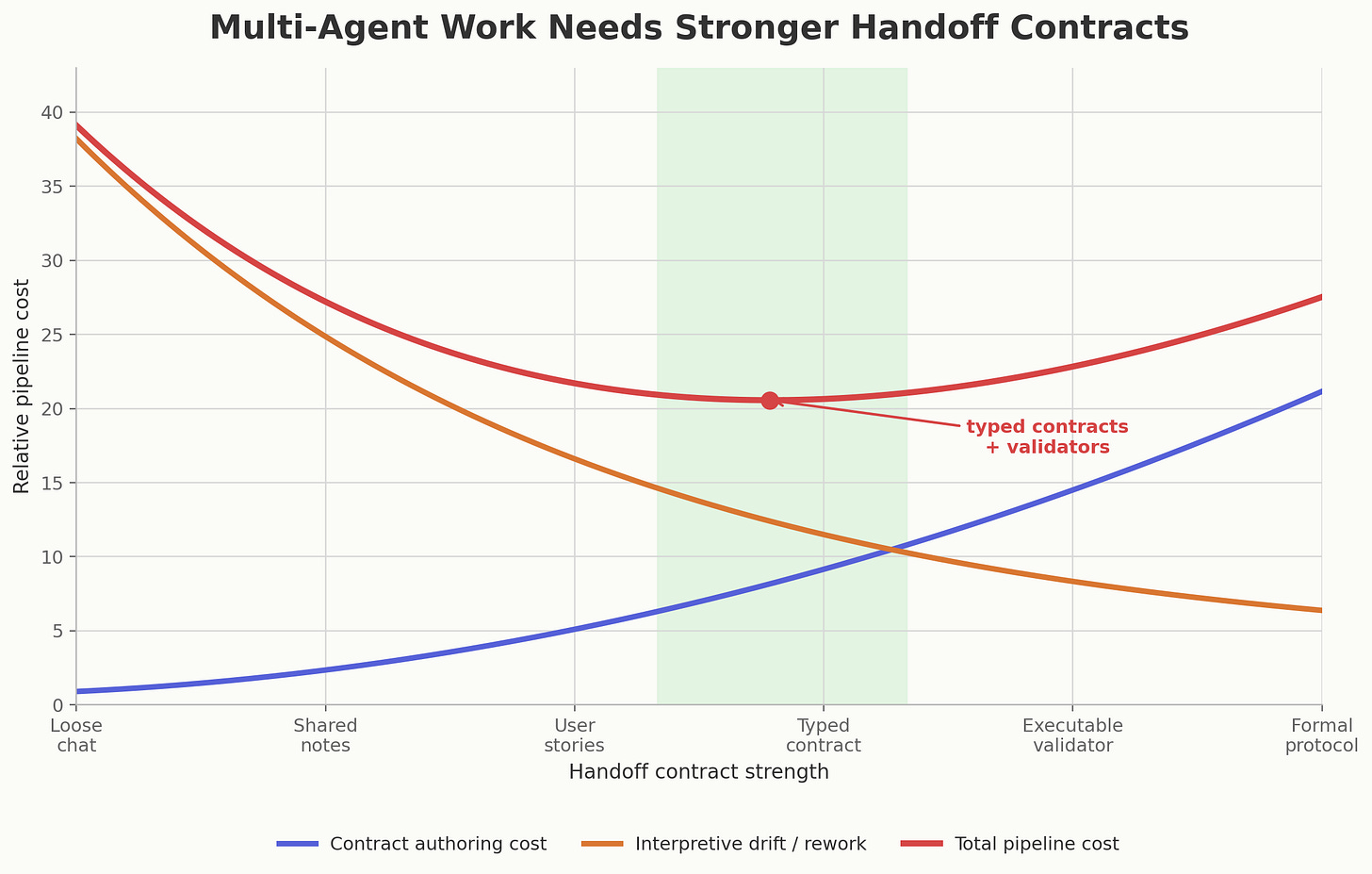

This shifts the breakeven point decisively toward specification. In a multi-agent system, a spec isn’t just guidance for a single execution — it’s a coordination contract between agents. The less precise that contract, the more each agent’s interpretive freedom introduces variance that accumulates. You want a strongly typed interface between agents, not a loose conversational handoff.

For multi-agent work, the x-axis is no longer just “how much did we specify?” It is “how strong is the handoff contract?” The minimum moves toward typed contracts and executable validators.
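One way to picture a typed contract with an executable validator is a frozen dataclass that rejects malformed handoffs at the boundary. The field names, invariants, and `HandoffError` type below are assumptions made up for illustration; a real pipeline would define its own schema.

```python
from dataclasses import dataclass, fields
from typing import Any, Dict, Tuple


class HandoffError(ValueError):
    """Raised when Agent A's output violates the contract Agent B relies on."""


@dataclass(frozen=True)
class Handoff:
    task_id: str
    summary: str
    sources: Tuple[str, ...]
    confidence: float  # invariant: 0.0 <= confidence <= 1.0

    def __post_init__(self) -> None:
        if not isinstance(self.task_id, str) or not self.task_id:
            raise HandoffError("task_id must be a non-empty string")
        if not isinstance(self.summary, str) or not self.summary.strip():
            raise HandoffError("summary must be non-empty text")
        if not self.sources:
            raise HandoffError("at least one source is required")
        if not isinstance(self.confidence, (int, float)) or not 0.0 <= self.confidence <= 1.0:
            raise HandoffError("confidence must be a number in [0, 1]")


def parse_handoff(raw: Dict[str, Any]) -> Handoff:
    """Validate Agent A's raw output before Agent B is allowed to see it."""
    expected = {f.name for f in fields(Handoff)}
    if set(raw) != expected:
        raise HandoffError(f"fields must be exactly {sorted(expected)}, got {sorted(raw)}")
    if not isinstance(raw["sources"], (list, tuple)):
        raise HandoffError("sources must be a list of strings")
    return Handoff(
        task_id=raw["task_id"],
        summary=raw["summary"],
        sources=tuple(raw["sources"]),
        confidence=raw["confidence"],
    )
```

Failing loudly at the boundary is the design choice that matters here: a rejected handoff is a local, diagnosable error, while an accepted but malformed one becomes Agent B's confident starting point.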

Validation of that contract matters correspondingly more. If the spec that coordinates your agents is flawed, you don’t have one agent doing the wrong thing — you have all of them, in parallel, doing differently-wrong things.

What Survives From Methodology

So does this make everything we learned about coordinating software teams obsolete?

No. But it does change which parts were load-bearing.

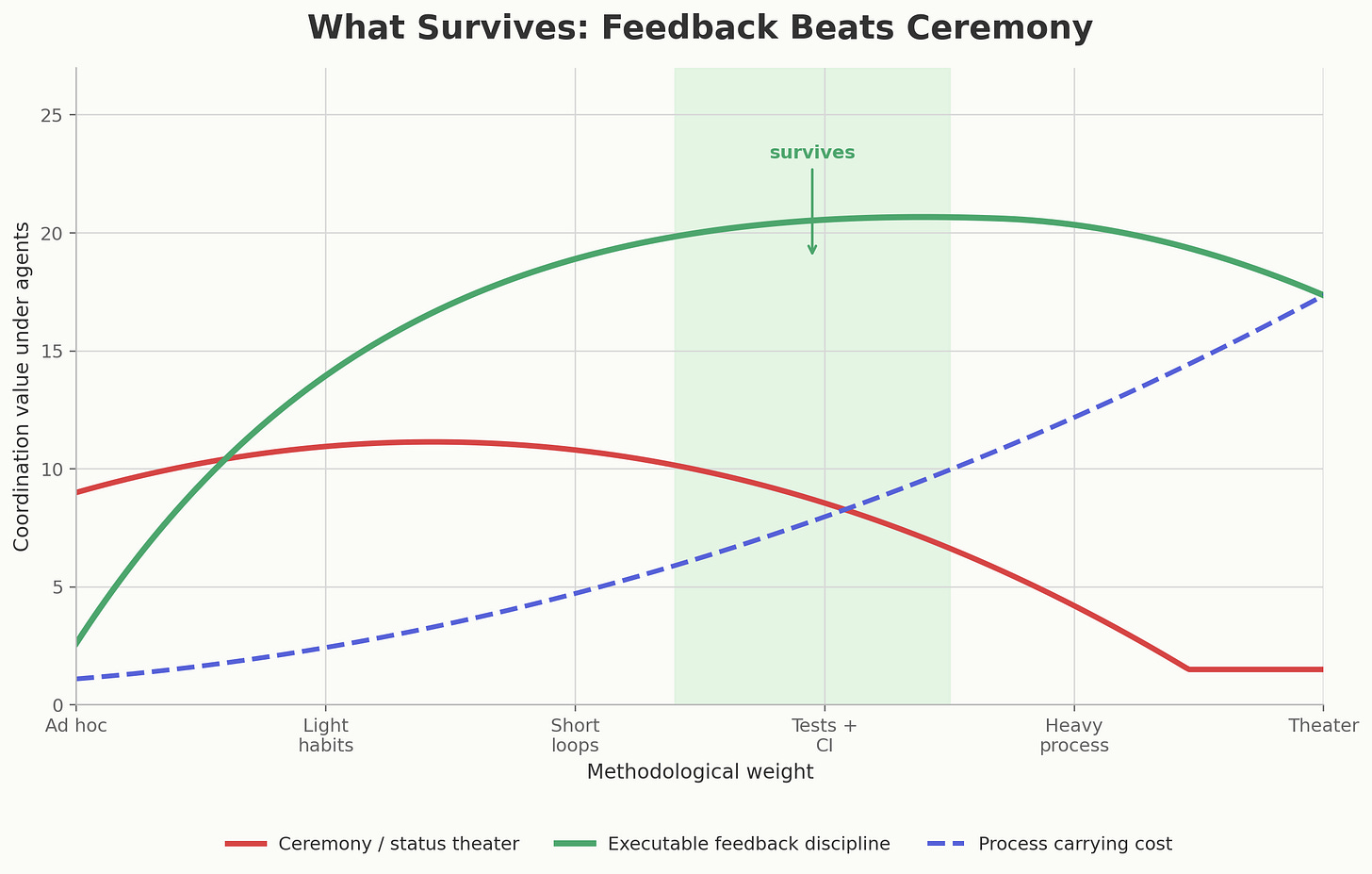

Agile as theater is in trouble. Standups where people recite status into the air, estimation rituals that produce fictional precision, ticket ceremonies whose main function is to reassure management that uncertainty has been domesticated — agents do not need those. Honestly, humans didn’t either.

Agile as a feedback philosophy survives. Short cycles survive. Working software over abstract progress survives. Customer collaboration survives. The insistence that plans should bend when reality speaks survives. If anything, agents make this more important, because they can generate a lot of convincing wrongness very quickly. The feedback loop has to get tighter, not looser.

XP survives even better. Test-first thinking survives because executable oracles are more valuable when implementation gets cheaper. Pair programming mutates into human-agent pairing, but the underlying idea remains: keep design judgment close to code production. Continuous integration survives because every agentic change needs a fast, impartial gate. Refactoring survives because agents can produce working code that is locally correct and structurally mediocre. Small releases survive because large invisible deltas are where both humans and agents lose the plot.

What probably fades is methodology as coordination theater for large groups of humans. What survives is methodology as a set of constraints that make ambiguity cheaper to discover.

Methodology survives where it creates fast feedback. It fades where it only creates status artifacts.

The interesting question is not whether Agile or XP “wins” in the agent era. The interesting question is which practices still reduce the cost of discovering that the spec was wrong.

Where to Actually Invest

The practical takeaway from this analysis is not “always write full BDD specs” and it’s not “always let agents roam.” It’s that the optimal investment point is task-dependent, and the honest calculation includes spec validation as a real cost.

There is no universal optimum. The sweet spot moves with the work.

For a single agent on a small, well-bounded task, the sweet spot is usually structured intent: a goal, examples, non-goals, and a few acceptance criteria. BDD may be overkill. Zero spec is still lazy accounting.

For deterministic, well-understood work — API integrations, CRUD services, data transformations — the breakeven point sits further right. More specification pays off faster because the domain is constrainable and the tests are automatable. Skimping on spec here is just deferring rework.

For exploratory or creative work — architecture decisions, novel problem approaches, research synthesis — over-specification constrains exactly what the agent’s flexibility is good for. The breakeven sits further left. Use the agent’s interpretive freedom deliberately, but put boundaries around the exploration.

For multi-agent systems, the sweet spot shifts right again. The handoff is the product. Every agent boundary needs a contract: schema, invariants, allowed ambiguity, validation checks, and failure behavior. Otherwise you’re not orchestrating agents. You’re compounding interpretations.

In all cases: validate your spec. Whether that means a human review, an agent stress-test, or an executable format like BDD that forces structural consistency, the cost of skipping it is paid later, at higher interest, with worse diagnostics.

The seductive promise of zero-spec agent work is real, but the ledger it ignores is also real. The agents are getting better. The accounting problem is still ours.