CLI Tools vs MCP: The Hidden Architecture Behind AI Agents

Why AI models behave like terminal users—and how Java developers can use JBang and Skills to build better AI workflows.

The enterprise AI discussion in 2026 has clearly moved past “add a chatbot to the side of the app.” What architects are dealing with now is something else: systems where the model is not a feature but part of the execution fabric. I was at Devnexus last week, and that shift was visible everywhere.

Most teams start with one agent. That agent reads prompts, calls tools, searches data, writes output, and maybe even tries to validate its own work. For a small prototype, this feels productive. In a real enterprise workflow, it breaks down. The same agent is suddenly expected to research, reason, execute, validate, and stay within security and compliance boundaries. That is too much cognitive load in one place. The context window fills up, tool selection gets noisy, and reliability drops.

So the architecture naturally moves toward multi-agent systems. One agent investigates. Another transforms data. Another validates. Another has permission to execute. This looks a lot more like how enterprise software is already built: specialized roles, bounded responsibilities, and controlled handoffs. But once you make that move, one technical question becomes central: how do these agents talk to real systems?

Most architects still answer that question with the same mental model they use for microservices. If a capability matters, expose it as an API. Put it behind HTTP. Maybe use a specialized protocol such as MCP. That reasoning is understandable. It matches how we already structure distributed systems. Anthropic introduced the Model Context Protocol in late 2024 specifically as an open standard for connecting AI systems to tools and data sources through MCP clients and servers.

On paper, that sounds clean. In practice, many people building real agent systems are reaching for something simpler: the command line. Jannik Reinhard’s article makes that argument directly. His point is not that MCP is useless. His point is that for many agent workflows, CLI tools align much more naturally with how the model actually operates: reason, run a command, inspect output, decide the next step.

That is the important architectural shift for Java developers. For years, we treated the HTTP endpoint as the default service boundary. But agent-driven systems increasingly treat the terminal as the integration surface. If you want Java systems to participate in that world, the fastest path is often not another microservice. The fastest path is a well-designed CLI tool.

And in the Java ecosystem, the most practical way to build that tool is JBang. I’ve written about JBang and Python scripts before, so check out that post too!

Why the API-Centric Model Starts to Hurt

The problem with an API-first agent architecture is not that APIs are bad. The problem is that the protocol overhead often becomes part of the model’s working memory.

MCP gives you structure. It also gives you schemas, parameter definitions, and tool metadata that often have to be loaded before the agent can do any real work. In Reinhard’s example, a GitHub MCP server with 93 tools costs roughly 55,000 tokens before the actual task starts. In his broader enterprise example, stacking multiple MCP servers pushes tool-definition overhead beyond 150,000 tokens. He contrasts that with CLI usage, where a familiar command like `gh issue create ...` consumes only the command and its output, not a giant schema payload.

That token math changes the architecture. Tokens are not just billing units. They are working memory. Every token spent on protocol plumbing is a token not spent on reasoning, validation, or problem solving. In Reinhard’s side-by-side Intune example, the MCP flow consumed about 145,000 tokens for a 50-device workflow, while the CLI flow used about 4,150. He presents that as roughly a 35x reduction. Even if your own numbers differ, the structural lesson is hard to ignore: agent systems become fragile when tool discovery and transport overhead start to dominate the context window.
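Using Reinhard’s reported figures (his measurements, not new ones), the arithmetic behind the ratio works out as follows:

```latex
\frac{145{,}000}{4{,}150} \approx 34.9 \approx 35\times,
\qquad 145{,}000 - 4{,}150 = 140{,}850 \text{ tokens}
```

In other words, for the same 50-device task, the MCP flow carried roughly 140,850 more tokens through the context window than the CLI flow did.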

There is another problem. MCP tends to move intermediate data through the model. If the tool returns a large log, a large transcript, or a wide JSON payload, the model has to carry that material through its reasoning loop. A shell does not have that limitation. The shell can filter before the model ever sees the result.

This is why the terminal works so well for agents. The shell already gives you progressive disclosure. The tool can return exactly what the model needs right now, not the entire universe of data that happens to be available.
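A minimal sketch of that filtering step, assuming a hypothetical `log-filter` style tool written in plain Java: it reads a log on standard input, keeps only the last N lines that match a regex, and prints a one-line summary first. The class name, the default pattern `ERROR`, and the cap of 20 lines are illustrative choices; a production tool would more likely emit JSON, but plain lines keep the sketch dependency-free.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.nio.charset.StandardCharsets;
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;
import java.util.regex.Pattern;

// Hypothetical log-filter: reduces a large log to a few matching lines
// before any of it reaches the model.
class LogFilter {

    record Result(long matched, List<String> lines) {}

    static Result filter(BufferedReader in, Pattern pattern, int limit) throws IOException {
        Deque<String> tail = new ArrayDeque<>();
        long matched = 0;
        String line;
        while ((line = in.readLine()) != null) {
            if (pattern.matcher(line).find()) {
                matched++;
                tail.addLast(line);
                if (tail.size() > limit) {
                    tail.removeFirst(); // keep only the most recent matches
                }
            }
        }
        return new Result(matched, new ArrayList<>(tail));
    }

    // Usage: cat app.log | java LogFilter.java "ERROR|WARN" 20
    public static void main(String[] args) throws IOException {
        Pattern pattern = Pattern.compile(args.length > 0 ? args[0] : "ERROR");
        int limit = args.length > 1 ? Integer.parseInt(args[1]) : 20;
        BufferedReader in = new BufferedReader(new InputStreamReader(System.in, StandardCharsets.UTF_8));
        Result result = filter(in, pattern, limit);
        // Summary first, then only the retained lines.
        System.out.println("matched=" + result.matched() + " shown=" + result.lines().size());
        result.lines().forEach(System.out::println);
    }
}
```

The model sees how many lines matched and a bounded sample, instead of the whole file.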

Why Models Behave Like Terminal Users

Reinhard makes another useful point: models are already fluent in terminal patterns. They have seen massive amounts of documentation, tutorials, Stack Overflow posts, shell snippets, GitHub READMEs, and operational examples involving tools such as `git`, `docker`, `kubectl`, `gh`, and `az`. So when an agent sees a command like `git log --oneline -10`, it is working with a familiar pattern. When it sees a new MCP schema at runtime, it first has to learn the abstraction before it can solve the task.

This is also where Skills become important. A model may already recognize command-line patterns, but Skills make those patterns executable in a more disciplined way. They provide a reusable operating method around the CLI: which command to run, how to structure arguments, what output format to expect, how to detect failure, and how to recover safely. In that sense, Skills play a role similar to shell scripts, operational runbooks, or internal platform conventions. They do not replace CLI tools. They make CLI tools easier for agents to use correctly and consistently.

That is why CLI composability matters. The shell gives agents a native environment for chaining operations. One command produces JSON, another filters it, another extracts the field, another writes the final file. The reasoning loop becomes smaller and more focused. The model spends less effort learning the tool surface and more effort deciding what to do next.

This is also why the terminal fits multi-agent systems so well. One agent can call a diagnostic command. Another can consume the filtered output. A third can validate the result. The terminal becomes the shared execution layer, while the Skills define safe and repeatable usage patterns around that layer.

So CLI success is not just about the shell being familiar. It is also about giving the model a reusable procedure for working inside that environment.

The Java Terminal Renaissance

If this were 2016, the argument would mostly stop here because Java would still be the wrong tool for this environment. Too much ceremony. Too much packaging. Too much runtime overhead.

That is not really true anymore.

JBang changed the ergonomics. The simplest script is a single .java file with a normal main method, and JBang supports dependency and execution metadata through script directives embedded in the file itself. It also supports remote execution, which means a script can be executed directly from a URL.
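For illustration, a dependency-free JBang script could look like the sketch below. The first line is an ordinary Java comment to `javac`, but it also lets the file run as a shell script on Unix-like systems. `//JAVA 17+` is a JBang directive requesting a minimum Java version; the tool name `sys-info` and the class name `sys_info` are hypothetical.

```java
///usr/bin/env jbang "$0" "$@" ; exit $?
//JAVA 17+

import java.util.Locale;

// Hypothetical sys-info tool: a single file with a plain main method and no //DEPS.
class sys_info {

    // Hand-built JSON keeps the script dependency-free; adequate for numeric fields only.
    static String toJson(int processors, long maxMemory) {
        return String.format(Locale.ROOT, "{\"processors\": %d, \"maxMemory\": %d}", processors, maxMemory);
    }

    public static void main(String[] args) {
        Runtime rt = Runtime.getRuntime();
        System.out.println(toJson(rt.availableProcessors(), rt.maxMemory()));
    }
}
```

Locally this runs with `jbang sys-info.java`. Once the file is published, something like `jbang https://example.com/tools/sys-info.java` (a placeholder URL) runs it the same way; JBang will typically ask you to trust a new remote source before executing it.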

That is a big deal for agents. It means your Java CLI tool no longer needs a Maven project, a shaded jar, and a release process just to become usable. You can publish a single file and run it. You can host it in version control and let the agent fetch it directly. You can update the capability by changing one source file instead of pushing a whole service deployment.

Then there is GraalVM. Native Image compiles a Java application ahead of time into a standalone executable that starts almost instantly, uses less memory, and reaches its peak performance without JIT warmup.

For agent workloads, that matters a lot. Agents do not call tools once. They call them repeatedly. They probe. They test. They retry. They branch. If every invocation pays full JVM startup cost, that latency compounds quickly. JBang reduces friction for distribution and local execution. GraalVM removes the remaining startup penalty when the tool becomes performance-critical.
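To check whether startup cost matters for your own tools, you can time repeated invocations the way an agent would make them. This is a rough sketch, not a benchmark harness: it runs one command N times with `ProcessBuilder`, discards the output, and reports per-run wall-clock milliseconds. Which commands you compare (for example, a JVM-launched script versus a natively compiled binary of the same tool) is up to your setup; JBang’s `--native` option can produce the native build if GraalVM is installed (check the JBang docs for your version).

```java
import java.io.IOException;
import java.util.ArrayList;
import java.util.List;

// Rough timing sketch: how long do N sequential invocations of a CLI tool take?
class StartupTimer {

    static List<Long> time(List<String> command, int runs) throws IOException, InterruptedException {
        List<Long> millis = new ArrayList<>();
        for (int i = 0; i < runs; i++) {
            long start = System.nanoTime();
            Process p = new ProcessBuilder(command)
                    .redirectErrorStream(true)                       // merge stderr into stdout
                    .redirectOutput(ProcessBuilder.Redirect.DISCARD) // only timing matters here
                    .start();
            p.waitFor();
            millis.add((System.nanoTime() - start) / 1_000_000);
        }
        return millis;
    }

    // Usage: java StartupTimer.java 10 jbang ai-tool.java
    public static void main(String[] args) throws Exception {
        int runs = Integer.parseInt(args[0]);
        List<String> command = List.of(args).subList(1, args.length);
        List<Long> millis = time(command, runs);
        long total = millis.stream().mapToLong(Long::longValue).sum();
        System.out.println("runs=" + runs + " totalMs=" + total + " perRunMs=" + millis);
    }
}
```

The total is the number to watch: an agent that probes, retries, and branches pays the per-run cost on every call.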

That combination (JBang for zero-ceremony distribution, GraalVM for low-latency execution) gives Java a very credible CLI story in 2026.

What an Agent-Native Java CLI Tool Should Look Like

A human-friendly CLI and an agent-friendly CLI are not the same thing.

If the consumer is an autonomous agent, your tool needs three things. It needs unambiguous arguments. It needs machine-readable output. And it needs reliable exit codes.

That is why Picocli is such a good fit. It gives you strict parameter handling and a predictable command surface. If the model generates a bad parameter, the command fails early and clearly. That is exactly what you want. The CLI becomes a hard boundary around the model’s probabilistic behavior.

The second requirement is structured output. JSON should be the default. Humans can still get a text mode for debugging, but the agent should not have to parse decorative console output. A JSON response on standard output is a much stronger contract than “some lines that probably contain the information.”

The third requirement is deterministic exit behavior. A return code of 0 means success. Non-zero means failure. That sounds basic, but it is the entire control channel for an orchestrator deciding whether to continue, retry, escalate, or stop.

Here is a small example that fits that model:

```java
///usr/bin/env jbang "$0" "$@" ; exit $?
//DEPS info.picocli:picocli:4.7.6
//DEPS com.fasterxml.jackson.core:jackson-databind:2.17.0
//MAIN ai_tool

import picocli.CommandLine;
import picocli.CommandLine.Command;
import picocli.CommandLine.Option;

import com.fasterxml.jackson.databind.ObjectMapper;
import com.fasterxml.jackson.databind.node.ObjectNode;

import java.lang.management.ManagementFactory;
import java.time.Instant;
import java.util.concurrent.Callable;

// Not declared public: javac only requires a public class to match its file name,
// so this class can live in ai-tool.java (a hyphen is not allowed in class names).
@Command(name = "ai-tool", mixinStandardHelpOptions = true,
        description = "Provides structured system information for AI agents")
class ai_tool implements Callable<Integer> {

    // An enum instead of a free-form String: Picocli rejects unknown values such as
    // --format xml with a usage error instead of silently falling back to JSON.
    enum Format { json, text }

    @Option(names = "--format", defaultValue = "json",
            description = "Output format: ${COMPLETION-CANDIDATES} (default: ${DEFAULT-VALUE})")
    Format format;

    public static void main(String[] args) {
        // execute() returns 0 on success, 1 if call() throws, and 2 for invalid arguments.
        int exitCode = new CommandLine(new ai_tool())
                .setCaseInsensitiveEnumValuesAllowed(true) // also accept --format JSON
                .execute(args);
        System.exit(exitCode);
    }

    @Override
    public Integer call() throws Exception {
        long uptime = ManagementFactory.getRuntimeMXBean().getUptime(); // JVM uptime in milliseconds
        int processors = Runtime.getRuntime().availableProcessors();
        long memory = Runtime.getRuntime().maxMemory();                 // max heap size in bytes

        if (format == Format.text) {
            // key=value lines, mainly for humans debugging the tool
            System.out.println("uptime=" + uptime);
            System.out.println("processors=" + processors);
            System.out.println("maxMemory=" + memory);
        } else {
            // JSON on stdout is the default contract for agents
            ObjectMapper mapper = new ObjectMapper();
            ObjectNode node = mapper.createObjectNode();
            node.put("timestamp", Instant.now().toString());
            node.put("uptime", uptime);
            node.put("processors", processors);
            node.put("maxMemory", memory);
            System.out.println(mapper.writerWithDefaultPrettyPrinter().writeValueAsString(node));
        }
        return 0;
    }
}
```

This is small, but it already expresses the right contract. The command accepts a controlled argument, returns structured output by default, and finishes with a deterministic status code.
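On the orchestrator side, that status code is what drives the decision to continue, retry, escalate, or stop. A minimal sketch, assuming the tool follows Picocli’s default convention for `CommandLine.execute` (0 for success, 1 when the command logic throws, 2 for invalid arguments). The `Decision` values and the retry budget are hypothetical policy choices, not a standard.

```java
// Hypothetical orchestrator policy keyed on a CLI tool's exit code.
class ExitCodePolicy {

    enum Decision { CONTINUE, RETRY, FIX_ARGUMENTS, ESCALATE }

    static Decision decide(int exitCode, int attempt, int maxAttempts) {
        return switch (exitCode) {
            case 0 -> Decision.CONTINUE;      // success: pass stdout to the next step
            case 2 -> Decision.FIX_ARGUMENTS; // usage error: repeating the same call won't help
            default -> attempt < maxAttempts
                    ? Decision.RETRY          // runtime failure: may be transient
                    : Decision.ESCALATE;      // out of retries: hand off to a supervisor or human
        };
    }

    public static void main(String[] args) {
        System.out.println(decide(0, 1, 3)); // CONTINUE
        System.out.println(decide(2, 1, 3)); // FIX_ARGUMENTS
        System.out.println(decide(1, 1, 3)); // RETRY
        System.out.println(decide(1, 3, 3)); // ESCALATE
    }
}
```

Distinguishing usage errors from runtime failures matters here: a bad argument should go back to the model for correction, not into a retry loop.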

Tool Discovery Without MCP Bloat

A fair objection to CLI-first architecture is tool discovery. MCP has a built-in mechanism for advertising tool definitions. If you remove MCP, how does the agent know what exists?

In the Java world, JBang gives you a very practical answer: catalogs. A JBang catalog (a `jbang-catalog.json` file) maps short aliases to scripts, `jbang app install` can turn a script into a regular command, and the catalog itself can serve as a lightweight registry of available tools. Instead of injecting giant JSON schemas into the model context, your orchestrator can maintain a compact manifest of aliases and descriptions.

That manifest can be tiny. A short Markdown list like this is often enough:

```markdown
## Available Tools

- `jbang sys-info` - Returns uptime, CPU count, and max memory as JSON
- `jbang repo-check` - Scans a Git repository and reports branch, remotes, and dirty state
- `jbang log-filter` - Extracts matching lines from local application logs
```

This gives the model the discovery layer it needs without paying the token cost of full protocol schemas. It also keeps the descriptions human-editable. Architects can curate the tool surface instead of dumping everything into the model and hoping for the best.
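If the orchestrator owns the tool list, it can render that manifest itself instead of maintaining it by hand. A sketch with a hypothetical `ToolEntry` record; in practice the entries might be derived from your JBang catalog, but reading `jbang-catalog.json` would need a JSON parser and is left out to keep the example dependency-free.

```java
import java.util.List;

// Renders a compact Markdown tool manifest from a curated list of entries.
class ToolManifest {

    record ToolEntry(String alias, String description) {}

    static String render(List<ToolEntry> tools) {
        StringBuilder sb = new StringBuilder("## Available Tools\n\n");
        for (ToolEntry t : tools) {
            sb.append("- `jbang ").append(t.alias()).append("` - ")
              .append(t.description()).append('\n');
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        System.out.print(render(List.of(
                new ToolEntry("sys-info", "Returns uptime, CPU count, and max memory as JSON"),
                new ToolEntry("log-filter", "Extracts matching lines from local application logs"))));
    }
}
```

Curating the list in code (or config) gives architects one place to decide which tools a given agent role even gets to see.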

Configuration and State

CLI tools for agents should stay as stateless as possible.

That is why environment variables are a good default for configuration. They work well with shells, containers, and ephemeral sandboxes. They avoid file mutation. They reduce race conditions. They fit how orchestration systems already inject secrets and environment-specific values.

A pattern like this is simple and effective:

```bash
export AI_TOOL_MODE=production
```

And in Java:

```java
String mode = System.getenv("AI_TOOL_MODE");
```

This keeps the tool portable and easy to run under different agent roles.
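`System.getenv` returns `null` when a variable is unset, so an agent-facing tool should decide explicitly what happens in that case. A sketch under stated assumptions: the allowed modes (`development`, `production`), the `development` default, and exit code 2 for an invalid value are illustrative choices. Passing the environment in as a `Map` keeps the logic testable and free of global state.

```java
import java.util.Map;
import java.util.Optional;
import java.util.Set;

// Reads AI_TOOL_MODE with an explicit default and rejects unknown values.
class ToolConfig {

    static final Set<String> ALLOWED_MODES = Set.of("development", "production"); // hypothetical values

    static Optional<String> mode(Map<String, String> env) {
        String value = env.getOrDefault("AI_TOOL_MODE", "development");
        return ALLOWED_MODES.contains(value) ? Optional.of(value) : Optional.empty();
    }

    public static void main(String[] args) {
        Optional<String> mode = mode(System.getenv());
        if (mode.isEmpty()) {
            // Fail fast with a machine-readable error and a non-zero exit code.
            System.err.println("{\"error\": \"invalid AI_TOOL_MODE\"}");
            System.exit(2);
        }
        System.out.println("{\"mode\": \"" + mode.get() + "\"}");
    }
}
```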

Production Hardening

This is the part many demos skip. A CLI tool that works once on your laptop is not yet an enterprise integration surface.

First, you need to assume parallel execution. Agents will run tools concurrently. That means no hardcoded temp files, no global mutable state, and no fixed ports unless you really mean it. Each invocation should behave as if it is the only thing that matters, even when 20 copies are running at once.
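For the temp-file point, a minimal sketch: each invocation asks the JDK for its own uniquely named scratch directory instead of writing to a fixed path such as `/tmp/ai-tool`, and removes it afterwards. The `ai-tool-` prefix and the `result.json` file name are arbitrary.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.Comparator;
import java.util.stream.Stream;

// Per-invocation scratch space: safe when many copies of the tool run at once.
class ScratchDir {

    static Path create() throws IOException {
        // createTempDirectory generates a unique name under java.io.tmpdir.
        return Files.createTempDirectory("ai-tool-");
    }

    static void delete(Path dir) throws IOException {
        // Walk the tree and delete children before their parents (reverse path order).
        try (Stream<Path> paths = Files.walk(dir)) {
            for (Path p : paths.sorted(Comparator.reverseOrder()).toList()) {
                Files.delete(p);
            }
        }
    }

    public static void main(String[] args) throws IOException {
        Path dir = create();
        try {
            Files.writeString(dir.resolve("result.json"), "{}");
            System.out.println("scratch=" + dir);
        } finally {
            delete(dir); // clean up even if the work above fails
        }
    }
}
```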

Second, you need to treat the shell boundary as a security boundary. Do not pass raw model text into a shell and hope for good behavior. Anthropic’s transparency reporting shows that command-line and external-tool scenarios remain a serious safety domain, which is exactly why the execution layer must be controlled.

The pattern that scales is mediation. The model does not get shell access directly. An orchestrator validates intent, maps it to an allowed command, executes it in a sandbox, captures output, redacts sensitive data if needed, and only then passes the result back into the model. That is where frameworks such as Spring AI or LangChain4j fit nicely in a Java architecture: not as the executor, but as the policy and orchestration layer.
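A minimal sketch of that mediation step, assuming the orchestrator receives a structured intent (a name plus one argument) rather than free-form shell text. The intent names, the allowlisted `git` commands, the argument pattern, and the redaction regex are hypothetical examples. The model never chooses the executable, the argument is validated before use, and the command is passed to `ProcessBuilder` as an argument list, so no shell interprets characters like `;` or `$(...)`. Sandboxing (containers, restricted users) is outside the scope of this sketch.

```java
import java.io.File;
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.util.List;
import java.util.Map;
import java.util.Optional;
import java.util.function.Function;
import java.util.regex.Pattern;

// Hypothetical mediation layer: the model picks an intent and an argument;
// the orchestrator decides which executable runs and how the argv is built.
class CommandMediator {

    // Illustrative allowlist for branch names and paths (not Git's full rules).
    private static final Pattern SAFE_ARG = Pattern.compile("[A-Za-z0-9._/-]{1,100}");

    // Intent name -> builder for the complete argument list.
    private static final Map<String, Function<String, List<String>>> ALLOWED = Map.of(
            "recent-commits", branch -> List.of("git", "log", "--oneline", "-n", "10", branch),
            "file-history", path -> List.of("git", "log", "--oneline", "--", path));

    static Optional<List<String>> toArgv(String intent, String argument) {
        Function<String, List<String>> builder = intent == null ? null : ALLOWED.get(intent);
        boolean valid = argument != null
                && SAFE_ARG.matcher(argument).matches()
                && !argument.startsWith("-"); // would otherwise be parsed as an option
        return (builder != null && valid) ? Optional.of(builder.apply(argument)) : Optional.empty();
    }

    // Masks key=value secrets before output is passed back into the model context.
    static String redact(String output) {
        return output.replaceAll("(?i)(token|password|secret)=\\S+", "$1=[REDACTED]");
    }

    // Executes an already-validated argv without a shell and captures combined output.
    static String run(List<String> argv, File workDir) throws IOException, InterruptedException {
        Process p = new ProcessBuilder(argv).directory(workDir).redirectErrorStream(true).start();
        String out = new String(p.getInputStream().readAllBytes(), StandardCharsets.UTF_8);
        p.waitFor();
        return redact(out);
    }
}
```

Anything that does not map to an allowlisted intent with a valid argument is simply refused, which is a much easier property to audit than "the model will probably not write a dangerous command."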

Third, you should think in terms of privilege tiers. A read-only research tool is not the same as an execution tool. A diagnostic agent should not be able to mutate infrastructure just because it can compose convincing commands. In multi-agent systems, that distinction becomes one of the main safety mechanisms.
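Privilege tiers can start as simply as tagging each tool and each agent role, then checking before dispatch. A sketch with hypothetical tool aliases and roles (`deploy` stands in for any mutating tool). An in-process check like this is only one layer; a real system would also enforce the split outside the process, for example with separate credentials or read-only mounts.

```java
import java.util.Map;
import java.util.Set;

// Hypothetical privilege tiers for tools and agent roles.
class PrivilegeCheck {

    enum Tier { READ_ONLY, MUTATING }

    record AgentRole(String name, Set<Tier> allowedTiers) {}

    // Each tool alias is tagged with the tier it requires.
    static final Map<String, Tier> TOOL_TIERS = Map.of(
            "sys-info", Tier.READ_ONLY,
            "log-filter", Tier.READ_ONLY,
            "deploy", Tier.MUTATING); // hypothetical mutating tool

    static boolean mayInvoke(AgentRole role, String toolAlias) {
        Tier required = toolAlias == null ? null : TOOL_TIERS.get(toolAlias);
        // Unknown tools are denied by default.
        return required != null && role.allowedTiers().contains(required);
    }

    public static void main(String[] args) {
        AgentRole diagnostics = new AgentRole("diagnostics", Set.of(Tier.READ_ONLY));
        AgentRole executor = new AgentRole("executor", Set.of(Tier.READ_ONLY, Tier.MUTATING));
        System.out.println(mayInvoke(diagnostics, "log-filter")); // true
        System.out.println(mayInvoke(diagnostics, "deploy"));     // false
        System.out.println(mayInvoke(executor, "deploy"));        // true
    }
}
```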

Where MCP Still Makes Sense

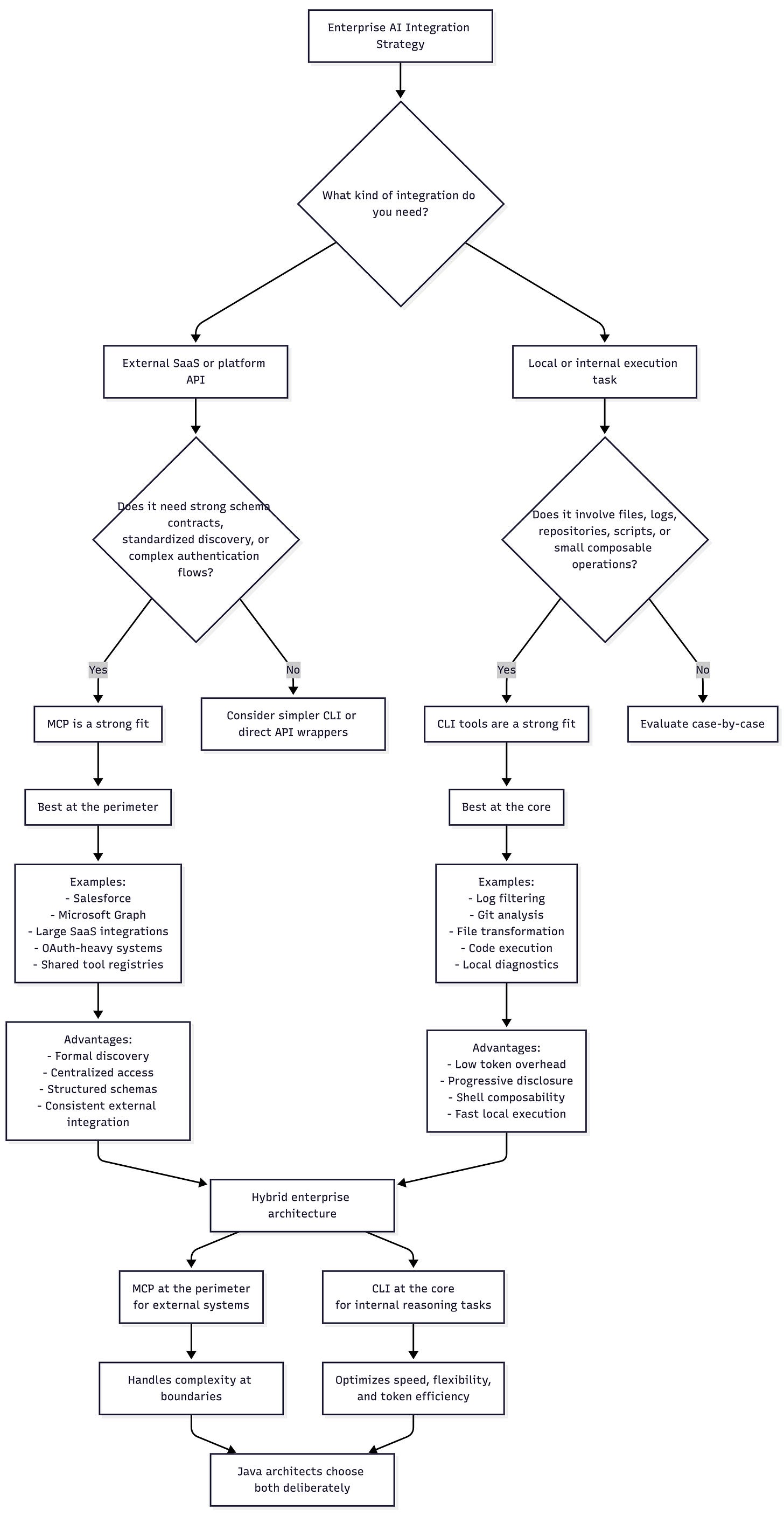

The right conclusion here is not “MCP is dead.” Even Reinhard’s article does not make that claim. He argues that CLI should be the default in many workflows, not the only pattern forever.

MCP still makes sense when you need strict schema-driven contracts, complex SaaS integrations, explicit capability negotiation, or a tool surface that has no natural command-line equivalent. Anthropic still positions MCP as an open standard for connecting agents to external tools and data, and there are good reasons that idea keeps gaining support.

The more realistic enterprise answer is hybrid.

Use MCP at the perimeter where the complexity of third-party systems, OAuth flows, multi-tenant permissioning, and formal discovery justify the extra protocol. Use CLI tools at the core where the agent needs fast, local, composable, token-efficient operations on files, logs, repositories, scripts, or internal utilities.

That hybrid view is much more useful for Java architects than a protocol war.

Verification

You can run the JBang script directly:

```bash
jbang ai-tool.java
```

JBang officially supports this single-file execution model, so no Maven project is required for the first iteration (jbang.dev).

Expected output:

```json
{
  "timestamp" : "2026-03-07T15:25:56.110634Z",
  "uptime" : 70,
  "processors" : 16,
  "maxMemory" : 17179869184
}
```

Now run the text mode:

```bash
jbang ai-tool.java --format text
```

Expected output:

```text
uptime=69
processors=16
maxMemory=17179869184
```

The important part is not the exact values. The important part is the contract. The command accepts a stable argument, returns a stable structure, and signals completion with a stable exit code.

That is what makes it usable by an agent.
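An orchestrator can check that contract mechanically. A minimal sketch, assuming `jbang` is on the `PATH`: it runs the tool as a child process, captures standard output, discards standard error (where JBang writes its own build messages), and returns the exit code alongside the output. The `ToolRun` record and its naive `looksLikeJsonObject` check are hypothetical; a real orchestrator would parse the output with a JSON library.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.util.List;

// Runs a CLI tool and captures the two things an agent relies on: stdout and the exit code.
class ContractCheck {

    record ToolRun(int exitCode, String stdout) {
        // Cheap structural check only; not a JSON parser.
        boolean looksLikeJsonObject() {
            String s = stdout.strip();
            return s.startsWith("{") && s.endsWith("}");
        }
    }

    static ToolRun run(List<String> command) throws IOException, InterruptedException {
        Process p = new ProcessBuilder(command)
                .redirectError(ProcessBuilder.Redirect.DISCARD) // keep diagnostics out of the parsed output
                .start();
        String out = new String(p.getInputStream().readAllBytes(), StandardCharsets.UTF_8);
        return new ToolRun(p.waitFor(), out);
    }

    public static void main(String[] args) throws Exception {
        ToolRun result = run(List.of("jbang", "ai-tool.java"));
        System.out.println("exit=" + result.exitCode() + " json=" + result.looksLikeJsonObject());
    }
}
```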

Conclusion

The industry started by assuming agents would integrate like API clients. That assumption is starting to crack. Protocols such as MCP give structure, but they also introduce schema overhead, token pressure, and a less natural execution model for many real workflows. CLI tools fit the agent loop much better: reason, run, inspect, refine. Reinhard’s examples and benchmarks make that case clearly, even if every team will end up with its own numbers.

For Java architects, this opens an opportunity. JBang turns Java into a zero-ceremony scripting environment. GraalVM Native Image gives Java an execution profile that fits high-frequency agent loops. Picocli gives you strong command boundaries. Put together, they give Java a serious role in the terminal-native AI stack.

The future is probably not MCP or CLI. It is MCP and CLI, used deliberately. But when you need the fastest, simplest, most agent-native path into an existing Java estate, the terminal is often the right integration surface.

Very interesting! I'm working on an API for submitting a certain kind of data, and have made several jbang+picocli scripts for testing, validating and inspecting the data and API behavior. Your article shows an additional value: the scripts are (with some small extra effort) also agent-friendly!

A small comment about the code example: with Java records and Jackson, you don't have to build an object node and call put; you can just serialize the record.