Control Planes for AI: The Missing Layer in Enterprise Java

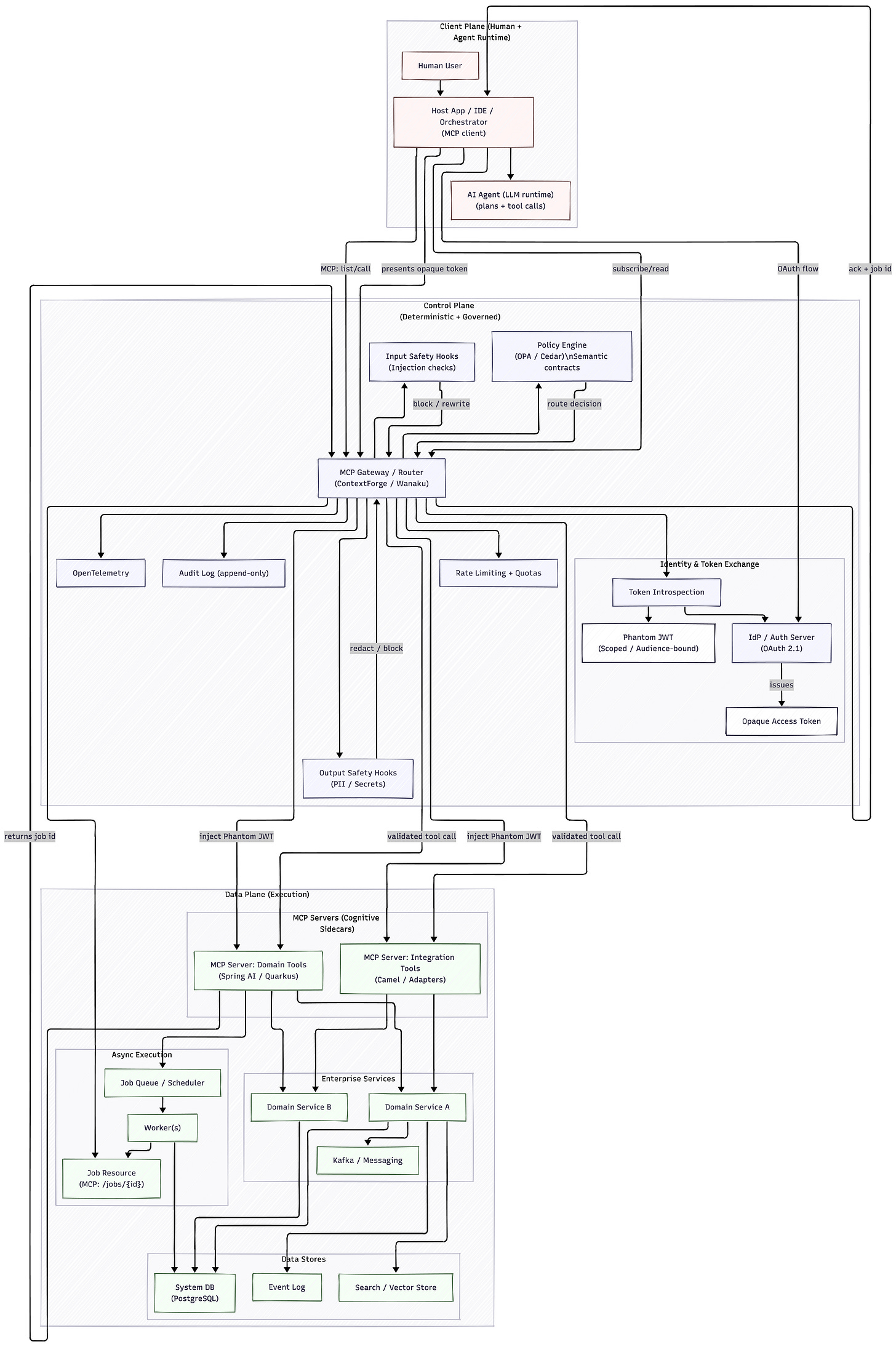

Designing deterministic routing, identity propagation, and secure MCP gateways for Spring and Quarkus platforms.

The enterprise software world is changing fast. For years, most companies treated artificial intelligence as a feature you could bolt onto the side of your application. A chatbot in a sidebar. A conversational helper that answers questions but never really acts. I call this “Mascot AI.” It looks impressive in demos. It does not change your architecture.

Mascot AI forces humans to stay in the loop as manual integration layers. You copy insights from the chat window. You paste them into your ERP. You run the report yourself. The AI gives advice. The system of record stays untouched. In production, this becomes friction. People context-switch all day. Errors creep in. Nothing is automated end-to-end.

What is happening now is different. We are moving from conversational AI to agentic AI. From one-shot generation to reasoning systems that plan, call tools, and execute multi-step workflows. And this changes architecture. The platform must treat the AI agent as a first-class citizen. Not a UI widget. A headless collaborator with programmatic access, like a CI runner or a service account.

This shift aligns with a deeper change in model capabilities. Early generative systems behave like “System 1” thinking. Fast, intuitive, single-step predictions. They are good at drafting text. They hallucinate under pressure. Agentic systems move toward “System 2” reasoning. They decompose tasks, call tools, validate outputs, and iterate. That requires infrastructure. Stateful execution. Clear contracts. Security boundaries. And this is where the Model Context Protocol (MCP) enters the picture. I have written before about how MCP works and how to approach it in enterprise Java systems. Today I am going to look beyond the technical implementation and deployment patterns.

From Chat Feature to Platform Primitive

The biggest problem in the old model is context switching. Vendors shipped proprietary chat UIs. They expected users to bridge the gap between AI suggestions and backend systems. The human became the integration layer.

This does not scale.

An AI-ready enterprise platform needs different primitives. The agent must discover capabilities dynamically. It must authenticate with short-lived credentials. It must call well-defined tools with structured schemas. And every action must be tied to a human identity.

For local experiments, a Model Context Protocol (MCP) server running over standard input and output is fine. This works well in an IDE. It works for coding assistants. It does not work for enterprise production. In production, you need remote-hosted MCP servers. You need an HTTP-based transport such as Server-Sent Events or Streamable HTTP. You need lifecycle management, audit logs, and centralized governance.

Three primitives are essential.

First, discoverable tool calling. Business logic must be packaged as explicit functions with JSON schemas. The model must understand not just the parameter types, but the intent. If a tool approves a loan, the schema should say what “approval” means in business terms. Not just boolean approved.

Second, structured context delivery. Dumping raw data into a prompt is a mistake. Token windows are finite. Latency increases with input size. Reasoning degrades with noise. The platform must expose summarized resources first, then allow drill-down. Context becomes a managed asset.

Third, identity and governance. Every autonomous action must be non-repudiable. The agent acts on behalf of a user. That relationship must be explicit and enforced. Otherwise, you create a new attack surface.

The Cognitive Sidecar

It is tempting to compare MCP servers to microservices. But that analogy is incomplete.

Microservices solved an organizational scaling problem. They allowed teams to build and deploy bounded contexts independently. MCP solves a contextual scaling problem. It allows non-human agents to discover and safely invoke those bounded contexts without custom integration code for every model.

The MCP server becomes a cognitive sidecar. It translates deterministic APIs into semantic contracts. It does not replace your Spring Boot or Quarkus services. It wraps them in a language the model can understand.

This matters for the Java ecosystem. There is a misconception that AI integration belongs to Python. That is not true. MCP is language-agnostic. If your enterprise runs on Spring Boot, Quarkus, Jakarta EE, or even legacy Java stacks, you can expose your domain as agent-callable infrastructure.

Spring AI already supports MCP integration through its Java SDK. You can annotate existing service methods with @McpTool. The framework inspects the method signature, generates JSON schemas, and handles serialization. Behind the scenes, it converts model-generated JSON into strongly typed Java objects and back. If the model sends an invalid parameter, the framework returns a structured protocol error. The model can then self-correct.
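To make the "inspect the signature, generate a schema" step concrete, here is a minimal sketch in plain Java reflection. It is not how Spring AI or Quarkus implement it. The annotations AgentTool and AgentParam, the BookingService class, and SchemaGenerator are all hypothetical names I made up for illustration. The only thing borrowed from MCP is the shape of a tool definition: a name, a description, and an inputSchema.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.lang.reflect.Method;
import java.lang.reflect.Parameter;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical annotations for illustration; NOT the Spring AI or Quarkus ones.
@Retention(RetentionPolicy.RUNTIME)
@Target(ElementType.METHOD)
@interface AgentTool { String description(); }

@Retention(RetentionPolicy.RUNTIME)
@Target(ElementType.PARAMETER)
@interface AgentParam { String name(); String description(); }

// Hypothetical existing service whose method we want to expose.
class BookingService {
    @AgentTool(description = "Cancel a confirmed booking and release its seat. Irreversible.")
    String cancelBooking(
            @AgentParam(name = "bookingId", description = "Booking reference") String bookingId,
            @AgentParam(name = "notifyCustomer", description = "Send a cancellation email") boolean notifyCustomer) {
        return "cancelled:" + bookingId;
    }
}

class SchemaGenerator {
    // Builds a tool description from the annotated method's signature.
    // Assumes every parameter carries an @AgentParam annotation.
    static Map<String, Object> describe(Class<?> type, String methodName) {
        for (Method m : type.getDeclaredMethods()) {
            AgentTool tool = m.getAnnotation(AgentTool.class);
            if (tool == null || !m.getName().equals(methodName)) continue;
            Map<String, Object> properties = new LinkedHashMap<>();
            for (Parameter p : m.getParameters()) {
                AgentParam meta = p.getAnnotation(AgentParam.class);
                properties.put(meta.name(), Map.of(
                        "type", jsonType(p.getType()),
                        "description", meta.description()));
            }
            Map<String, Object> schema = new LinkedHashMap<>();
            schema.put("name", m.getName());
            schema.put("description", tool.description());
            schema.put("inputSchema", Map.of("type", "object", "properties", properties));
            return schema;
        }
        throw new IllegalArgumentException("No annotated tool named " + methodName);
    }

    // Maps a small set of Java types to JSON Schema type names.
    private static String jsonType(Class<?> c) {
        if (c == boolean.class || c == Boolean.class) return "boolean";
        if (c == String.class) return "string";
        if (Number.class.isAssignableFrom(c) || c == int.class || c == long.class || c == double.class) return "number";
        return "object";
    }
}
```

Calling SchemaGenerator.describe(BookingService.class, "cancelBooking") yields a map the model can read. The description carries the business meaning, including the fact that the action is irreversible.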

Quarkus offers similar capabilities through its MCP server extensions. In high-performance environments, you can expose Panache-backed search methods as tools. You secure them with OIDC. You propagate tokens downstream. The cognitive sidecar remains secure by default.

The key idea is simple: your existing domain logic becomes agent-callable without rewriting the world.

Domain-Driven Design for Probabilistic Consumers

Traditional APIs were built for deterministic clients. A mobile app calls /orders/123. A backend service posts to /payments. The execution flow is scripted.

An agent is different. It plans. It selects tools dynamically. It may retry operations. It may explore multiple paths.

This changes Domain-Driven Design.

The Ubiquitous Language is no longer just a shared vocabulary between developers and domain experts. It becomes a semantic contract for the model. The schema descriptions in your MCP tools must encode intent, constraints, and side effects. The model does not read your JavaDocs. It reads the schema.

You must not expose raw CRUD operations. Giving an agent a generic updateOrderStatus tool with arbitrary fields is dangerous. Hallucinated parameters can corrupt data. Instead, expose intent-based commands. approveLoanApplication. cancelBooking. generateInvoice. Inside these methods, you enforce invariants and transaction boundaries.
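A minimal sketch of what an intent-based command can look like, in plain Java without any MCP framework. The types LoanApplication, LoanStatus, and LoanCommands, and the approval limit of 50000, are assumptions for illustration. The point is the shape: the agent passes an identifier, and the method decides what is allowed.

```java
import java.math.BigDecimal;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical domain types; not part of any MCP SDK.
enum LoanStatus { SUBMITTED, APPROVED, REJECTED }

record LoanApplication(String id, BigDecimal amount, LoanStatus status) {}

class LoanCommands {
    // Assumed business rule for the example.
    private static final BigDecimal AUTO_APPROVAL_LIMIT = new BigDecimal("50000");
    private final Map<String, LoanApplication> store = new ConcurrentHashMap<>();

    void submit(String id, BigDecimal amount) {
        store.put(id, new LoanApplication(id, amount, LoanStatus.SUBMITTED));
    }

    // Intent-based command: the only input is the application id.
    // There is no generic status or field parameter for a hallucinated value to land in.
    LoanApplication approveLoanApplication(String id) {
        LoanApplication app = store.get(id);
        if (app == null) {
            throw new IllegalArgumentException("Unknown application: " + id);
        }
        // Invariant: only a submitted application can be approved.
        if (app.status() != LoanStatus.SUBMITTED) {
            throw new IllegalStateException("Only SUBMITTED applications can be approved");
        }
        // Invariant: large amounts need a human decision.
        if (app.amount().compareTo(AUTO_APPROVAL_LIMIT) > 0) {
            throw new IllegalStateException("Amount exceeds limit; needs human review");
        }
        LoanApplication approved = new LoanApplication(id, app.amount(), LoanStatus.APPROVED);
        store.put(id, approved);
        return approved;
    }
}
```

Compare this with a generic updateOrderStatus(id, fields) tool. There, every rule above would have to live in the prompt. Here, the rules live in the code, where they can be tested.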

Idempotency becomes critical. Models retry. Networks fail. If an agent calls processPayment twice, you must not charge the customer twice. Every domain command exposed via MCP must be either naturally idempotent or protected by a unique idempotency key that lets the server recognize a repeated request and return the original result.
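One way to protect processPayment against retries is an idempotency key supplied by the caller. The sketch below keeps results in memory, which is an assumption made to keep it short. A real system would need a durable store and would also reject a reused key that arrives with a different amount. PaymentService and PaymentResult are hypothetical names.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;

record PaymentResult(String paymentId, long amountCents) {}

class PaymentService {
    // In-memory for the sketch; production needs a durable store.
    private final Map<String, PaymentResult> resultsByKey = new ConcurrentHashMap<>();
    private final AtomicInteger charges = new AtomicInteger();

    // computeIfAbsent runs the charge at most once per key,
    // even when retries for the same key arrive concurrently.
    PaymentResult processPayment(String idempotencyKey, long amountCents) {
        return resultsByKey.computeIfAbsent(idempotencyKey, key -> charge(amountCents));
    }

    // Stand-in for the real side effect (calling the payment provider).
    private PaymentResult charge(long amountCents) {
        int sequence = charges.incrementAndGet();
        return new PaymentResult("pay-" + sequence, amountCents);
    }

    int chargeCount() {
        return charges.get();
    }
}
```

A retry with the same key returns the stored result. The customer is charged once, no matter how many times the agent calls the tool.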

Context must be lazy-loaded. Start with summaries. Let the agent drill down when needed. This keeps token usage under control and improves reasoning accuracy.
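A small sketch of summary-first context delivery. The record and class names (Order, OrderLine, OrderSummary, OrderContext) are hypothetical. In an MCP server the two methods would typically back a list-style resource and a detail resource or tool. Here they are plain Java so the idea stays visible.

```java
import java.util.List;

record OrderLine(String sku, int quantity, long priceCents) {}

record Order(String id, String customer, List<OrderLine> lines) {
    long totalCents() {
        return lines.stream().mapToLong(l -> l.quantity() * l.priceCents()).sum();
    }
}

// The compact view the agent sees first: a few fields, no line items.
record OrderSummary(String id, int lineCount, long totalCents) {}

class OrderContext {
    private final List<Order> orders;

    OrderContext(List<Order> orders) {
        this.orders = List.copyOf(orders);
    }

    // Step 1: cheap overview that fits comfortably in the context window.
    List<OrderSummary> summaries() {
        return orders.stream()
                .map(o -> new OrderSummary(o.id(), o.lines().size(), o.totalCents()))
                .toList();
    }

    // Step 2: full detail, loaded only for the order the agent asks about.
    Order details(String orderId) {
        return orders.stream()
                .filter(o -> o.id().equals(orderId))
                .findFirst()
                .orElseThrow(() -> new IllegalArgumentException("Unknown order: " + orderId));
    }
}
```

The agent pays tokens for line items only when it decides it needs them.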

Event sourcing becomes interesting here. When system state is an immutable sequence of events, an agent can inspect history, understand causality, and maintain traceability. This is powerful for debugging multi-agent workflows and for compliance.
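A minimal sketch of the idea, assuming an in-memory append-only log. ClaimEvent and ClaimEventLog are hypothetical names, and the actor field is my assumption about how to record who acted. A real event store would persist events and handle ordering and concurrency properly.

```java
import java.time.Instant;
import java.util.ArrayList;
import java.util.List;

// One immutable fact. The actor field records who caused it, human or agent.
record ClaimEvent(String claimId, String type, String actor, Instant at) {}

class ClaimEventLog {
    private final List<ClaimEvent> events = new ArrayList<>();

    // Events are only ever appended; nothing is updated or deleted.
    synchronized void append(ClaimEvent event) {
        events.add(event);
    }

    // What an agent (or an auditor) can inspect: the full ordered history of one claim.
    synchronized List<ClaimEvent> history(String claimId) {
        return events.stream().filter(e -> e.claimId().equals(claimId)).toList();
    }

    // Current state is derived from history, here simply the type of the latest event.
    String currentStatus(String claimId) {
        List<ClaimEvent> history = history(claimId);
        return history.isEmpty() ? "UNKNOWN" : history.get(history.size() - 1).type();
    }
}
```

Because every event names its actor, you can answer "which agent did this, and on whose behalf" from the log alone.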

Asynchronous Reality

Language models expect responses. Cloud infrastructure enforces timeouts. Many enterprise operations take minutes or hours.

You cannot block a connection for 20 minutes waiting for a risk simulation.

MCP defines asynchronous patterns. Instead of blocking, the server acknowledges the request and returns a job identifier. The client subscribes to a resource. Progress updates are emitted as structured notifications. The agent sees percentage complete, intermediate results, and status transitions.

Cancellation is equally important. If the user changes direction, the agent can send a cancellation request. The backend stops the job. Resources are released. This prevents runaway background tasks.
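The server side of this pattern can be sketched with nothing but java.util.concurrent. This is not the MCP wire protocol. It only shows the three moves: return a job identifier immediately, expose progress, and honor cancellation. JobRegistry and its methods are hypothetical, and the sleeping loop stands in for real work such as a risk simulation.

```java
import java.util.Map;
import java.util.UUID;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.concurrent.atomic.AtomicReference;

class JobRegistry {
    enum Status { RUNNING, COMPLETED, CANCELLED }
    record Snapshot(Status status, int percent) {}

    private static final class Job {
        final AtomicReference<Status> status = new AtomicReference<>(Status.RUNNING);
        final AtomicInteger percent = new AtomicInteger();
        volatile Future<?> future;
    }

    private final Map<String, Job> jobs = new ConcurrentHashMap<>();
    // Daemon threads so abandoned jobs never keep the JVM alive.
    private final ExecutorService pool = Executors.newCachedThreadPool(r -> {
        Thread t = new Thread(r, "job-worker");
        t.setDaemon(true);
        return t;
    });

    // Returns at once with a job id; the work continues in the background.
    String start(int steps, long millisPerStep) {
        String id = UUID.randomUUID().toString();
        Job job = new Job();
        jobs.put(id, job);
        job.future = pool.submit(() -> {
            try {
                for (int i = 1; i <= steps; i++) {
                    Thread.sleep(millisPerStep); // stand-in for one unit of real work
                    job.percent.set(i * 100 / steps);
                }
                // Only wins if nobody cancelled in the meantime.
                job.status.compareAndSet(Status.RUNNING, Status.COMPLETED);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt(); // cancelled: stop working
            }
        });
        return id;
    }

    // What a progress notification would be built from.
    Snapshot snapshot(String id) {
        Job job = require(id);
        return new Snapshot(job.status.get(), job.percent.get());
    }

    // Returns true only for the call that actually cancelled a running job.
    boolean cancel(String id) {
        Job job = require(id);
        boolean cancelled = job.status.compareAndSet(Status.RUNNING, Status.CANCELLED);
        if (cancelled) job.future.cancel(true);
        return cancelled;
    }

    private Job require(String id) {
        Job job = jobs.get(id);
        if (job == null) throw new IllegalArgumentException("Unknown job: " + id);
        return job;
    }
}
```

The compare-and-set on the status makes the first transition win. A job is either completed or cancelled, never both.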

This pattern aligns well with reactive stacks in Spring and Quarkus. It keeps the data plane stable while serving dynamic, probabilistic clients.

The Routing Latency Paradox

In classic distributed systems, we embraced “smart endpoints and dumb pipes.” With AI, the pipe itself can reason. A model could inspect payloads and decide where to route them.

This sounds attractive. It is also dangerous.

Every routing decision would require inference. That adds latency. It introduces non-determinism. In regulated environments, you cannot have routing decisions change because of a slightly different prompt phrasing.

The solution is a deterministic Control Plane.

Separate your system into a Data Plane and a Control Plane. The Data Plane contains your Java services, databases, Kafka brokers. The Control Plane enforces routing, authentication, and rate limits.

An MCP router in the Control Plane intercepts all agent requests. It applies semantic contracts defined as policy-as-code. It decides which backend tools are reachable. Routing becomes deterministic and fast. The model focuses on reasoning within a bounded sandbox.
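Deterministic routing can be as boring as a table lookup. The sketch below assumes a static policy that maps tool names to a backend and a set of allowed roles. McpRouter, ToolRoute, the role names, and the backend URL are hypothetical. A real deployment would load the policy from versioned configuration rather than build it in code.

```java
import java.util.Map;
import java.util.Optional;
import java.util.Set;

// One policy entry: where a tool lives and who may reach it.
record ToolRoute(String backend, Set<String> allowedRoles) {}

class McpRouter {
    private final Map<String, ToolRoute> routes;

    McpRouter(Map<String, ToolRoute> routes) {
        this.routes = Map.copyOf(routes);
    }

    // Pure lookup: the same tool name and roles always produce the same answer.
    // No model inference is involved, so there is no prompt-dependent behavior.
    Optional<String> resolve(String toolName, Set<String> callerRoles) {
        ToolRoute route = routes.get(toolName);
        if (route == null) {
            return Optional.empty(); // unknown tools are unreachable by default
        }
        boolean permitted = route.allowedRoles().stream().anyMatch(callerRoles::contains);
        return permitted ? Optional.of(route.backend()) : Optional.empty();
    }
}
```

The model can ask for any tool it likes. The router answers from policy, in constant time, and the answer does not change with the wording of the prompt.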

This resolves the routing latency paradox. You do not ask a billion-parameter model to act as a load balancer.

Gateway Virtualization

Standard API gateways are not enough. MCP traffic includes dynamic schema discovery, tool registration, and streaming interactions.

Specialized gateways have emerged.

Wanaku, built on Quarkus and Apache Camel, provides a native path for Java-heavy organizations. It can expose existing Camel routes as MCP tools through YAML configuration. No new Java code required. The agent can query the live Camel catalog, validate URIs, and interact with legacy systems through structured tools.

ContextForge acts as a federated AI gateway. It can ingest OpenAPI specifications and wrap them as MCP interfaces. It supports multi-cluster federation, Redis-backed caching, and safety hooks. Pre- and post-execution guards can detect prompt injection attempts or data exfiltration. The Control Plane enforces safety before the request reaches the Data Plane.

Both approaches share a common idea: virtualize legacy systems as agent-callable capabilities without rewriting them.

Identity and the Confused Deputy

Autonomous agents introduce new attack vectors. The most critical is the Confused Deputy problem. If the agent holds broad system credentials, a malicious prompt can trick it into performing privileged actions.

The fix is identity-aware MCP servers with strict token propagation.

Use OAuth 2.1. The client obtains an opaque reference token. The AI agent never sees a full JWT with sensitive claims. The API gateway exchanges the opaque token via introspection and injects a short-lived JWT upstream. This is the Phantom Token pattern.

The backend validates the JWT. It enforces role-based access control. A basic user cannot trigger administrative tools. Even if the model attempts it, the call fails.
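The flow can be sketched in plain Java. Everything here is a simplification. InternalToken is a stand-in for a signed JWT, the session map is a stand-in for an introspection call to the authorization server, and the 60-second lifetime is an assumed value. The names PhantomTokenGateway, Identity, and ToolBackend are hypothetical.

```java
import java.time.Duration;
import java.time.Instant;
import java.util.Map;
import java.util.Optional;
import java.util.Set;
import java.util.UUID;

record Identity(String subject, Set<String> roles) {}

// Stand-in for a signed JWT; a real gateway would sign it and the backend would verify the signature.
record InternalToken(String tokenId, String subject, Set<String> roles, Instant expiresAt) {}

class PhantomTokenGateway {
    private static final Duration TTL = Duration.ofSeconds(60); // assumed lifetime
    // Stand-in for introspection: opaque token -> identity known to the authorization server.
    private final Map<String, Identity> activeSessions;

    PhantomTokenGateway(Map<String, Identity> activeSessions) {
        this.activeSessions = Map.copyOf(activeSessions);
    }

    // The agent only ever holds the opaque token. The claims are attached here, at the edge.
    Optional<InternalToken> exchange(String opaqueToken, Instant now) {
        Identity identity = activeSessions.get(opaqueToken);
        if (identity == null) {
            return Optional.empty(); // unknown or revoked token
        }
        return Optional.of(new InternalToken(
                UUID.randomUUID().toString(), identity.subject(), identity.roles(), now.plus(TTL)));
    }
}

class ToolBackend {
    // The backend decides, not the model: token must be unexpired and carry the required role.
    static boolean authorize(InternalToken token, String requiredRole, Instant now) {
        return now.isBefore(token.expiresAt()) && token.roles().contains(requiredRole);
    }
}
```

If the model asks for an administrative tool on behalf of a basic user, authorize returns false. The prompt never enters the decision.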

Tokens must expire. They must include unique identifiers. They must be revocable. Credentials must never leak into prompt context. Anything in the context window is exposed.

Network isolation is equally important. Gateways must block server-side request forgery by default. Untrusted MCP servers must run in hardened containers or micro-VMs. The Control Plane becomes a zero-trust environment.

Industry Momentum

This is no longer theoretical.

In insurance, companies are virtualizing mainframe capabilities as MCP tools. Autonomous claims agents file documents, trigger workflows, and update systems. Underwriting agents query rating engines and compute risk scenarios. Humans move into supervisory roles.

In finance and automotive, similar patterns emerge. Conferences across Europe now dedicate tracks to agentic Domain-Driven Design, event sourcing for AI systems, and secure AI integration in CI pipelines. The conversation has shifted from experiments to production.

The Java ecosystem is part of this shift. Spring, Quarkus, and Jakarta EE applications are becoming AI infrastructure. Not by rewriting everything. By exposing well-defined semantic contracts through MCP.

Conclusion

The era of Mascot AI is ending. Enterprise platforms must treat AI agents as first-class, headless collaborators with secure, governed access to domain capabilities.

The Model Context Protocol provides the vocabulary and integration layer. But the protocol alone is not enough. You need intent-based tools, idempotent domain commands, asynchronous execution models, deterministic Control Planes, and identity propagation through patterns like Phantom Tokens.

The organizations that succeed will not be those who embed a large language model into a UI. They will be those who build secure semantic bridges between probabilistic reasoning engines and deterministic enterprise Java systems.