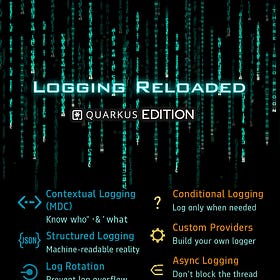

The Quarkus Logging Files

From MDC correlation IDs to structured JSON and async logging. Build logs that actually help when production goes down.

It was a Tuesday night when production went dark. Three thousand users. One crashed order service. A bug report that said only: “It’s broken.”

The on-call engineer had five minutes, a cold coffee, and one weapon left: the logs.

Most developers think logging is about printing messages. Add LOG.info("Order received"), push to Git, move on. It works in dev. It compiles. It looks fine.

Then production happens.

You get twelve concurrent requests, three threads, one exception. Logs interleave. No correlation IDs. No context. Framework noise everywhere. Hibernate screaming. Kafka chatting. Your own code whispering useless things like “Done.”

The problem is not that logging is missing. The problem is that logging without structure becomes noise under pressure. And noise at 2am is worse than silence.

In this tutorial, we don’t configure properties for fun. We build an order service. We instrument it properly. We break it. We observe it. We fix it. And we walk away with a logging setup that survives production.

By the end, you will understand:

JBoss Logging vs io.quarkus.logging.Log

Log levels and category filtering

JSON output with ECS format

MDC correlation IDs

Named handlers for audit logs

Async logging and backpressure trade-offs

And you’ll never write “Order received” again.

Prerequisites

You need a working Quarkus setup. I assume you already build services in production.

Java 21 installed

Maven 3.9+

Quarkus CLI or Maven wrapper

Basic understanding of REST endpoints

Project Setup

Create the project or start from my GitHub repository.

    mvn io.quarkus.platform:quarkus-maven-plugin:create \
        -DprojectGroupId=com.noir \
        -DprojectArtifactId=logging-order-service \
        -Dextensions="rest,rest-jackson,io.quarkus:quarkus-logging-json"
    cd logging-order-service

Extensions explained:

rest – REST endpoints

rest-jackson – JSON serialization

logging-json – structured JSON output for production

With the logging-json dependency on the classpath, console logging switches to JSON automatically. We do not want this in dev and test, so we switch back to a human-readable format by adding the following lines to application.properties:

    %dev.quarkus.log.console.json.enabled=false
    %test.quarkus.log.console.json.enabled=false

Start dev mode:

    ./mvnw quarkus:dev

You should see something like:

    INFO [io.quarkus] (Quarkus Main Thread) logging-order-service 1.0.0-SNAPSHOT on JVM (powered by Quarkus 3.31.3) started in 0.874s. Listening on: http://localhost:8080

Good. The crime scene is ready.

Implementation

We build a minimal but realistic order service with:

OrderResource

OrderRequest

PaymentService

AuditService

CorrelationFilter

Everything compiles. Nothing hand-wavy.

The Request Model

Start with a proper request object.

    package com.noir;

    public record OrderRequest(String orderId, String userId, double amount) {
    }

OrderResource

Now the entry point.

    package com.noir;

    import com.noir.audit.AuditService;
    import com.noir.payments.PaymentService;

    import jakarta.enterprise.context.ApplicationScoped;
    import jakarta.inject.Inject;
    import jakarta.ws.rs.Consumes;
    import jakarta.ws.rs.POST;
    import jakarta.ws.rs.Path;
    import jakarta.ws.rs.Produces;
    import jakarta.ws.rs.core.MediaType;
    import jakarta.ws.rs.core.Response;

    import org.jboss.logging.Logger;

    @Path("/orders")
    @ApplicationScoped
    public class OrderResource {

        private static final Logger LOG =
                Logger.getLogger(OrderResource.class);

        @Inject
        PaymentService paymentService;

        @Inject
        AuditService auditService;

        @POST
        @Consumes(MediaType.APPLICATION_JSON)
        @Produces(MediaType.APPLICATION_JSON)
        public Response placeOrder(OrderRequest request) {
            // Records expose their components through accessor methods, not fields.
            LOG.infof("Order received: orderId=%s userId=%s amount=%.2f",
                    request.orderId(), request.userId(), request.amount());

            paymentService.charge(request.orderId(), request.amount());
            auditService.auditOrder(request.orderId(), request.userId());

            LOG.debugf("Order processing complete: orderId=%s",
                    request.orderId());

            return Response.ok().build();
        }
    }

This already teaches something important.

We log:

At INFO for business events

At DEBUG for lifecycle details

We do not log sensitive data like payment tokens. That matters later.

PaymentService

We use io.quarkus.logging.Log for zero boilerplate.

    package com.noir.payments;

    import io.quarkus.logging.Log;

    import jakarta.enterprise.context.ApplicationScoped;

    @ApplicationScoped
    public class PaymentService {

        public void charge(String orderId, double amount) {
            Log.infof("Initiating payment: orderId=%s amount=%.2f",
                    orderId, amount);

            if (amount > 10000) {
                Log.warnf("Large transaction flagged: orderId=%s amount=%.2f",
                        orderId, amount);
            }

            // The check rejects negative amounts only; zero passes through.
            if (amount < 0) {
                Log.errorf("Invalid amount: orderId=%s", orderId);
                throw new IllegalArgumentException("Amount must not be negative");
            }

            Log.infof("Payment authorised: orderId=%s", orderId);
        }
    }

What this guarantees:

Log category equals the class name.

What it does not guarantee:

Correlation across requests. If ten requests run concurrently, logs interleave.

We fix that next.

AuditService with Named Logger

Audit logs should not mix with application logs.

    package com.noir.audit;

    import org.jboss.logging.Logger;

    import io.quarkus.arc.log.LoggerName;

    import jakarta.enterprise.context.ApplicationScoped;
    import jakarta.inject.Inject;

    @ApplicationScoped
    public class AuditService {

        @Inject
        @LoggerName("com.noir.audit")
        Logger auditLogger;

        public void auditOrder(String orderId, String userId) {
            auditLogger.infof("AUDIT orderId=%s userId=%s",
                    orderId, userId);
        }
    }

This logger uses a specific category. Later we route it to a separate file.

This is not cosmetic. This is compliance.

MDC Correlation Filter

Now the important part.

    package com.noir.logging;

    import java.io.IOException;
    import java.util.UUID;

    import org.jboss.logging.MDC;

    import jakarta.annotation.Priority;
    import jakarta.ws.rs.Priorities;
    import jakarta.ws.rs.container.ContainerRequestContext;
    import jakarta.ws.rs.container.ContainerRequestFilter;
    import jakarta.ws.rs.ext.Provider;

    @Provider
    @Priority(Priorities.USER - 100)
    public class CorrelationFilter implements ContainerRequestFilter {

        @Override
        public void filter(ContainerRequestContext context) throws IOException {
            // Reuse the caller's trace ID if present, otherwise start a new one.
            String traceId = context.getHeaderString("X-Trace-Id");
            if (traceId == null) {
                traceId = UUID.randomUUID().toString();
            }

            MDC.put("traceId", traceId);
            MDC.put("path", context.getUriInfo().getPath());
        }
    }

MDC is thread-local context.

Every log line on that request thread now carries traceId.

Without this, debugging concurrent failures becomes guesswork.

With this, you filter by one ID and see the entire request path.
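The filter above accepts whatever the client sends in X-Trace-Id and only falls back to a UUID when the header is missing. Since that value ends up in every log line, it may be worth validating it first. The following is a minimal sketch under assumptions of my own: the `TraceIds` class, the 64-character limit, and the allowed character set are all hypothetical and not part of the original service.

```java
import java.util.UUID;

// Hypothetical helper: decides which trace ID to put into the MDC.
final class TraceIds {

    // Arbitrary illustrative limit; pick one that matches your ID format.
    private static final int MAX_LENGTH = 64;

    // Returns the client-supplied ID if it looks safe, otherwise a fresh UUID.
    static String resolve(String headerValue) {
        if (headerValue == null || headerValue.isBlank()) {
            return UUID.randomUUID().toString();
        }
        String cleaned = headerValue.strip();
        // Rejecting anything outside a small alphabet keeps line breaks
        // and other control characters out of the log output.
        if (cleaned.length() > MAX_LENGTH || !cleaned.matches("[A-Za-z0-9._-]+")) {
            return UUID.randomUUID().toString();
        }
        return cleaned;
    }
}
```

In the filter, the null check could then be replaced by something like `String traceId = TraceIds.resolve(context.getHeaderString("X-Trace-Id"));`.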

Configuration

Now we wire logging properly in src/main/resources/application.properties.

Log Levels and Categories

Add the following configuration to application.properties:

    # Global default
    quarkus.log.level=INFO

    # Our code more verbose
    quarkus.log.category."com.noir".level=DEBUG

    # Silence framework noise
    quarkus.log.category."org.hibernate".level=WARN
    quarkus.log.category."io.quarkus.resteasy.reactive".level=WARN

    # Enable TRACE compilation
    quarkus.log.min-level=TRACE

Important detail:

If you do not set quarkus.log.min-level=TRACE, TRACE calls can be compiled out.

This is build-time behavior. Not runtime filtering.

Human-Friendly Format in Dev

    %dev.quarkus.log.console.format=%d{HH:mm:ss.SSS} %-5p [%c{1.}] [traceId=%X{traceId}] (%t) %s%e%n
    %dev.quarkus.console.color=true

Now logs look like:

    10:22:01.045 INFO [c.n.p.PaymentService] [traceId=a3f9e12b] (executor-thread-1) Initiating payment

Readable. Correlated. Thread visible.

JSON in Production

    %prod.quarkus.log.console.json.enabled=true
    %prod.quarkus.log.console.json.pretty-print=false
    %prod.quarkus.log.console.json.log-format=ecs

In production, output becomes structured JSON ready for OpenSearch or Loki.

This is machine-readable. Queryable. Aggregatable.

Named Handler for Audit Logs

    quarkus.log.handler.file.audit.enable=true
    quarkus.log.handler.file.audit.path=target/log/order-service/audit.log
    quarkus.log.handler.file.audit.level=INFO
    quarkus.log.handler.file.audit.format=\
        %d{yyyy-MM-dd'T'HH:mm:ss.SSSZ} AUDIT [%X{traceId}] %s%n
    quarkus.log.category."com.noir.audit".handlers=audit
    quarkus.log.category."com.noir.audit".use-parent-handlers=false

Audit logs now go to a separate file.

This separation protects:

Performance

Compliance

Investigations

Async Logging

    quarkus.log.console.async.enabled=true
    quarkus.log.console.async.queue-length=512
    quarkus.log.console.async.overflow=DISCARD

Under load, logging no longer blocks request threads.

Trade-off:

DISCARD drops logs if queue fills

BLOCK slows requests

For audit logs, never use DISCARD.
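To make the trade-off concrete, here is a small sketch that models the two overflow policies with a plain bounded queue from the JDK. It is not how Quarkus implements its async handler; the `OverflowSketch` class and its method names are hypothetical and only illustrate the semantics of dropping versus waiting.

```java
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.BlockingQueue;

// Hypothetical model of a bounded log queue; not the Quarkus implementation.
final class OverflowSketch {

    // DISCARD-like behavior: offer() returns false immediately when the
    // queue is full, so the record is lost but the caller never waits.
    // No consumer drains the queue here, which simulates a stalled writer.
    static int discardedWithoutConsumer(int queueLength, int records) {
        BlockingQueue<String> queue = new ArrayBlockingQueue<>(queueLength);
        int dropped = 0;
        for (int i = 0; i < records; i++) {
            if (!queue.offer("record-" + i)) {
                dropped++;
            }
        }
        return dropped;
    }

    // BLOCK-like behavior: put() waits until space is available, so no
    // record is lost but the calling (request) thread stalls meanwhile.
    static void enqueueBlocking(BlockingQueue<String> queue, String record)
            throws InterruptedException {
        queue.put(record);
    }
}
```

With a queue length of 512 and a writer that has stalled, a burst of 600 records loses everything beyond the first 512 under the discarding policy. Under the blocking policy the same burst would instead hold up the request threads until the writer catches up.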

Verification

Use these curl commands to hit POST /orders (JSON body: orderId, userId, amount):

    # Basic order
    curl -X POST http://localhost:8080/orders \
      -H "Content-Type: application/json" \
      -d '{"orderId":"ord-001","userId":"user-42","amount":99.99}'

    # Another payload
    curl -X POST http://localhost:8080/orders \
      -H "Content-Type: application/json" \
      -d '{"orderId":"ord-abc","userId":"alice","amount":0.50}'

    # With trace ID (the CorrelationFilter reads X-Trace-Id)
    curl -X POST http://localhost:8080/orders \
      -H "Content-Type: application/json" \
      -H "X-Trace-Id: my-trace-123" \
      -d '{"orderId":"ord-trace","userId":"bob","amount":123.45}'

    # Verbose (see response headers and status)
    curl -v -X POST http://localhost:8080/orders \
      -H "Content-Type: application/json" \
      -d '{"orderId":"ord-001","userId":"user-42","amount":99.99}'

You should see correlated output:

    INFO [com.noi.OrderResource] [traceId=my-trace-123] (executor-thread-1) Order received: orderId=ord-trace userId=bob amount=123.45
    INFO [com.noi.pay.PaymentService] [traceId=my-trace-123] (executor-thread-1) Initiating payment: orderId=ord-trace amount=123.45
    INFO [com.noi.pay.PaymentService] [traceId=my-trace-123] (executor-thread-1) Payment authorised: orderId=ord-trace
    DEBUG [com.noi.OrderResource] [traceId=my-trace-123] (executor-thread-1) Order processing complete: orderId=ord-trace

Now test concurrency:

    for i in {1..20}; do
      curl -X POST http://localhost:8080/orders \
        -H "Content-Type: application/json" \
        -d "{\"orderId\":\"ORD-$i\",\"userId\":\"u$i\",\"amount\":100}" &
    done
    wait

Pick one traceId. Filter logs. You see the full lifecycle of exactly one order.

That is the difference between noisy logging and production logging.
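Filtering by trace ID is usually done in a log viewer or with a text search, but the idea fits in a few lines of Java. This is a minimal sketch, assuming the text format used in this tutorial, where each line contains a `[traceId=...]` segment. The `TraceFilter` class is a hypothetical helper, not part of the service.

```java
import java.util.List;

// Hypothetical helper: selects the log lines that belong to one request.
final class TraceFilter {

    // Keeps only the lines that carry the given trace ID marker,
    // preserving their original order.
    static List<String> linesForTrace(List<String> lines, String traceId) {
        String marker = "[traceId=" + traceId + "]";
        return lines.stream()
                .filter(line -> line.contains(marker))
                .toList();
    }
}
```

Calling `TraceFilter.linesForTrace(allLines, "my-trace-123")` on the interleaved output of the concurrency test would return only the lines of that one request, in the order they were written.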

Production Hardening

What Happens Under Load

Send 50 concurrent requests.

Without MDC, logs interleave and you cannot follow one request.

With MDC, filter by traceId and you reconstruct the timeline instantly.

Concurrency Limits

MDC is thread-local.

If you spawn threads manually or use async pipelines, you must propagate context. Otherwise traceId disappears.

Logging does not solve distributed tracing. It complements it.
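The propagation problem can be shown without any framework. The sketch below uses a plain `ThreadLocal` map as a stand-in for the MDC (it is not the JBoss Logging API) and a hypothetical `withContext` wrapper that captures the caller's context and restores it on the worker thread. All names here are illustrative assumptions.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

// Stand-in for a thread-local MDC; hypothetical, for illustration only.
final class ContextPropagation {

    private static final ThreadLocal<Map<String, String>> CONTEXT =
            ThreadLocal.withInitial(HashMap::new);

    static void put(String key, String value) { CONTEXT.get().put(key, value); }

    static String get(String key) { return CONTEXT.get().get(key); }

    // Captures the caller's context now and installs it on whichever
    // thread later runs the task, restoring the previous state afterwards.
    static Runnable withContext(Runnable task) {
        Map<String, String> captured = new HashMap<>(CONTEXT.get());
        return () -> {
            Map<String, String> workerContext = CONTEXT.get();
            Map<String, String> previous = new HashMap<>(workerContext);
            workerContext.clear();
            workerContext.putAll(captured);
            try {
                task.run();
            } finally {
                workerContext.clear();
                workerContext.putAll(previous);
            }
        };
    }

    // Returns the traceId a worker thread sees, with or without propagation.
    static String traceIdSeenByWorker(boolean propagate) {
        ExecutorService pool = Executors.newSingleThreadExecutor();
        try {
            put("traceId", "my-trace-123");
            String[] seen = new String[1];
            Runnable task = () -> seen[0] = get("traceId");
            pool.submit(propagate ? withContext(task) : task).get();
            return seen[0];
        } catch (Exception e) {
            throw new IllegalStateException(e);
        } finally {
            CONTEXT.remove();
            pool.shutdown();
        }
    }
}
```

Without the wrapper the worker sees no traceId at all; with it, the worker sees the caller's value. Quarkus ships its own context propagation support, so check the current documentation before writing a wrapper like this by hand.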

Security Considerations

Never log:

Full credit card numbers

JWT tokens

Passwords

Logs are persistent. They leak data if you are careless.

Mask sensitive fields before logging.
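One simple way to mask a value is to keep only its last few characters. The following is a minimal sketch; the `Masking` class and the choice of four visible characters are assumptions for illustration, and real masking rules depend on your compliance requirements.

```java
// Hypothetical helper for masking values before they reach a log statement.
final class Masking {

    // Replaces everything except the last four characters with '*'.
    // Values of four characters or fewer are masked completely, so short
    // secrets are never shown in full.
    static String maskAllButLast4(String value) {
        if (value == null || value.isEmpty()) {
            return "";
        }
        if (value.length() <= 4) {
            return "*".repeat(value.length());
        }
        int hidden = value.length() - 4;
        return "*".repeat(hidden) + value.substring(hidden);
    }
}
```

A log call would then pass `Masking.maskAllButLast4(cardNumber)` instead of the raw value, where `cardNumber` is a hypothetical field that does not exist in the order service above. Tokens and passwords are better left out of the message entirely.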

Disk Exhaustion

Always configure rotation:

    quarkus.log.file.enable=true
    quarkus.log.file.path=/var/log/order-service.log
    quarkus.log.file.rotation.max-file-size=10M
    quarkus.log.file.rotation.max-backup-index=5

If logs fill disk, your service crashes. Not hypothetically. It happens.
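The rotation settings above apply to the main log file. The audit handler configured earlier writes to its own file and has no rotation of its own, so it probably needs the same treatment. The fragment below is a sketch that assumes named file handlers accept the same rotation keys as quarkus.log.file; verify the exact property names against the configuration reference for your Quarkus version.

```properties
# Assumption: named file handlers support the same rotation.* keys
# as the default file handler. The values mirror the main log file.
quarkus.log.handler.file.audit.rotation.max-file-size=10M
quarkus.log.handler.file.audit.rotation.max-backup-index=5
```

Audit retention is often a compliance question, so a backup index of 5 may be too low there; archive rotated audit files rather than letting them be deleted.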

Conclusion

We built a real order service and instrumented it properly. We controlled noise with categories, structured logs with JSON, correlated requests with MDC, separated audit streams, and protected performance with async logging. Most important, we turned logging from decoration into a diagnostic tool. Make sure to check the latest documentation for detailed configuration.

The next time production goes dark, you will not scroll randomly. You will filter, correlate, and understand.

Also make sure to check out my logging best practices article:

Quarkus Logging Guide: Configuration, JSON & Best Practices
